More than a decade ago, POWER published a landmark special report, “Information Technology for Powerplant Management,” that documented how plants were beginning to use powerful software applications to improve their performance and economics. At the time, the phrase “islands of automation” was popular. It described how each function—process optimization, computerized maintenance management, performance monitoring, and distributed control system—seemed isolated from the others. The report also suggested that, “today the objective is to integrate IT components into an information engine.”

Ten years later, is the industry meeting that goal?

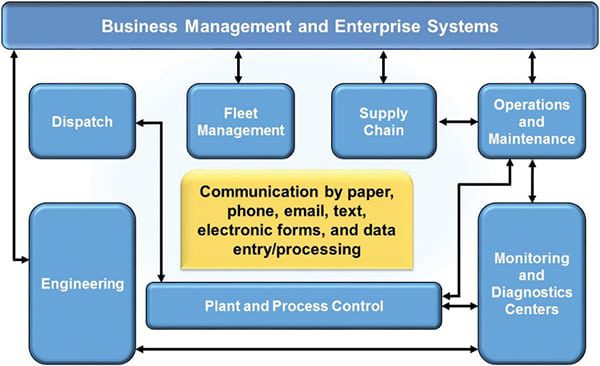

Original research conducted by the author’s firm for multiple clients over the past few months now offers at least a partial answer. The “islands” are indeed moving closer together, and some are even adjacent. But at most plants, they still work independently, rather than as an integrated knowledge management system.

Pearl Street, the author’s firm, believes its research is groundbreaking for three reasons:

- It focuses only on power generation facilities.

- Software suppliers rarely monitor how their products perform after their implementation team leaves the customer’s site.

- Software performance is usually reported piecemeal, plant by plant, and not as industry trends.

As always, we encourage readers to participate in the debate.

Pervasive, but still piecemeal

To give you an idea of which applications are involved, let’s characterize a typical diagnostics and monitoring center for a fleet of plants that all use the same distributed control system (DCS). Following is a list of the center’s systems and functions:

- A plant data repository and historian

- A corporate, business-level system

- An interface between the corporate and plant systems

- Process diagnostic and predictive analytic packages

- Thermal performance (heat rate) monitoring

- Tracking of fuel variables, including cost

- A process-optimizing neural network

- Work, task, and maintenance management

- Tracking of the capacity and availability history of units, with an interface to the independent system operator that dispatches them

- Control of NOx emissions

- Electronic notification and alerts

Most plants that responded to our requests for information said they deploy some or most of these applications in some form, although with different overall strategies. For example, one fleet describes its data management strategy as equipment-centric. Some owner/operators outsource plant monitoring to a third party. Plants also vary in how tightly their software is integrated with the owner’s corporate business system.

Research methodology

Our research included visits to several plants of different types and sizes, conversations with fleet monitoring and diagnostic managers, round-robin discussions during a plant managers’ workshop at the ELECTRIC POWER 2007 Conference & Exhibition, ad hoc discussions with attendees of a recent HRSG Users’ Group meeting, and telephone interviews. To the extent possible, we asked plant and fleet managers and staff similar questions.

Drawing big conclusions from small data sets is often insightful, though perilous. Nevertheless, the conclusions are offered here in the spirit of facilitating an industry-level discussion on this critical, but often neglected, area of plant management.

What owners like

An unequivocal conclusion is that owner/operators are unanimously satisfied with their data repository and historian software applications. All are essentially using one product that has almost become a de facto standard at power stations. Users also report being very satisfied with their predictive analytics package, which uses intense data-crunching algorithms that predict where problems are likely to occur.

What many users don’t care for, however, are their work and task management programs. One theory to explain this common distaste, validated through two fleet managers, goes like this: Because task management software requires many users to input data, it must impose rigid rules on the process, and those rules frustrate users. By contrast, visualization or analytical software engines pull data from the automation system and present them to the user. As a result, users perceive work/task management and visualization/analysis as two distinct functions, largely because the interfaces differ: one demands input, the other delivers output.

Most other programs don’t generate a strong reaction either way, if owner/operators’ responses are viewed in the aggregate.

The most-cited software challenge, say users, is interface usability. Most power plant software doesn’t measure up in that regard, they add. Another issue, noted by more than one user, is version control, which is “annoying and expensive” because people have to be repeatedly retrained on newer versions. One respondent summed up the problem tersely: “When software is easy to use, it gets used; when it isn’t, it doesn’t.”

Another interesting distinction made by users is between software that does something and software that propagates and presents. For example, predictive analytics, thermal performance monitoring, and process optimization are three generic functions that are implemented in the same way. Data are extracted and put into the application, calculations are performed, a new piece of information is generated, and action may be taken based on it. By contrast, other software packages take data and present/organize them differently or better, and propagate them further, for wider use throughout the organization.
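The distinction can be sketched in a few lines of Python. This is only an illustration: the sample records, the baseline heat rate, the tolerance, and every function name are hypothetical, and only the extract, calculate, generate, act sequence comes from the users’ description. Heat rate is taken here as fuel energy input divided by net electrical output, in Btu/kWh.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    """One hourly record pulled from a plant historian (hypothetical fields)."""
    hour: int
    fuel_mmbtu: float  # fuel energy input over the hour, million Btu
    net_mwh: float     # net electrical output over the hour, MWh


def heat_rate_btu_per_kwh(sample: Sample) -> float:
    """Heat rate = fuel energy in / net electricity out."""
    # MMBtu -> Btu is x1e6 and MWh -> kWh is x1e3, so the ratio scales by 1e3.
    return sample.fuel_mmbtu / sample.net_mwh * 1_000.0


def analyze(samples, baseline, tolerance_pct):
    """Software that 'does something': derive new information to act on."""
    limit = baseline * (1 + tolerance_pct / 100.0)
    return [
        (s.hour, round(heat_rate_btu_per_kwh(s)))
        for s in samples
        if heat_rate_btu_per_kwh(s) > limit
    ]


def present(samples):
    """Software that 'propagates and presents': reorganize existing data only."""
    return [f"hour {s.hour:02d}: {s.net_mwh:.0f} MWh" for s in samples]


# Illustrative data, not taken from any plant in the research.
samples = [
    Sample(hour=1, fuel_mmbtu=5_000.0, net_mwh=500.0),
    Sample(hour=2, fuel_mmbtu=5_250.0, net_mwh=500.0),
]
flagged = analyze(samples, baseline=10_000.0, tolerance_pct=2.0)
report = present(samples)
```

Here `analyze` produces something that was not in the historian (the hours whose heat rate exceeds the assumed limit), while `present` only repackages what was already there.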

Following the mother ship

Ten years ago, many in the industry realized that sophisticated IT systems could allow plants to become more autonomous. At that time, it was widely believed that most plants in portfolios would control their own profits and losses while delivering revenue to their corporate owner. However, the research suggests that plants have become less, rather than more, autonomous.

It appears that corporate IT and central engineering, both corporate functions, are increasingly driving software decisions that affect the plants—even if there is, as one respondent put it, “good segregation between business systems and plant systems.” One manager responsible for multiple plants noted that “all plant systems must now integrate with the corporate business system,” which he referred to as the “mother ship.”

There’s an undercurrent to this trend, however. As owner/operators have embraced fleet monitoring and diagnostics (a centralized strategy by nature), plant engineering staffs and expertise have been hollowed out. Some plant managers complain that centralizing engineering makes it harder for them to fully realize the plant performance gains that software makes possible. That’s perhaps inevitable, because one size rarely fits all in today’s world of plants with different designs, configurations, and equipment, operated by staffers with different skill sets. The tension between centralized and decentralized management is palpable and constant, with the former seeming to have the upper hand at present.

Initial vs. final costs

Getting a handle on software costs is always a challenge. IT projects are rarely defined in enough detail at their inception to make estimates of their cost more than wild guesstimates. Usually, “mission creep” sets in to make deployments far more expansive than originally planned. The research has confirmed a rule of thumb, often quoted by software professionals, regarding expenses: the final cost of a software implementation is around five to seven times the cost of the software itself.

Asked to quantify this cost ratio based on their experience, power plant owner/operators cited ranges from 1-2x to 5-10x. One plant manager said it could be as high as 20x, depending on how you define “fully implemented.” Representing the other end of the range was one fleet manager, who pegged the cost ratio at 1-2x because his plant “only used the vendor’s people when they needed them” and instead relied on in-house staff.
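To see what these multiples imply in dollars, the short Python sketch below applies each quoted range to a single license cost. The $100,000 license figure and the labels are assumptions chosen for illustration; only the multiples themselves come from the rule of thumb and the respondents’ answers.

```python
def total_cost_range(license_cost, low_multiple, high_multiple):
    """Return (low, high) fully implemented cost for a given license cost."""
    return license_cost * low_multiple, license_cost * high_multiple


# Multiples quoted in the article; the labels are shorthand for this sketch.
MULTIPLES = {
    "rule of thumb": (5, 7),
    "in-house staffing": (1, 2),
    "upper range cited": (5, 10),
    "high-end estimate": (20, 20),
}

LICENSE_COST = 100_000  # hypothetical license cost, in dollars

estimates = {
    label: total_cost_range(LICENSE_COST, low, high)
    for label, (low, high) in MULTIPLES.items()
}

for label, (low, high) in estimates.items():
    print(f"{label:>18}: ${low:,} to ${high:,}")
```

Under these assumptions, the same package could end up costing anywhere from its license price to 20 times that amount, which is why the definition of “fully implemented” matters so much.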

A prerequisite for keeping costs in check, meeting project schedules, and maintaining continuity of software use at the plant after a new deployment is having the right team in place. More than one owner/operator stressed that success requires at least three elements: dedicated champion(s) at the plant to guide the project, staff or corporate person(s) intimately familiar with the software, and the vendor’s experts. One user complained that his plant’s new process optimization software is troublesome because the supplier limits access to the package’s source code. He added that the supplier of the package it replaced was more open about how the software does what it does.

The modern DCS: A fifth wheel?

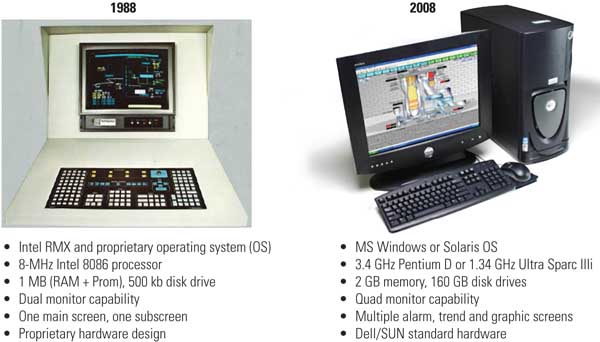

One of the more intriguing discoveries of the research (and one that calls for further investigation) is the relationship between the plant DCS and all of these separate applications. If you visit the web sites of the major power plant DCS providers, you’ll realize that most of their systems duplicate (or at least attempt to duplicate) the capabilities and functionality of the diverse software packages available from non-DCS vendors.

To understand the importance of this issue, consider the software complement in a desktop PC. It comes loaded with a suite of applications from many suppliers. But the vast majority of users only employ a small fraction of the suite’s capabilities. One reason for that is redundant functionality. As one interviewee recognized, “Although Microsoft includes anti-virus software in its suite, most everyone still uses Norton for protection.”

Another respondent shed light on the compatibility consequences of using multiple applications. He reported that although the DCS recently retrofitted to one of his plant’s units includes a data historian, the DCS still was configured to communicate with the historian used by other plants of the fleet, for consistency’s sake. Similarly, a DCS may include a work management (WM) application, but it still must interface to the legacy WM package that other plants use. The need to remain backward-compatible with existing applications is a big reason why software supplied with a DCS often isn’t used to its full extent.

A decade ago, it was generally assumed, for good reason, that most if not all of the functionality of emerging applications would become embedded in DCS offerings. The DCS could then serve as a plant’s common knowledge management “platform.” Today, however, data from the DCS still are exported through standard communication protocols, usually via the plant historian. In most cases, all “layered” software functions for managing data and information and sharing them throughout the organization are fed by the historian.

These integration and compatibility issues spawn a variety of interesting considerations and questions that deserve to be addressed and answered:

- Surely part of the reason why software applications have become layered has been distributed control systems’ fitful migration away from proprietary designs and toward open architectures. Indeed, almost all modern software packages are developed for open, PC-based environments.

- At most plants, the existing data historian enjoys a “first-mover advantage.” Fleet owners have standardized on one system and made it the interface both between the DCS and other software applications and between plant systems and the corporate mainframe. One user described this link as the plant’s “communications conduit.”

- Most recent DCS deployments have included the installation of functionality that may already exist, increasing final project costs unnecessarily. Much of the redundant functionality comes from applications layered outside the DCS.

- Is there unused capability in a plant’s existing system that could be exploited? One respondent said yes, vaguely quantifying it as “massive.” This is another piece of evidence to support the validity of the desktop PC analogy.

- One fleet center manager reported that his company has spent $200 million to upgrade the distributed control systems of its centrally monitored plants. But he also said that another $10 million has been spent on software applications layered around them for the remote diagnostics centers.

The last point raises some philosophical questions. DCS retrofits often include the installation of lots of new hardware, such as sensors and transmitters. Even after subtracting their costs from the project budget, a large disparity in cost, and therefore in value, remains between the DCS and external software functionality. How should these two values be apportioned? In other industries, knowledge about an asset has increased in value relative to the value of the asset itself. Will that be the case for power plants?
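The size of that disparity can be put in rough numbers. The Python sketch below uses the two spending figures the fleet center manager reported; the hardware share of the DCS budget is not given anywhere in the research, so the 50% value used here is purely an assumption, as are the function and variable names.

```python
DCS_UPGRADE_MUSD = 200.0      # reported DCS upgrade spending, $ million
LAYERED_SOFTWARE_MUSD = 10.0  # reported layered-software spending, $ million


def dcs_to_software_ratio(hardware_share):
    """Ratio of non-hardware DCS spending to layered-software spending.

    hardware_share is the fraction of the DCS budget that went to sensors,
    transmitters, and other hardware. No figure was reported for it, so any
    value passed in is an assumption.
    """
    if not 0.0 <= hardware_share <= 1.0:
        raise ValueError("hardware_share must be between 0 and 1")
    return DCS_UPGRADE_MUSD * (1.0 - hardware_share) / LAYERED_SOFTWARE_MUSD


raw_ratio = dcs_to_software_ratio(0.0)      # nothing subtracted for hardware
half_hardware = dcs_to_software_ratio(0.5)  # hypothetical 50% hardware share

# Layered software as a fraction of the combined spending.
software_share = LAYERED_SOFTWARE_MUSD / (DCS_UPGRADE_MUSD + LAYERED_SOFTWARE_MUSD)

print(f"raw ratio: {raw_ratio:.0f}:1")
print(f"ratio with half the DCS budget as hardware: {half_hardware:.0f}:1")
print(f"layered software share of total: {software_share:.1%}")
```

Even if half the DCS budget were hardware, the remaining DCS spending would still be ten times the layered-software spending in this example.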

During the research interviews, one fleet manager spoke at length of the “unintended consequences” of moving to a PC platform. One is that PCs don’t work very well in real-time environments. Another is that, despite automatic updates, it’s still extremely time-consuming to download all the security patches needed to protect PCs from new and more-pernicious viruses and cyber-intrusion schemes that hackers cook up worldwide on a daily basis.

Another unintended consequence of the move to open, PC-based architectures is being felt in remote alarm management and monitoring. One fleet manager reported that 35% to 40% of the alarms sounded at his plants are “system” alarms—malfunctions of the control system, rather than of plant equipment. Lately, there have been more alarms of greater diversity, he added.

Judging from the research results alone, integrated knowledge management still has plenty of room to grow in value. It will be interesting to see whether power plants being planned and built today will consider their knowledge management system (comprising the DCS and all of the functional software needed to economically optimize plant operations and performance) an “island” in the way that plants a decade ago considered their boilers and turbines islands for contractual purposes. At a practical level, will new plant owners make one contractor responsible for integrating all of the software functionality within their plant into a logical and optimized whole?

Point sources

Quotations from owner/operators of different types of plants contacted as part of the research project provided interesting anecdotal information. Astute readers may be able to distill some trends from these anecdotes:

- “No one has written a CMMS [computerized maintenance management system] program to fit a combined-cycle plant.” The respondent’s plant is now suffering through the fifth revision of its CMMS software.

- “The best plant engineers and operators go to the M&D [monitoring and diagnostics] center.” The respondent called the personnel migration a “key factor” in the center’s success, in part because it overcame the remaining plant staffers’ initial, “big brother” attitude toward the project.

- “We could do lots of performance monitoring in the DCS, but our approach is to do it through the data historian,” said the manager of a fleet of combined-cycle plants.

- “Corporate IT runs everything. All plant software has to be blessed by IT”—the manager of another combined-cycle fleet.

- “We want to incentivize our [operators] to reduce plant costs by correlating them with financial losses avoided by our predictive analytics package”—the manager of a coal-fired fleet.

- “Our knowledge management system helps us manage staff reductions by capturing expertise”—the manager of another coal-fired fleet.

- “The impact of fuel characteristics is a new variable for our operators”—a staffer at a plant that recently switched to Powder River Basin coal.

Final thoughts

Clearly, the industry goal stated in the POWER article a decade ago—integrating diverse plant IT software and systems into a versatile and powerful knowledge management platform—remains elusive. Too many pieces are still missing. Among them are the ability to analyze and handle fuel in real time and a way to optimize the operation of pollution control devices within a plant’s overall process control, automation, and optimization scheme. Unfortunately, it will take another 10 years to determine whether the next generation of power plants being designed and built today delivers more of the considerable promise of integrated knowledge management.

—Jason Makansi (jmakansi@pearlstreetinc.com) is president of Pearl Street Inc., a technology deployment services firm.