As artificial intelligence (AI) training reshapes data center power system design, early adopters using battery energy storage systems (BESS), microgrid control, and unified automation are positioning themselves as viable grid participants, while reducing operational costs and improving reliability.

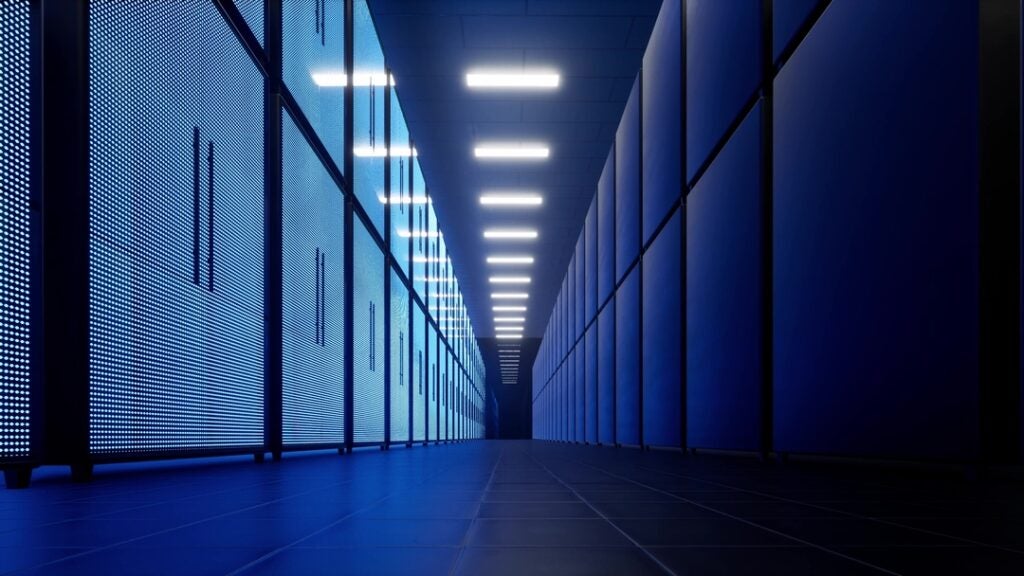

The data centers built to train increasingly capable artificial intelligence (AI) models are powerhouses in their own right. These facilities use banks of hardware such as graphics processing units (GPUs) and tensor processing units (TPUs) to process enormous volumes of data.

As they process data, these GPUs, TPUs, and their associated support systems, including heating, ventilation, and air conditioning (HVAC) and server cooling systems, also consume massive, but unpredictable, amounts of power. Large numbers of processors switch on and off in unison, creating near-instantaneous swings of hundreds of megawatts (MW) at a time. These swings are so severe that they can destabilize either onsite islanded power generation or, more concerningly, any power grid to which the facility is connected.

AI training loads are massive and fast-changing, and the facilities that run them are fundamentally different from traditional data centers. They also behave differently from the other large power consumers utilities have historically served.

For example, a site operating a large arc furnace also draws tremendous amounts of power. However, the power consumption of an arc furnace or industrial motor is staggered and predictable. A facility operating such equipment can notify the utility that it plans to bring the unit online, and the utility’s operators can prepare for the load swing. These loads also ramp up less abruptly than AI training loads, and once online they run continuously for an expected period, making them far easier to anticipate and manage.

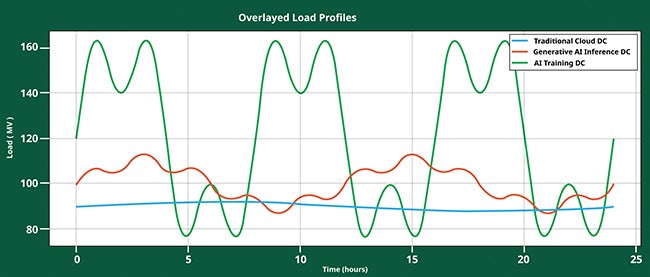

In contrast, processors in an AI training facility ramp up together, draw enormous power, then drop off together just as suddenly as they came on. The result is frequency spikes, sudden swings in generation loading, and mechanical stress on the turbines often used to power these facilities. Large deviations in frequency and voltage can cause protective relays to trip and cut off power to the data center loads. If the facility is islanded, such a trip affects only the data center, but because power reliability is critical for these facilities, that is still a major impact. If the data center is connected to the utility, the effects can be broader, including destabilizing the grid (Figure 1).

Figure 1. Various types of data centers (DCs) can have drastically different load profiles. Courtesy: Emerson

The Grid Reacts

As a result of these massive, unpredictable loads, AI training data centers cannot simply connect to the grid. Doing so risks widespread outages caused by frequency swings that even the grid’s system inertia cannot absorb. In traditional grid architectures, protection relays trip when they detect undercurrent, overcurrent, or abnormal frequency. In a worst-case scenario, a single AI training center could trigger cascading relay trips that affect other parts of the grid, causing power outages for thousands of residential and business customers connected to it.
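To put a rough number on the role of inertia, the widely used swing-equation approximation relates a sudden load step to the initial rate of change of frequency (RoCoF). The sketch below is illustrative only: the inertia constant, system sizes, and step size are assumed values chosen for the example, not figures from the article or from any real grid.

```python
def initial_rocof_hz_per_s(load_step_mw, system_mva, inertia_h_s, nominal_hz=60.0):
    """Initial RoCoF from the swing equation: df/dt = -dP * f0 / (2 * H * S).

    load_step_mw: sudden load increase (positive) or drop (negative), in MW
    system_mva:   rating of the synchronized generation that sees the step (assumed)
    inertia_h_s:  aggregate inertia constant H in seconds (assumed)
    """
    return -load_step_mw * nominal_hz / (2.0 * inertia_h_s * system_mva)


# Hypothetical comparison: the same 200 MW step on a small island and on a large system.
small_island = initial_rocof_hz_per_s(load_step_mw=200, system_mva=1_000, inertia_h_s=4.0)
large_system = initial_rocof_hz_per_s(load_step_mw=200, system_mva=80_000, inertia_h_s=4.0)

print(f"Small island: {small_island:.3f} Hz/s")
print(f"Large system: {large_system:.5f} Hz/s")
```

Under these assumptions, the smaller the portion of the system that has to absorb the step, the faster frequency moves, which is why the same load swing is far harder on an islanded plant or a weak part of the grid.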

Such a scenario is not tenable, and utilities and the states they operate in have taken notice. Recently, groups like the Electric Reliability Council of Texas (ERCOT), the organization that manages most of the electric grid in Texas, have begun implementing requirements to prevent outages caused by AI training data centers. New laws like Texas Senate Bill 6 (SB 6) require large power consumers like AI data centers to include buffering to stabilize the energy they take from or put into the grid. But even in areas where there is no regulation, utilities are refusing connection requests for AI training data centers because the grid simply cannot handle their load swings.

Islanded Power Is Not a Long-Term Solution

Today, many new hyperscale AI training data centers are being built with islanded power plants supplying their energy. Whether they are managed by the data center’s parent organization or an external service provider, these generation facilities are often constructed near the data center to provide a direct, uninterrupted source of private power for the large and complex loads of AI training.

Typically, these islanded facilities consist of a wide array of gas turbines. Building out an onsite power facility provides organizations with a strategy to get expensive GPU and TPU technology online quickly, while avoiding interconnection delays that might leave expensive racks idle.

However, islanded generation is expensive, and fuel costs are high. Because their core competency is training AI rather than generating power, many data center operators do not plan well enough to purchase fuel cost-effectively. Moreover, that same lack of power plant expertise means they can rarely match the operating efficiency a utility can achieve.

On top of these challenges, swings in processing load frequently cause high-cycle fatigue in the turbines themselves, leading to costly repairs, unplanned downtime, or both, which can jeopardize the 99.99% uptime AI training sites strive to achieve. Ultimately, islanded power is only a stopgap. AI training data centers will eventually need the efficient, cost-effective power provided by connection to a utility.
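For a sense of how little room a 99.99% uptime target leaves, the short calculation below converts an uptime fraction into allowable downtime per year. It is plain arithmetic, assuming a 365-day year; the function name is only illustrative.

```python
def allowed_downtime_minutes_per_year(uptime_fraction, days_per_year=365):
    """Minutes per year a site can be down while still meeting the uptime target."""
    return (1.0 - uptime_fraction) * days_per_year * 24 * 60


four_nines = allowed_downtime_minutes_per_year(0.9999)
print(f"99.99% uptime allows about {four_nines:.1f} minutes of downtime per year")
```

That works out to less than an hour per year, so even a single unplanned turbine outage can consume the entire annual budget.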

Battery Storage Provides Stabilization

AI training data centers would much prefer grid power for cost, reliability, and scalability. Today, however, interconnection timelines are extremely long. Most utilities do not have sufficient capacity and infrastructure to satisfy and support the load of hyperscale data centers. In addition, the data centers themselves play a role in the complexity because they need to provide a load profile that will not destabilize the grid. Both elements, producer and consumer of power, must be in place before data centers can be connected.

One way data center operators are meeting this challenge is via their battery energy storage systems (BESS). While small data centers often have an uninterruptible power supply (UPS) array for facility power backup, the need for a BESS is unique to hyperscalers performing AI training.

A BESS can act as a shock absorber to make AI training loads more grid compatible. First, and most importantly, a properly engineered and configured BESS can deliver power to alleviate the instantaneous load spikes of processor arrays because the excess load required when the processors ramp up is fed by the BESS instead of the grid. Conversely, excess generation (or suddenly reduced load) can be used to recharge the batteries. The load profile to the grid is more even because the BESS manages excess demand and generation, instead of passing it along.
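The shock-absorber behavior described above can be sketched as a simple ramp-rate limiter: the grid draw is only allowed to change by a fixed amount per time step, and the BESS discharges or charges to cover the difference. This is a minimal illustration under stated assumptions, not a vendor control algorithm. The ramp limit, battery ratings, and load profile are all hypothetical, and losses and inverter dynamics are ignored.

```python
def smooth_with_bess(load_mw, max_grid_ramp_mw, bess_power_mw,
                     bess_energy_mwh, soc_mwh, step_hours):
    """Limit how fast grid draw changes; the BESS covers the difference.

    Returns (grid_mw, bess_mw) lists. Positive bess_mw means discharging.
    """
    grid_mw, bess_mw = [], []
    grid_prev = load_mw[0]
    for load in load_mw:
        # Let grid draw move toward the load, but no faster than the ramp limit.
        step = max(-max_grid_ramp_mw, min(max_grid_ramp_mw, load - grid_prev))
        grid_target = grid_prev + step
        # Ask the BESS to supply (or absorb) whatever the grid does not, within its rating.
        power = max(-bess_power_mw, min(bess_power_mw, load - grid_target))
        # Respect stored energy when discharging and remaining headroom when charging.
        if power > 0:
            power = min(power, soc_mwh / step_hours)
        else:
            power = max(power, -(bess_energy_mwh - soc_mwh) / step_hours)
        soc_mwh -= power * step_hours
        grid_now = load - power  # anything the BESS cannot cover falls back on the grid
        grid_mw.append(grid_now)
        bess_mw.append(power)
        grid_prev = grid_now
    return grid_mw, bess_mw


# Hypothetical profile in 1-second steps: 50 MW idle, a 250 MW training burst, then idle.
load = [50.0] * 5 + [250.0] * 10 + [50.0] * 5
grid, bess = smooth_with_bess(load, max_grid_ramp_mw=20.0, bess_power_mw=200.0,
                              bess_energy_mwh=50.0, soc_mwh=25.0, step_hours=1 / 3600)

largest_raw_step = max(abs(b - a) for a, b in zip(load, load[1:]))
largest_grid_step = max(abs(b - a) for a, b in zip(grid, grid[1:]))
print(f"Largest step seen by the processors' supply: {largest_raw_step:.0f} MW")
print(f"Largest step seen by the grid:               {largest_grid_step:.0f} MW")
```

In this example the battery discharges during the ramp-up and recharges during the drop-off, so the grid sees a gradual ramp instead of a 200 MW step. A real system would also have to manage state of charge over many cycles, which this sketch does not attempt.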

This ability to use excess generation and demand to balance loads is one of the most practical ways to meet emerging regulatory requirements and convince utilities that connectivity is viable. Accomplishing this goal, however, is not as simple as installing battery arrays and hoping for the best. A successful load-balancing BESS application must be carefully orchestrated (Figure 2).

Figure 2. Efficient automation is essential to successfully managing a load-balancing battery energy storage system (BESS). Courtesy: Emerson

Supervisory Control Provides Stability

Stabilizing an AI training data center requires a microgrid architecture with unified supervisory control. Today’s large power islands primarily consist of gas turbines, but some include solar, wind, BESS, or other generation assets. Many of these sources have variable production rates, and the loads they serve change constantly. If demand exceeds generation (for example, if 22 MW are required and only 20 MW are available), the system must be able to instantly shed noncritical loads or dispatch fast-responding assets to maintain balance.
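The 22 MW versus 20 MW example can be sketched as a small decision routine. The version below assumes one possible policy, dispatching fast-responding assets first and then shedding noncritical loads in priority order; a real controller might order these differently. The asset and load names and sizes are hypothetical.

```python
def cover_shortfall(demand_mw, available_mw, fast_assets, sheddable_loads):
    """Close a generation shortfall by dispatching fast assets, then shedding loads.

    fast_assets:     list of (name, capacity_mw), in preferred dispatch order
    sheddable_loads: list of (name, size_mw), least critical first
    Returns (actions, unresolved_mw).
    """
    shortfall = demand_mw - available_mw
    actions = []
    for name, capacity_mw in fast_assets:
        if shortfall <= 0:
            break
        used = min(capacity_mw, shortfall)
        actions.append(("dispatch", name, used))
        shortfall -= used
    for name, size_mw in sheddable_loads:
        if shortfall <= 0:
            break
        actions.append(("shed", name, size_mw))  # loads are shed whole, not partially
        shortfall -= size_mw
    return actions, max(shortfall, 0.0)


# The article's example: 22 MW required, 20 MW available.
actions, unresolved = cover_shortfall(
    demand_mw=22.0,
    available_mw=20.0,
    fast_assets=[("bess", 1.5)],                                # hypothetical asset
    sheddable_loads=[("office_hvac", 0.4), ("ev_chargers", 0.3)],  # hypothetical loads
)
for action in actions:
    print(action)
print("Unresolved shortfall (MW):", unresolved)
```

The routine itself is trivial; the hard part in practice is executing it within milliseconds across equipment from different vendors, which is the problem the next paragraphs address.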

However, balancing loads instantaneously becomes complicated when operators must navigate a different original equipment manufacturer (OEM) control system on each generation asset. Millisecond decision-making is critical, because the amount of energy that can move in a single cycle is large enough to trip relays and upstream devices, taking larger portions of the system offline. Human operators cannot make such decisions and changes fast enough without assistance from automation.
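As a rough illustration of how much energy is involved in a single cycle, the calculation below multiplies a power swing by the duration of one AC cycle. The 200 MW swing is an assumed stand-in for the "hundreds of megawatts" mentioned earlier, and 60 Hz is assumed as the nominal frequency.

```python
def energy_per_cycle_mj(swing_mw, nominal_hz=60.0):
    """Energy (MJ) represented by a power swing sustained for one AC cycle.

    MW multiplied by seconds gives megajoules directly.
    """
    cycle_seconds = 1.0 / nominal_hz
    return swing_mw * cycle_seconds


swing_mw = 200.0  # assumed swing size
energy_mj = energy_per_cycle_mj(swing_mw)
print(f"One cycle lasts {1000 / 60:.1f} ms")
print(f"A {swing_mw:.0f} MW swing moves about {energy_mj:.2f} MJ in that time")
```

A few megajoules arriving or disappearing within roughly 17 ms is well inside the window in which protective relays operate, and far outside the reaction time of a human operator.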

A supervisory control and data acquisition (SCADA) system built specifically for power operations can bring together the varying control architectures across a site and present them to operators as a consolidated operating environment, with a common look and feel over the entire fleet of generating resources. Operators specify the power they need through the SCADA software’s single interface. Using direct connections to the individual control systems on each asset, the SCADA system then supplies that power from the best available combination of sources, dramatically simplifying operations and stabilizing loads. Operators manage fewer disparate systems, resulting in higher reliability, easier redundancy, and simpler operations.
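One way to picture "the best available combination of sources" is a merit-order dispatch, where the requested setpoint is filled from the cheapest available assets first. This is a minimal sketch that assumes "best" means lowest marginal cost; the article does not specify the criterion, and a real SCADA system would also weigh ramp rates, reserves, and equipment limits. The asset list and costs are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Asset:
    name: str
    available_mw: float
    cost_per_mwh: float  # assumed marginal cost, used only for ordering


def dispatch(setpoint_mw, assets):
    """Fill the setpoint from the cheapest available assets first.

    Returns (plan, unserved_mw), where plan maps asset name to dispatched MW.
    """
    plan = {}
    remaining = setpoint_mw
    for asset in sorted(assets, key=lambda a: a.cost_per_mwh):
        take = min(asset.available_mw, max(remaining, 0.0))
        plan[asset.name] = take
        remaining -= take
    return plan, max(remaining, 0.0)


# Hypothetical fleet behind a single supervisory interface.
fleet = [
    Asset("turbine_a", available_mw=40.0, cost_per_mwh=45.0),
    Asset("turbine_b", available_mw=40.0, cost_per_mwh=52.0),
    Asset("solar", available_mw=15.0, cost_per_mwh=0.0),
    Asset("bess", available_mw=10.0, cost_per_mwh=80.0),
]
plan, unserved = dispatch(setpoint_mw=70.0, assets=fleet)
print(plan)
print("Unserved MW:", unserved)
```

The operator supplies one number, the setpoint, and the allocation across assets is computed for them, which is the simplification a unified interface is meant to provide.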

Planning Is Key

Long-term reliability requires both real-time control and forward-looking asset planning. Operators must forecast load, renewables output, turbine availability, and more to make the best, most cost-effective decisions day to day. For an AI training data center that requires 99.99% uptime, tracking the variables necessary for such planning cannot be accomplished on spreadsheets. Teams instead need generation management software seamlessly integrated with the SCADA system to schedule effectively.

Generation management solutions help operators forecast demand and build a schedule that meets operational requirements. They can determine which assets need to run, for how long, and in what sequence to provide the best results based on anticipated demand and external environmental factors. This ability to predict and plan not only improves operations and readies the facility for grid connection, but also helps teams immediately reduce the cost of islanded generation by allowing them to purchase fuel days ahead of time based on predicted need, saving significant cost over the spot market.
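The fuel-purchasing benefit can be illustrated with a simple day-ahead estimate: forecast the load, subtract expected renewable output, and convert the remaining turbine energy into fuel using a heat rate. Everything numeric below (the forecasts, the heat rate, and both fuel prices) is an assumed value for illustration, and the function names are hypothetical rather than part of any product.

```python
def day_ahead_fuel_mmbtu(forecast_load_mw, forecast_renewable_mw,
                         heat_rate_mmbtu_per_mwh, step_hours=1.0):
    """Estimate turbine fuel needed to cover forecast load net of renewables."""
    total = 0.0
    for load, renewable in zip(forecast_load_mw, forecast_renewable_mw):
        turbine_mw = max(load - renewable, 0.0)  # turbines cover what renewables do not
        total += turbine_mw * step_hours * heat_rate_mmbtu_per_mwh
    return total


def purchase_savings(fuel_mmbtu, day_ahead_price, spot_price):
    """Cost difference between buying the forecast fuel ahead and buying it on the spot."""
    return fuel_mmbtu * (spot_price - day_ahead_price)


# Hypothetical 24-hour forecast: flat 100 MW load, 20 MW of solar for 8 midday hours.
load_forecast = [100.0] * 24
solar_forecast = [0.0] * 8 + [20.0] * 8 + [0.0] * 8

fuel = day_ahead_fuel_mmbtu(load_forecast, solar_forecast, heat_rate_mmbtu_per_mwh=9.0)
savings = purchase_savings(fuel, day_ahead_price=3.00, spot_price=3.40)
print(f"Forecast fuel need: {fuel:,.0f} MMBtu")
print(f"Savings versus spot at the assumed prices: ${savings:,.0f}")
```

The savings figure depends entirely on the assumed price spread and on forecast accuracy, so it should be read as a template for the calculation rather than an expected result.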

Ready for Today, and Tomorrow

AI training data centers represent a massive shift in power system and data center design, and the grid is not yet ready. The loads these sites impose are orders of magnitude more dynamic than anything that has preceded them, and grid operators, regulators, and data center developers are all adapting in real time.

The industry is growing fast and is extremely competitive, so adapting in real time continues to make sense, even when it comes with higher costs. However, those higher costs will not be sustainable long term. Islanded operation is a temporary necessity, not a long-term strategy.

Therefore, the industry must rethink interconnection standards, buffering requirements, and microgrid integration. Those that do so today—using BESS, microgrid control, generation management software, and unified supervisory automation—are preparing for increased success, achieving 99.99% uptime, and becoming viable grid participants, while simultaneously driving down costs to capture competitive advantage.

—Brett Benson is the director of global renewable solutions business development for Emerson’s Power and Water Solutions business, and TJ Surbella is a director at Emerson’s Aspen Technology business.