By installing optimal components, fine-tuning utility processes, and partnering with the right suppliers, hyperscalers are improving data center cooling loop performance, which in turn increases operational uptime, equipment safety, facility reliability, sustainability, and profitability.

As artificial intelligence (AI) becomes an increasingly common part of daily routines, data centers are undergoing significant architectural shifts. Driven by the demand for AI applications, machine learning, and high-performance computing (HPC) in both professional and consumer circles, the need for computational power is growing at an unprecedented rate. Today’s advanced chips enable these increased workloads, but they also draw significantly more power than their predecessors, fundamentally altering the thermal dynamics of the white spaces in server halls.

This in turn is driving the need for significant increases in power generation, both onsite and from utilities. In fact, the International Energy Agency (IEA) projects data center power needs in the U.S. to triple from 2024 levels by 2030 to meet increased AI workload demands. Globally, electricity consumption by data centers is expected to surpass 950 TWh annually by 2030, up from about 450 TWh in 2024.

Cooling accounts for a significant share of data center power use, which has led facility engineers and operators to prioritize more efficient ways to dissipate the immense heat generated by high-powered computing components. This is driving substantial facility infrastructure reconfigurations, with the transition from air to liquid cooling among the most prominent.

As collaborators with hyperscalers, leading industrial instrumentation suppliers see firsthand the growing need to balance peak computational performance with ambitious sustainability and energy-efficiency targets—particularly given the enormous power demands of these facilities. While liquid cooling offers superior heat-transfer performance, it can also introduce new process-control complexities.

High-Density Computing Challenges

Enterprise-scale information technology (IT) infrastructure historically relied on air cooling, in which computer room air conditioning (CRAC) units and large fans circulate conditioned air across server racks. However, today’s higher-performing chips produce heat flux densities beyond what air cooling can effectively remove.

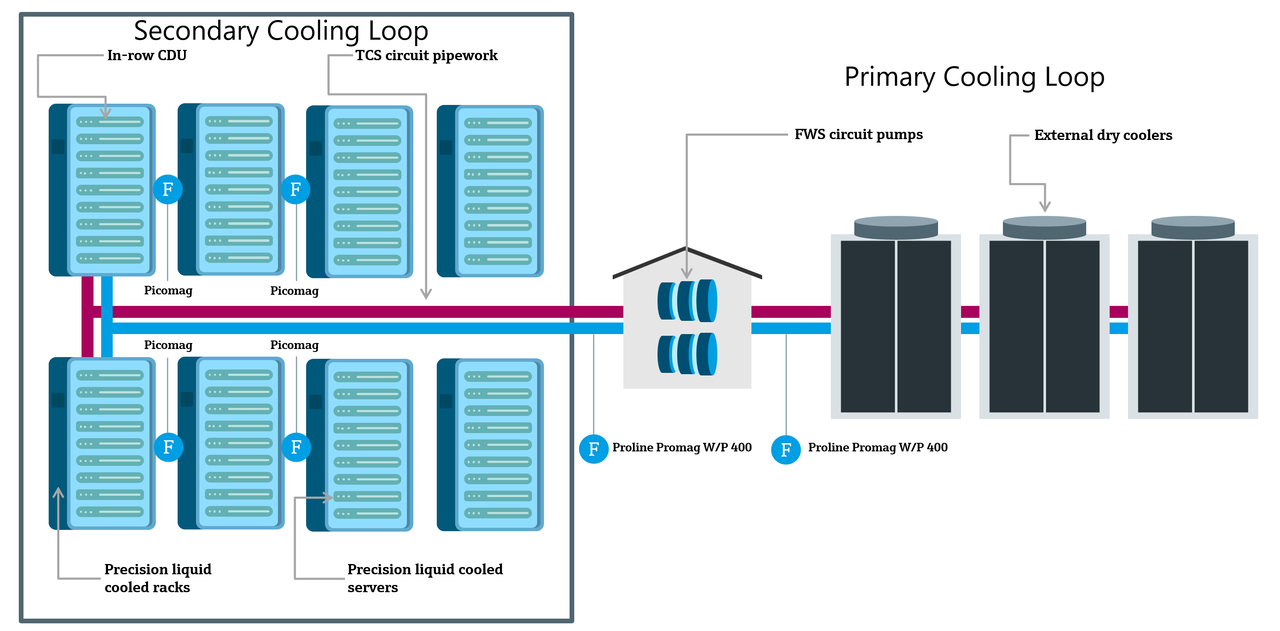

This prompted the transition to liquid cooling media, typically highly purified water or water-glycol mixtures, because liquids absorb and transport heat far more effectively than air due to their higher specific heat capacity and density. In addition to handling increased thermal output from higher power chips, these liquid systems can effectively cool greater server rack densities in smaller footprints. Yet circulating liquid near multi-billion-dollar IT equipment requires considerable engineering care (Figure 1).
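The heat-transport advantage of liquid can be illustrated with the steady-state energy balance Q = ṁ · cp · ΔT. The following is a minimal sketch, not a design calculation: the fluid properties are approximate textbook values near room temperature, and the 100 kW heat load and 10 K coolant temperature rise are hypothetical figures chosen only for illustration.

```python
# Approximate fluid properties near room temperature (textbook values).
WATER = {"density_kg_m3": 997.0, "cp_j_per_kg_k": 4186.0}
AIR = {"density_kg_m3": 1.2, "cp_j_per_kg_k": 1005.0}


def volumetric_flow_m3_s(heat_load_w, fluid, delta_t_k):
    """Volume flow needed to carry heat_load_w with a coolant temperature rise of delta_t_k.

    Uses the steady-state energy balance Q = m_dot * cp * delta_T.
    """
    if delta_t_k <= 0:
        raise ValueError("delta_t_k must be positive")
    mass_flow_kg_s = heat_load_w / (fluid["cp_j_per_kg_k"] * delta_t_k)
    return mass_flow_kg_s / fluid["density_kg_m3"]


# Hypothetical example: a 100 kW heat load with a 10 K coolant temperature rise.
water_flow = volumetric_flow_m3_s(100_000, WATER, 10.0)
air_flow = volumetric_flow_m3_s(100_000, AIR, 10.0)

print(f"Water: {water_flow * 60_000:.0f} L/min")
print(f"Air:   {air_flow:.1f} m^3/s")
print(f"Air needs about {air_flow / water_flow:.0f}x the volume flow of water")
```

Under these assumptions, air must move a few thousand times more volume than water to carry the same heat, which is why liquid loops can serve denser racks in smaller footprints.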

Hyperscalers face several daily operating challenges relating to instrumentation and measurement accuracy. The first is a commitment to extremely high availability in service level agreements with end users, which leaves no margin for thermal management failure. An underperforming cooling loop can cause substantial hardware damage and quickly result in facility downtime. Maintaining precise cooling therefore requires industrial-grade reliability from every instrument and control element in the process.

Second, space inside server rooms is a premium commodity given the rising demand for HPC and AI applications. As a result, cooling distribution units (CDUs) and rack-level cooling skids are increasingly tightly packed among a growing volume of servers to maximize space available for computing components. This often requires routing pipes through narrow and twisting corridors, leaving little room for straight pipe runs that many flowmeters need to make accurate measurements.

Finally, facility performance requirements call for the capabilities of today’s most accurate and advanced instruments, while cybersecurity policies require that wireless communication such as Bluetooth be deactivated inside white spaces. As a result, only a narrow range of compliant devices also retains the top-level digital diagnostic capabilities needed to maximize efficiency and reliability.

Modern Instrumentation Increases Efficiency and Capabilities in IT Spaces

Operating high-efficiency dual-loop cooling systems requires a robust array of modern instruments. To both safeguard infrastructure and optimize thermal setpoints and energy consumption, facilities are increasingly investing in industrial-grade technology to replace commercial-grade devices, which are typically less accurate and more prone to failure.

Overcome Spatial Constraints with 0xDN Flow Measurement

Flow monitoring is critical for maintaining efficient thermal transfer and water usage effectiveness (WUE), the ratio of water used for cooling to the energy consumed by IT equipment, typically expressed in liters per kilowatt-hour. However, most flowmeters traditionally deployed in liquid cooling environments require significant lengths of straight pipe, often five to 10 pipe diameters, upstream and downstream of the sensor to condition the fluid and ensure a uniform flow profile for accurate measurement. In the dense and space-constrained architectures of modern data centers, providing these straight runs is inconvenient and often impractical.
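As a rough illustration of the WUE ratio described above, the sketch below divides annual water use by annual IT energy. The function name and the facility figures are hypothetical and serve only to show the arithmetic.

```python
def water_usage_effectiveness(annual_water_liters, annual_it_energy_kwh):
    """Return WUE in liters per kilowatt-hour of IT energy."""
    if annual_water_liters < 0:
        raise ValueError("annual_water_liters must not be negative")
    if annual_it_energy_kwh <= 0:
        raise ValueError("annual_it_energy_kwh must be positive")
    return annual_water_liters / annual_it_energy_kwh


# Hypothetical facility: 18 million liters of water and 10 GWh of IT energy per year.
wue = water_usage_effectiveness(18_000_000, 10_000_000)
print(f"WUE = {wue:.2f} L/kWh")
```

Because the numerator depends on metered water volumes, the quality of the WUE figure is only as good as the flow measurements behind it.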

To overcome this limitation, leading suppliers offer advanced full-bore electromagnetic flowmeters with 0xDN (zero diameter) capabilities, meaning no straight-run requirements upstream or downstream of the flowmeter. This is made possible with advanced measuring tube designs, multiple electrodes in the sensor, and sophisticated signal processing algorithms, enabling 0xDN technology to provide precise flow measurements regardless of flow profile distortion (Figure 2).

This significantly increases process flexibility, allowing installation immediately downstream of a 90-degree elbow, a pump, or a control valve. It also empowers mechanical designers to further reduce the footprint of cooling skids without sacrificing measurement integrity, providing more space dedicated to the high-value IT components that drive profitability.
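To put the footprint effect in numbers, the sketch below compares the straight pipe reserved for a conventional flowmeter against a 0xDN installation. The 50 mm nominal diameter and the split of 10 diameters upstream and 5 downstream are assumptions picked from within the five-to-10-diameter range mentioned earlier, not requirements of any particular device.

```python
def straight_run_mm(nominal_diameter_mm, upstream_diameters, downstream_diameters):
    """Total straight pipe length (mm) reserved around a flowmeter."""
    if min(nominal_diameter_mm, upstream_diameters, downstream_diameters) < 0:
        raise ValueError("inputs must not be negative")
    return nominal_diameter_mm * (upstream_diameters + downstream_diameters)


# Hypothetical DN50 line: conventional meter vs. a 0xDN meter.
conventional = straight_run_mm(50, upstream_diameters=10, downstream_diameters=5)
zero_dn = straight_run_mm(50, upstream_diameters=0, downstream_diameters=0)

print(f"Conventional: {conventional} mm of straight pipe")
print(f"0xDN:         {zero_dn} mm of straight pipe")
print(f"Length freed on the skid: {conventional - zero_dn} mm")
```

The saving scales with pipe diameter and repeats at every measurement point, so the effect grows on skids with larger lines or several flowmeters.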

Simplify Installation and Increase Flexibility with Non-Invasive Temperature Measurement

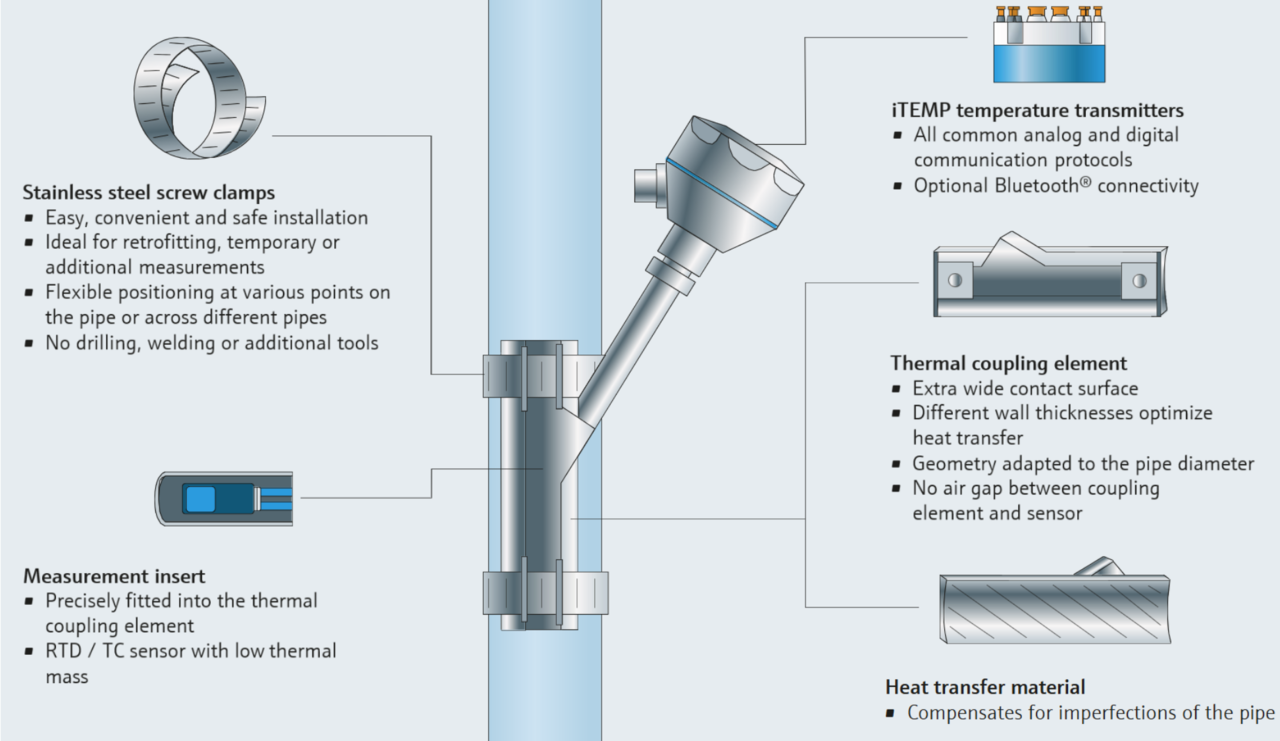

Temperature measurement is the other bedrock of reliable cooling, providing monitoring nodes around the entire process network. Traditionally, precise temperature measurement required installing thermowells directly into process pipes. While effective, invasive thermowells create flow disturbances, which force pumps to work harder and consume more energy to overcome pressure drops. Furthermore, every pipe penetration introduces a potential leak or contamination point, and because thermowells cannot be tapped into live process streams, installing them requires downtime.

However, material science advancements have yielded highly accurate non-invasive surface temperature sensors. Most clamp-on temperature instruments require electronic compensation to correct the measured value for ambient effects, which adds processing time and slows the measurement substantially. Today’s leading surface options address this challenge using insulation only. With a mechanical clamp-on design and advanced thermal coupling elements, these modern devices measure heat directly through the pipe wall without requiring electronic compensation (Figure 3).

This provides response times and accuracy comparable to invasive installations, while eliminating the need for pipe penetration, which reduces pressure loss, speeds up installation during rapid facility scaleups, and simplifies the decision to add measurement nodes when the need arises.

Secure the Digital Backbone

Modern innovation requires a delicate balance between digital capabilities and cybersecurity considerations. While wireless communication technologies like Bluetooth offer convenience for commissioning, maintenance, and monitoring in many industrial sectors, many data center cybersecurity policies require wireless-deactivated devices in IT white spaces. To support these sometimes-conflicting requirements, leading instrumentation suppliers provide compact devices configured specifically for data centers and original equipment manufacturers (OEMs), designed for process measurements in server halls without compromising on measurement or instrument diagnostic capabilities (Figure 4).

These variants are delivered from the factory with wireless connectivity deactivated at the hardware level, while offering reliable hardwired protocols for transmitting information, such as IO-Link or standard analog signals (4–20 mA). This provides data center facility operators and OEMs with secure, plug-and-play components that align with zero-trust wireless policies, while still delivering continuous flow, temperature, and conductivity data from a single compact node, tailor-made for measuring process conditions in server room secondary cooling loops.
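On the receiving side, a hardwired 4–20 mA signal is typically converted to engineering units by linear scaling. The sketch below shows one way this might look. The function name, the 0 to 100 L/min range, and the 3.8 to 20.5 mA validity window (in the style of the NAMUR NE 43 recommendation) are assumptions for illustration rather than settings of any specific device or controller.

```python
def scale_4_20_ma(current_ma, range_low, range_high):
    """Convert a 4-20 mA loop current to engineering units by linear scaling."""
    # Assumed validity window of 3.8 to 20.5 mA; currents outside it are
    # treated as a fault (e.g. broken wire) rather than as a measurement.
    if not 3.8 <= current_ma <= 20.5:
        raise ValueError(f"loop current {current_ma} mA is outside the valid range")
    fraction = (current_ma - 4.0) / 16.0  # 4 mA -> 0.0, 20 mA -> 1.0
    return range_low + fraction * (range_high - range_low)


# Hypothetical flow transmitter ranged 0 to 100 L/min.
flow_l_min = scale_4_20_ma(12.0, 0.0, 100.0)
print(f"12.0 mA -> {flow_l_min:.1f} L/min")
```

Rejecting out-of-range currents rather than clamping them keeps a wiring or device fault from being mistaken for a valid low or high reading.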

Scaling Resiliency and Efficiency

In addition to the reliability benefits gleaned by standardizing the use of industrial-grade process instrumentation, hyperscalers can also reduce power consumption by running their facility conditioning systems closer to required thermal specifications. The increased accuracy means tighter temperature and flow measurement error bands, reducing the need for the conservative over-cooling that less reliable instruments require as an equipment protection buffer. By cooling less while confidently maintaining required specifications, operators reduce the workload on primary chiller lines and pumps, decreasing overall facility energy consumption, utility costs, and carbon footprint. This is critical because the power required for cooling is one of the largest operating costs for data centers.
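The link between measurement uncertainty and over-cooling can be sketched with simple arithmetic: the coolant supply setpoint has to sit below the allowed limit by at least the worst-case measurement error. The limit and the two uncertainty values below are hypothetical, chosen only to show how a tighter error band moves the setpoint.

```python
def highest_safe_setpoint_c(max_allowed_c, measurement_uncertainty_k):
    """Highest setpoint that keeps the true temperature at or below the limit,
    assuming the reading may be off by up to the stated uncertainty."""
    if measurement_uncertainty_k < 0:
        raise ValueError("measurement_uncertainty_k must not be negative")
    return max_allowed_c - measurement_uncertainty_k


LIMIT_C = 32.0  # hypothetical maximum coolant supply temperature

# Hypothetical error bands: +/-1.0 K (wider) vs. +/-0.2 K (tighter).
wide_band = highest_safe_setpoint_c(LIMIT_C, 1.0)
tight_band = highest_safe_setpoint_c(LIMIT_C, 0.2)

print(f"Setpoint with +/-1.0 K uncertainty: {wide_band:.1f} C")
print(f"Setpoint with +/-0.2 K uncertainty: {tight_band:.1f} C")
print(f"Over-cooling avoided: {tight_band - wide_band:.1f} K")
```

How much energy a warmer setpoint actually saves depends on the chiller plant and climate, so this sketch stops at the temperature margin.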

Furthermore, modern instruments equipped with integrated process and device diagnostic capabilities empower maintenance teams to shift from reactive troubleshooting to predictive maintenance practices. Built-in continuous health assessment capabilities enable early detection of anomalies, such as pump cavitation, scaling, or blockages, long before they result in gross inefficiencies, cooling system failure, or IT equipment downtime.
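For readers who want a feel for early anomaly detection, the sketch below flags readings that deviate sharply from a rolling baseline. This is a generic statistical check that a supervisory system might run, not a description of how any instrument's built-in diagnostics work. The window size, threshold, and flow values are hypothetical.

```python
from statistics import mean, stdev


def flag_anomalies(readings, window=5, threshold=3.0):
    """Return indices of readings more than `threshold` standard deviations
    away from the mean of the preceding `window` readings."""
    flagged = []
    for i in range(window, len(readings)):
        baseline = readings[i - window:i]
        mu = mean(baseline)
        sigma = stdev(baseline)
        if sigma == 0:
            # Perfectly flat baseline: any change at all counts as a deviation.
            if readings[i] != mu:
                flagged.append(i)
        elif abs(readings[i] - mu) / sigma > threshold:
            flagged.append(i)
    return flagged


# Hypothetical flow readings in L/min with one sudden drop.
flow = [100.0, 100.2, 99.9, 100.1, 100.0, 100.1, 92.0, 100.0]
print(flag_anomalies(flow))
```

In this example only the sudden drop is flagged; the reading that follows is not, because the drop itself widens the baseline spread.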

Beyond the technology itself, rapid data center deployment requires robust supply chains and manufacturing agility. Suppliers must not only reliably produce high-quality instrumentation, but also fulfill orders promptly and deliver at scale. When seeking out these sources, hyperscalers should evaluate qualities like supplier investments in localized manufacturing, ability to consistently meet high-volume orders with short lead times, and capacity to provide engineering expertise beyond a physical product.

Advent of Industrialized IT

Today’s high-density computing at scale is blurring the lines between sensitive IT infrastructure and heavy industrial processes. As computing application demand increases, chips become more powerful and produce additional heat, and data center equipment is pushed to its limits, capable thermal management systems are more critical than ever to reduce power demands and increase uptime.

Hyperscalers that standardize on industrial-grade instrumentation are substantially enhancing system effectiveness by leveraging 0xDN flow technology, non-invasive temperature sensing, and cybersecure digital integration. These capabilities help facility operators mitigate risk, improve cooling and energy efficiency, and strengthen the information backbone powering the modern digital economy.

—Cory Marcon is the power and energy industry marketing manager for Endress+Hauser USA, and Lauton Rushford is a national marketing manager for flow products at Endress+Hauser USA.