COMMENTARY

Stories about seismic changes ready to hit utilities and transform their century-old business models fill power industry newsletters and media. I’ve even contributed some of these articles. But rarely do I see any purveyors of industry news provide a truly comprehensive roadmap to enable utilities’ leadership, regulators, and myriad stakeholders to concretely think about new models and how to build them. That all changed with the arrival of “Navigating Utility Business Model Reform,” a report by the Rocky Mountain Institute (RMI).

What’s striking about the RMI report is how the success of these grid modernization models will rely on collecting, processing, and analyzing data in very different ways. The report examines 10 reform items including revenue decoupling, platform revenues, performance-incentive mechanisms, and multiyear rate plans, among others. But let me draw your attention to its especially helpful case studies, which describe how utilities can better align their business goals with customer preferences and public policy objectives.

I found the discussion of the large number of utilities nationwide launching new grid modernization initiatives especially interesting. The report suggests that now is the ideal time to address utility regulation and business model shortcomings. With volumetric utility sales likely to fall further, especially if solar and wind power become the backbone of our electricity system, utilities must invest in modern technologies that make the grid more efficient, flexible, affordable and resilient.

Among the case studies in this report is one focused on Ameren Illinois, an electric utility serving 1.4 million customers. This case illustrates a regulator’s policy goal to improve utilities’ energy efficiency by providing them with incentives for reaching efficiency targets.

Dancing to a New Algorithm

Ameren has been participating in a state regulator-mandated “NextGrid” study to assess grid modernization initiatives in Illinois. Although not included in the RMI report, one objective of the study was to evaluate the way in which the state’s electric utilities could incorporate more Distributed Energy Resources (DER), and the effect these additional grid assets would have on rates and revenue models.

One of the key factors that would help assess the value and siting of DER is more accurate and locational load forecasting, including forecasting locational system losses and Distributed Unaccounted for Energy (DUFE). This analysis would require Ameren Illinois to collect much more granular temporal and spatial data—not only from DER generation assets, but also from millions of other grid assets that were continually changing in response to DER adoption and energy efficiency programs.

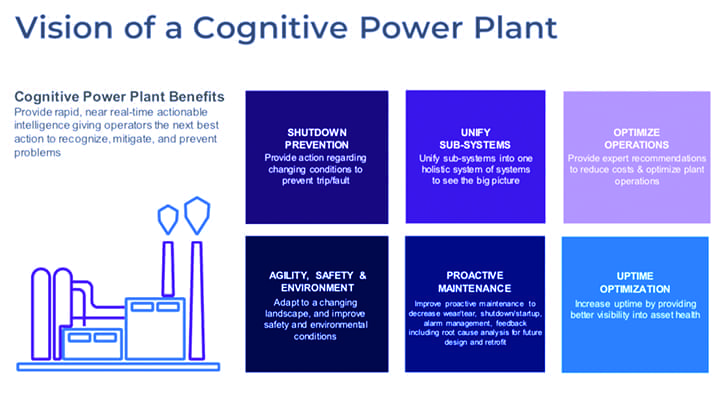

With machine learning and self-tuning algorithms now available, Ameren will be able to perform loss calculations on every single feeder circuit on the distribution system to get a true accounting of where all the kilowatt-hours are going, not just by system voltage class but by individual circuit as well. Combining real-time losses (RTL) with an asset-level micro-forecasting engine creates a gateway to a host of strategic initiatives, including locational analysis of cost of service to determine the grid value of DER assets on every circuit at any time of the day. It is also step one toward creating future DER locational pricing models, and toward a guide for encouraging DER where they add value to the grid and discouraging them where they contribute marginal or no value. Additional near-term value will be created by enabling more accurate asset loading analysis, planning studies and cost-of-service studies using circuit-specific temporal data.
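To make the idea of circuit-level loss accounting concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not Ameren's or any vendor's actual method: it assumes interval energy is available at each feeder head and at each meter, that meter readings are net values (negative when a DER exports to the grid), and that every meter maps to exactly one feeder. The function and parameter names are hypothetical.

```python
from collections import defaultdict


def feeder_losses(feeder_head_kwh, meter_kwh, meter_to_feeder):
    """Estimate unaccounted-for energy per feeder and interval.

    feeder_head_kwh: {(feeder, interval): kWh injected at the feeder head}
    meter_kwh:       {(meter, interval): net kWh at the meter
                      (assumed negative when a DER exports)}
    meter_to_feeder: {meter: feeder} (assumed one feeder per meter)

    Returns {(feeder, interval): kWh not accounted for by the meters}.
    """
    # Sum net metered energy by feeder and interval.
    delivered = defaultdict(float)
    for (meter, interval), kwh in meter_kwh.items():
        delivered[(meter_to_feeder[meter], interval)] += kwh

    # Whatever entered the feeder but never showed up at a meter is
    # treated here as loss (technical losses plus anything unmetered).
    return {
        key: injected - delivered[key]
        for key, injected in feeder_head_kwh.items()
    }


# Illustrative numbers only: one feeder, one interval, three meters,
# one of which is a solar customer exporting 5 kWh.
head = {("F1", "t0"): 100.0}
meters = {("M1", "t0"): 60.0, ("M2", "t0"): 42.0, ("M3", "t0"): -5.0}
mapping = {"M1": "F1", "M2": "F1", "M3": "F1"}
print(feeder_losses(head, meters, mapping))
```

Running the same calculation per circuit and per interval, rather than once per voltage class, is what yields the locational and temporal view described above.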

The new technology includes enterprise data platforms, like the PowerRunner Energy Platform, that join disparate IT and OT data to present real-time, actionable information to the enterprise. At the core of the platform are three key technologies: data virtualization, self-service analytics, and self-tuning machine learning algorithms. Disparate data can be combined, and business users can query and view data across multiple data sources. Through calculation and forecast engines in the software, users can operate on data to determine the locational cost and value of energy and the next-hour load requirements at every meter.

National Grid Partners’ vice president of corporate venture capital Pradeep Tagare made this important observation in a recent article: “For energy markets to function properly, these multi-dimensional supply and demand dynamics between buyers and sellers have to be processed in real time. Buyers and sellers also need ways to make reasonably accurate predictions about future market conditions in order to make fact-based operational and business decisions.”

Millions of Predictions in Seconds

Managing real-time transactional data from operational and commercial systems and joining it with forecast models created from historical data is the key to developing a platform that supports the new and very localized energy markets. As Tagare wrote: “Human decision-makers, of course, are still often involved in buying and selling strategies. But by making about 10 million AI-driven predictions about market supply and demand every minute,” AI, he believes, “gives those decision-makers the accurate, actionable insight they need into an increasingly complex and distributed energy marketplace. And someday AI may even be able to autonomously handle even more of the negotiation and energy arbitrage itself.”

Human input will support the configuration and tuning of models as outliers are identified, as new assets (DERs) are added to a circuit, and as new models are developed. Without engineering and business acumen, the data scientist alone will not be able to perform these tasks. The power of the data has to be in the hands of the subject matter experts: the distribution engineers and other decision makers at the utility.

Streaming AI technology built on graphics processing units with optimized data processing and parallel computational threads can forecast the performance (the net generation output or energy consumption) of each asset (meter) in less than nine milliseconds. Even more remarkable is that this predictive performance is not just a matter of rerunning a static model over and over. The system is re-evaluating the latest sensor data against hundreds of millions of historical and real-time points and retraining, on every run, in minutes. Antiquated prediction methods and inefficient training sessions could never keep up with these operational timelines and dynamics. Practically, this means that if the drivers (behavior, price, weather) of a distribution asset change minute to minute, so do the prediction models.
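The difference between rerunning a static model and refitting on every run can be sketched in a few lines of Python. This is a deliberately simple stand-in, not the GPU-based streaming system described above: it assumes a single numeric driver per meter (temperature, say) and swaps in an ordinary least-squares line fitted over a sliding window of recent readings. The class name, the window size, and the choice of driver are all hypothetical.

```python
from collections import deque


class RollingMeterForecaster:
    """Refit a one-driver linear model for one meter on every forecast."""

    def __init__(self, window=96):
        # Keep only the most recent `window` (driver, load_kw) pairs,
        # so older behavior ages out of the model automatically.
        self.history = deque(maxlen=window)

    def observe(self, driver, load_kw):
        self.history.append((driver, load_kw))

    def forecast(self, next_driver):
        n = len(self.history)
        if n == 0:
            raise ValueError("no observations yet")
        mean_x = sum(x for x, _ in self.history) / n
        mean_y = sum(y for _, y in self.history) / n
        sxx = sum((x - mean_x) ** 2 for x, _ in self.history)
        if sxx == 0:
            # The driver never varied in the window; fall back to the mean.
            return mean_y
        sxy = sum((x - mean_x) * (y - mean_y) for x, y in self.history)
        slope = sxy / sxx
        # The fit is recomputed from the current window on every call.
        return mean_y + slope * (next_driver - mean_x)


# Illustrative readings only: load rises with temperature.
meter = RollingMeterForecaster(window=96)
for temp_c, load_kw in [(20, 1.0), (25, 1.5), (30, 2.0)]:
    meter.observe(temp_c, load_kw)
print(meter.forecast(35))
```

Because the fit is rebuilt from the latest window each time, a change in a customer's behavior, or a new DER behind the meter, shifts the forecast as soon as the new readings arrive. One such object per meter is the naive version of the per-asset forecasting described here; doing it for millions of meters within operational timelines is where the parallel hardware comes in.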

AI is a game-changing technology that transcends the energy industry. All kinds of businesses will be transformed as new tools of increasing sophistication are brought to market. Those of us in the energy business don’t have the luxury of sitting back and overlooking the data revolution.

As data becomes a critical driver in our industry, we need to adapt and grow accordingly. We need to see data as the most significant catalyst shaping policy and regulation. The RMI report has pointed the way, identifying what can be done with data and machine learning to support regulators and policy makers in grid modernization efforts. Machine learning is no longer in the realm of science fiction. ■