Developing a resilient organization pays benefits daily, but during outages and other maintenance tasks, it can account for the difference between success and failure.

In the January issue, I introduced the concepts of highly reliable organizing (HRO) and resilience engineering with a promise to address the remaining principles. As a quick review of the first article, you’ll recall that many quality and safety systems are designed around simple work, such as on assembly lines. However, processes aimed solely at minimizing variability are ineffective for the complex, highly variable work of operating and maintaining power plants.

HRO, as described in the book Managing the Unexpected, focuses on reducing failures through:

■ Preoccupation with failure

■ Reluctance to simplify

■ Sensitivity to operations

■ Commitment to resilience

■ Deference to expertise

Erik Hollnagel, one of the fathers of resilience engineering, defines resilience as “the intrinsic ability of a system to adjust its functioning prior to, during, or following events (changes, disturbances, and opportunities), and sustain required operations under both expected and unexpected conditions.”

A resilient system must be able to respond, monitor, anticipate, and learn. It’s not resilient if it lacks any of these abilities—even if it excels at some of them.

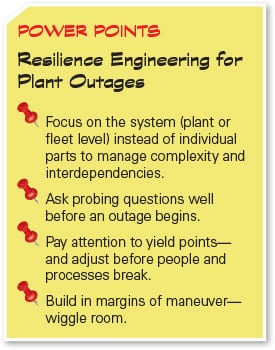

The first article covered the first two principles below; this article addresses the remaining three. To “engineer” resilient systems, we design according to these principles:

■ Principle 1: Variability and uncertainty are inherent in complex work.

■ Principle 2: Expert operators are sources of reliability.

■ Principle 3: A system view is necessary to understand and manage complex work.

■ Principle 4: It is necessary to understand “normal work.”

■ Principle 5: Focus on what we want—to create safety.

The opposite of resilient is “brittle.” Think about how a brittle material fails: Load increases and increases until, “snap,” it breaks. You have little to no warning. Hidden flaws contribute to sudden and unexpected failures.

Resilience is like a ductile material: Load increases until the metal begins to deform, at which point you remove the load and it returns to normal shape. You’ve just been warned that you are reaching the yield point; beyond the yield point, permanent deformation begins to occur, but you still have time to recover before failure.

Power plant operations, including outages, are socio-technical systems composed of people plus technology and equipment. We tend to focus on the equipment, jumping into disassembly, repair and replace, and reassembly. Yet, the other component, people, makes or breaks system resilience.

We all have limited capacity, and when we get stressed, we begin to miss and forget things. During an outage, if several significant events occur, things can begin to fail, like a snowball rolling downhill, growing and gathering speed. The more stressed people are, the more things go wrong. We’ve all seen outages like this.

To build resilience, we notice and monitor yield points to give us a chance to adapt and reconfigure so we can handle additional load without failing. Different conditions can bring us close to a yield point, such as a certain level of deployment (for example, 85% deployed is sustainable, but more than that may not be); three or more significant issues on a project; piling up stressors such as foul weather, a holiday, and change-out of people; or novel or complex work.

There are signs that indicate we are approaching a yield point. One is that people begin acting differently. We can look for signs that the mood or situation has changed: progress stalls, schedule slips, people don’t return calls or emails, or people are irritable.

What signs have you noticed when approaching a yield point? Who is in a good position to monitor for change? Sometimes when you’re sick, it’s hard to decide when to call the doctor. Similarly, people off site may be in a better position to notice changes at a big picture level while people on site are immersed in regaining control.

Principle 3: A System View Is Necessary

A system view of work simply means stepping back and looking at the big picture from a level that makes sense—a power plant, a fleet of power plants, or a set of outages—considering that components within a complex system have interdependencies. We have a natural tendency to attempt to simplify systems, focusing on individual components (X is broken, fix it!). This can cause us to miss the more nuanced influences related to system interdependencies. This is why highly reliable organizations are reluctant to simplify. Oversimplification can lead to a static instead of dynamic view. In our highly variable world, it is important to see and understand changes as they happen. A system view looks not only at trends but also at speed and rate of change (velocity and acceleration).

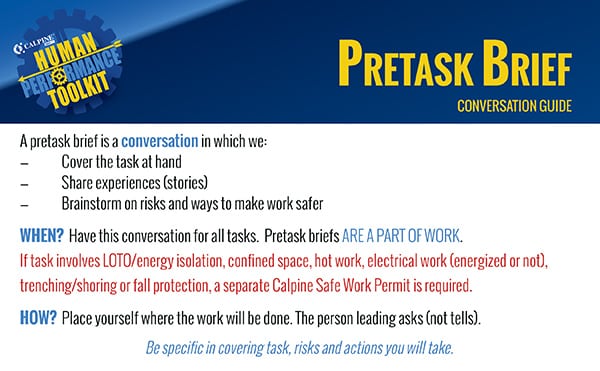

Assessing System Brittleness. David Woods, a founder of resilience engineering, said, “Ability to deal with crisis is largely dependent on what was prepared before the surprise arrives. What is not prepared becomes a complex problem and every weakness comes rushing to the forefront.” In complex systems, many interdependencies are hidden and are only revealed in a crisis, accelerating degradation of the system. A big part of being prepared is uncovering and being prepared for these interdependencies.

Drawing System Boundaries at the Power Plant Level. Taking the plant as a system boundary, we look for relationships between units. We’ve noticed a pattern with some plant trips: Just as we get one component under control, issues emerge with another component. In one case, on a combined cycle unit, the steam turbine tripped after losing feed to a bus. Just as we got the steam turbine under control, we were surprised by one of the gas turbines having a slight overspeed excursion and tripping. It turned out we lacked a tiebreaker scheme to transfer power supply between the cooling fans of each unit, so we lost power to vent fans, and then the compartment overheated, causing a gas control valve processor to fail.

If we uncover and address interdependencies before emergencies, we loosen coupling to give ourselves more time to respond. At Calpine, we are just beginning to think about how to apply this—how to look for linkages between units or unexpected connections between components in the physical, electrical, and/or control systems. At one plant, we will use simulations to improve emergency response and help find interdependencies.

Drawing System Boundaries at the Fleet Level. Outage work during spring and fall peaks requires continuous adaptation. Emerging scope is common, and resources are always constrained. This results in a system that can quickly become brittle and produce more breakdowns as resources are tapped and fatigue grows. We have a tendency to focus on outages with the most urgent issues, but this misses interdependencies and indirect costs associated with cascading changes.

For example, when people are moved to different outages, as the result of changes in plans or in the middle of an outage, risk is introduced and there can be losses due to ripple effects. These losses and risks are mostly hidden but can be uncovered and addressed with a holistic systems view (see sidebar).

Designing for Resilience: Margins of Maneuver. Being resilient means having the ability to stretch near boundaries, such as when surprise occurs or we’re overloaded to the point that we are close to or beyond our limits. This is sometimes called “graceful extensibility.”

Margins of maneuver are the cushion of potential actions and additional resources that allow us to continue to operate and enable us to maintain or regain control. You can think of margins of maneuver as wiggle room. Margin can be in the form of extra resources such as people, tools, time, or parts. Margin can be in the form of having multiple paths of action—a way out. Here are two strategies for building margins of maneuver:

■ Share resources. Think of rotational programs that support development of people’s ability to hold multiple roles. Develop joint strategies between groups to define which skill sets to recruit and develop, such as staffing support groups with former frontline personnel who can temporarily be deployed. Add a person with general skills to difficult projects to unload project leadership. Share people between plants to support outages.

■ Shed load. During peak load, people with critical skills do only work that requires those skills. They shed more general work to others. Postpone what can be done later (such as paperwork).

Margins of maneuver can show up as excess capacity when not in use. Yet, when we consider how quickly money can be made and lost with a plant down, the real value of this capacity is clear. Consider this shift from the perspective of using different language: “margins of maneuver” as contrasted with “fat in schedule.” This different framing opens opportunity for surfacing and actively managing the extra time in schedules that represents uncertainties, as compared to hiding uncertainty by artificially increasing the duration of scheduled activities.

Principle 4: Understand Normal Work

Principle 4, “It is necessary to understand normal work,” and Principle 5, “Focus on what we want to create—safety,” come from Hollnagel, who notes the disconnect of assessing how good we are at safety by measuring the absence of safety. Both good and bad outcomes result from the variability present in normal work, yet normal work goes well 99% of the time, mostly due to improvisations and adaptations of expert operators. Does “improvise, adapt, and overcome” ring a bell?

Here’s a lesson from aviation to clarify the principle of understanding normal work. When an aircraft is on final approach, margins are narrow and the aircraft is in a critical stage of flight, with few options for recovery. A project led by Maria Lundahl of LFV Air Navigations Services of Sweden, aimed at reducing runway incursions (the collision of aircraft that are landing or taking off with ground equipment, other aircraft, or people), studied “normal work” as follows.

First, experts (pilots observing pilots, air traffic controllers observing air traffic controllers [ATCs], and cart drivers observing other cart drivers) observed normal work, looking for why there were no runway incursions most of the time. Then, in workshops with pilots, ATCs, and cart drivers, they:

■ Asked participants to think about normal work (what they do well) and share examples of when they were in a situation where a runway incursion could have occurred but didn’t. “Why didn’t it?”

■ Brought in real cases of runway incursions and told the story, right up to just before the incident, and then asked, “What do you do to avoid a runway incursion in this case?”

■ Had people shadow or talk with those in other roles to shift perspectives and improve cooperation: pilots and drivers go into the ATC tower. ATCs go on carts around the airport, even during snow removal. All had good ideas for other roles, and it developed a deeper understanding that improved coordination and cooperation.

■ Vetted good practices that came up throughout the project with expert drivers, pilots, and ATCs.

Although this was a large-scale study, the same concepts apply at a plant level. Think about a situation where we almost trip the plant but recover. What are other low-margin situations where we “create safety” through normal work?

Principle 5: Focus on What We Want to Create—Safety

Applying each of the first four principles is how we enable success in applying the fifth one, which focuses on the ultimate goal—the creation of a safe work environment.

Using a Real Time Risk Assessment to Create Safety. Here’s a practice that helps Calpine manage the emergent situations that are common with complex work. It’s called “Real Time Risk Assessment.” With almost 90 power plants, we have deep knowledge and much experience to bring to bear on problems. You likely have a similar situation, but how do you locate knowledge and experience, quickly, at the point and time of need? The thing about knowledge is that it’s always evolving. We could database it, but as soon as we did, the database would be out of date.

In a Real Time Risk Assessment, a novel and/or difficult situation comes up and, within an hour, a geographically separated group of people, diverse in terms of knowledge and function, convene via conference call to solve the problem.

It works like this. Plant leadership contacts the knowledge broker/facilitator. In our case, it’s the safety team. The safety team bridges organizational boundaries, and members have been trained to facilitate this process. The knowledge broker sends a request for help to “matchmakers” (people who know what others know) who have wide networks and deep or broad work with plant leadership to determine who to invite, filling these roles: risk decision owner, challenger, subject matter experts, and peers with similar experiences.

There are typically 10 to 15 people on the call. The knowledge broker leads the call, translating the conversation into the language of risk, actively probing and bounding risks and uncertainty. On the call, we describe and diagnose the risk situation via structured brainstorming designed around questions that surface risks. Then we agree on and produce a plan of action that includes any decisions to be made, monitoring, and hold points.

An After Action Review is held once the work is complete. Lessons are shared with risk assessment participants, deepening the circle of knowledge.

To implement this on a smaller scale, consider engaging peers from user groups, trusted external partners, similar noncompeting generators, vendors, and OEMs to fill the roles.

A Real-World Example. We held a Real Time Risk Assessment prior to temporarily repairing a hot reheat bypass line weld crack. It came up, during the risk assessment, that the repair vendor hadn’t realized how much the plant was cycling. The vendor went back and reinforced the strong back. “Aha moments” are common during these risk assessments. Additionally, a plant manager and lead mechanic from another plant, where a similar repair had gone awry, shared their experiences and tips.

The repair went well but ran a little long, and we ran into issues with Amazon having reserved all cargo space on planes just prior to Christmas. These lessons, and others, were rolled back out to risk assessment participants, increasing the odds that the next time we do this type of repair or emergent work near the holidays, we’ll be even better prepared.

Beginning the Journey

Resilience engineering is a shift in perspective—shifting focus to the future and to systems—and focuses on how people really work, rather than on the idealized version of work. The practices in this article support making this shift. There’s no need for grand announcements. You can use the basic principles described in this article to guide decisions and design. We develop resilience through small changes, small experiments, and new questions with consistent, continuous focus on learning. To paraphrase Urban Meyer in Above the Line, the day we stop learning, we are falling behind. ■

—Beth Lay (Beth.Lay@Calpine.com) is director human performance at Calpine Corp.