Sample and analysis panels are on the front lines of the constant battle to detect power plant water chemistry problems. However, at many plants, operators have low confidence in the reliability of on-line analyzers, so they “default” to wet tests alone to monitor and control steam cycle chemistry. That’s risky. Relying exclusively on wet tests significantly reduces the number and type of water chemistry problems that can be detected and solved. That, in turn, puts plant equipment in danger. Just because you can’t see damage to steam generator tubes doesn’t mean there isn’t a problem.

The best intentions

Many new plants start off on the right foot by establishing maintenance schedules for sample panels and training operators in their use and maintenance procedures. But often, these schedules are abandoned early in commercial operation because keeping air-quality analyzers and instrumentation and control (I&C) systems on-line is given a higher priority, or perhaps because operators’ experience with early-model analyzers was poor. Facing problems elsewhere in the plant, I&C techs sometimes put off maintaining sample panels that they are unfamiliar with and whose problems they haven’t been trained to fix. (See this month’s Focus on O&M story, “Solving common analyzer problems.”) Regardless of why these instruments don’t get the respect they deserve, it may be time for a short refresher course in why on-line analyzers are critical to reliable plant operations and why good maintenance practices might just restore your trust in them.

Ignoring your analyzers usually reduces the efficiency of steam cycles and limits the effectiveness of the panels themselves. The negative effects are most pronounced at cycling plants, where it’s hard to keep on-line analyzers operating accurately and reliably. Starts and stops of sample flow allow the probes to dry out and rewet, increasing their wear and shortening their useful life. In addition, iron and other contaminants liberated during cycling can clog or foul sample lines, pressure-reducing valves, rotameters, and probes. Unfortunately, most sample panel maintenance tasks can be performed only when the plant is operating and producing sample flows. And it’s hard to develop trust in an instrument that is not properly maintained.

For these reasons, water-quality analyzers are often abandoned or ignored while sample panels are relegated to obtaining only grab samples. The readings they produce may still be entered into the plant’s distributed control system (DCS), but if alarms aren’t active, the readings are ignored.

One way to illustrate the importance of sample panels to water chemistry analysis is to review two case studies where perceived monitoring problems resulted in a forced outage or steam cycle damage. In both cases, the downtime and damage could have been eliminated or minimized if the sample panel had been functional or the readings trusted.

Case study # 1: “Those new analyzers won’t work.”

The following series of incidents occurred over several months at a new 3 x 1 combined-cycle cogeneration plant in a southern state. After its initial start-up, the plant routinely performed wet chemistry tests but did not maintain its sample panels. Several water chemistry upsets occurred during the first several months of operation, including both low- and high-pH events. Data from the plant’s new on-line analyzers (which operators didn’t trust) clearly showed every one of these events, low-pH and high-pH alike, but many of them were not detected by the wet tests. None of the sample panel analyzers was alarmed to the plant’s DCS.

The root cause of the events, which were relatively short in duration, was diagnosed as instrument condensate contamination. Operators got into the lazy habit of feeding caustic when drum pH was low and securing chemical feed and blowing down when the pH was high. They had several opportunities to use the new on-line analyzers to detect problems, but because the instruments were poorly maintained and untrusted, their readings were disregarded. The lack of functional alarms contributed to the lack of situational awareness—only two of the six on-line pH analyzers were in service.

The six pH analyzers monitored the high-pressure (HP) and intermediate-pressure (IP) drums of the plant’s three heat-recovery steam generators (HRSGs). The two operating analyzers clearly showed severe pH depressions or elevations as host condensate quality varied. Because the plant was relying only on wet test data, operators neither saw these excursions nor appreciated their significance.

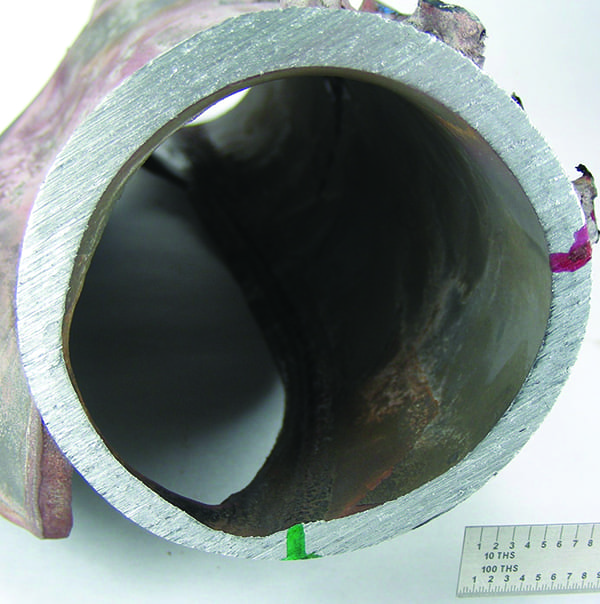

As you’d expect, the tubes of this plant’s HRSGs began to overheat and fail after only six months of operation. But the tube failures were only the first signs of a serious water chemistry problem. Another was the formation of iron deposits in the lower bends of the tubes (Figure 1), detected by an initial inspection. This particular plant is especially vulnerable to iron deposition because it uses high levels of duct firing on its HRSGs, which increases the heat flux across their tubes. Subsequent inspections also showed significant material loss throughout the tubes (Figure 2), not just at bends.

1. Ironed out. Iron deposition in HRSG tubes reduces the tubes’ life expectancy as well as unit generating capacity. Courtesy: Nalco

2. Going deep. Abnormal loss of material from an HRSG tube, detected by a boroscope inspection. Courtesy: Nalco

Because four on-line analyzers were out of service and the other two were not trusted by the operators, the plant addressed the problem’s symptoms, rather than its root cause. It did so by chemically cleaning the HRSG tube sections that had remained unaffected and replacing those with holes or heavy deposits.

Those repairs cost millions of dollars, and during the outages needed to make repairs, the plant lost millions more in production revenue. Equipment life was considerably shortened because the analyzers didn’t perform due to slack O&M training and procedures plus poor alarm management—not because “those new analyzers didn’t work.”

Case study # 2: “Don’t believe those readings.”

The following series of incidents occurred at a two-year-old 3 x 1 combined-cycle merchant plant in a western state. One day, the plant’s steam turbine tripped as HRSG #2 was being started up. The plant recovered from the trip and HRSG #2 resumed starting up. Wet chemistry tests (performed in the plant’s lab on schedule, shortly after recovery from the trip) indicated a low pH (about 7.5) in the HP drums of HRSGs #1 and #3.

Like many plants, this one assigns water chemistry duties to junior operators. The junior operator in the chemistry lab, who had never seen drum pH this low, didn’t believe his eyes. He did what any good junior operator would do—he called a senior operator to ask about the low readings.

Because HRSG #2 was in start-up mode, it took the senior operator over an hour to get to the lab. He and the junior operator checked the drum pH readings again, and they were even lower than before, at about 5.5.

Seeing isn’t believing. Both operators were certain that the bench-top pH analyzer producing the readings had failed because they assumed that the plant’s on-line pH analyzers weren’t working. But after they calibrated the bench-top analyzer, it generated an even lower pH reading: 4.5. Still sure that the lab analyzer was the culprit, the operators next replaced its probe and calibrated it again. Still no luck: The instrument continued to indicate a very low drum pH. Frustrated, the operators looked around the lab for another pH analyzer to use but couldn’t find one.

By this point, the bench analyzer indicated that the drum pH readings of HRSGs #1 and #3 had stabilized at around 4.0, but the operators remained certain that these were “bad” readings. An additional factor added to their confusion: The same analyzer appeared to produce “good” readings from other grab samples. The operators next tested the pH of the plant’s feedwater, condensate, and demin water, and those results were well within the normal range of values. By then, HRSG #2 had completed start-up and was operating at low load.

Finally, both operators contacted the control room supervisor to ask if another bench-top pH analyzer was available anywhere. Two hours after he relayed the request to the plant’s chemist and operations supervisor and got “no” for an answer, the control room supervisor shut down the plant.

All told, the two operators wasted about six hours believing that they needed another bench-top pH analyzer. In fact, the one they were using had been working properly the entire time. It took another two hours to begin shutting down the plant, a process that required the plant’s chemist, operations supervisor, and manager to concur that the low pH event was, in fact, real. A subsequent examination of on-line analyzer data showed that the pH in the drums of HRSGs #1 and #3 had indeed fallen quickly to around 4.0 and remained there for the duration of the incident.

Wasting away. Though this plant’s forced outage could not have been prevented, the resulting HRSG corrosion could have been. One tube section from the HP drum of HRSG #1 was removed six months after the incident and tested. A deposit weight density (DWD) analysis indicated 16 g/ft² of buildup after only one year of commercial service. Such a level is more consistent with seven to 10 years of operation. As in the first case study, significant iron deposition occurred as a result of this event, so the HRSG will require chemical cleaning sooner rather than later. The full extent of the damage is still unknown. Hydrogen damage also certainly took place, but the plant has not yet seen HRSG tube failures as a consequence.

Low-pH events can cause several problems, including corrosion fatigue, hydrogen damage, and deposition of corrosion products. We can’t say that a tube failure initiated by low pH will occur within a month, a year, or even within 10 years. We can only conclude that one or more tubes will fail sooner than they would have had the event not occurred. The adverse impact of the event is roughly proportional to its duration. Based on data recorded by the one working on-line pH analyzer at this plant, the plant operated with low pH in the drums of two of its three HRSGs for six to eight hours.

The low-pH event was initiated when material in the HRSGs’ condensers came loose and struck tubes, damaging them and causing them to leak. Among the factors that made the leak(s) more difficult to detect were the lack of reliable on-line pH indication (a sample panel maintenance and design issue), the absence of specific or cation conductivity measurements upstream of chemical feeds (a sample panel design issue), and reliance on wet tests alone to identify water chemistry problems.

Wrong number. Like the plant described in the first case study, this one had on-line pH analyzers installed on all three of its HRSG drums. But in this case, only one analyzer was working, and operators’ lack of confidence in the instruments led them to believe that wet chemistry analysis was the only way to detect pH excursions. Plant management knew about the analyzers’ reliability problem but had failed to address it after one year of commercial operation.

Although the plant also was equipped with an on-line specific conductivity analyzer, its poor placement made it useless for accurate leak detection: The flow sampled by the analyzer is downstream of the point where boiler feedwater chemicals (a passivator and an amine) are added. Both chemicals increase condensate conductivity in direct proportion to the amount added. Accordingly, changes in condensate flow rate will cause a change in condensate conductivity even if the chemical feed rate remains constant. Because of this inherent variability, small condenser leaks may be masked by the noise of variable condensate conductivity.
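The masking effect can be illustrated with a rough numerical sketch. It assumes, as the paragraph above states, that the chemicals' contribution to conductivity is proportional to their concentration (feed rate divided by condensate flow). The constant `K`, the flow values, and the 0.2 µS/cm leak signal are hypothetical numbers chosen only for illustration, not plant data.

```python
def chemical_conductivity(feed_rate: float, condensate_flow: float, k: float) -> float:
    """Conductivity added by treatment chemicals, in uS/cm.

    Assumes conductivity is proportional to chemical concentration,
    i.e. feed rate divided by condensate flow. k is a hypothetical
    lumped constant, not a measured value.
    """
    if condensate_flow <= 0:
        raise ValueError("condensate flow must be positive")
    return k * feed_rate / condensate_flow


def leak_is_masked(leak_signal: float, readings: list[float]) -> bool:
    """True if the leak's contribution is no larger than the normal swing."""
    swing = max(readings) - min(readings)
    return leak_signal <= swing


# Hypothetical case: constant chemical feed, condensate flow varying with load.
K = 400.0
FEED = 1.0
downstream = [chemical_conductivity(FEED, flow, K) for flow in (100.0, 80.0)]
# Upstream of the feed point the chemical contribution is zero.
upstream = [0.0, 0.0]

print(downstream)                       # [4.0, 5.0]
print(leak_is_masked(0.2, downstream))  # True
print(leak_is_masked(0.2, upstream))    # False
```

In this sketch a 20% drop in condensate flow moves the downstream reading by 1.0 µS/cm, five times the assumed leak signal, while the same leak would stand out clearly at a sample point ahead of the chemical feed.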

As this example proves, even small leaks can quickly cause a low pH upset in HP HRSG drums. Plant personnel were confused because condensate conductivity appeared to be normal.

A cation conductivity analyzer would have been immune to the interference caused by the chemical feed and would have accelerated detection of condensate contamination. It should go without saying that the specific conductivity analyzer should have been installed where it would receive samples of condensate prior to any chemical addition. Had that been the case, the variability in condensate conductivity caused by the chemical feed would not have been a problem. With this variability removed, it becomes easier to detect small increases in conductivity that are indicative of an incipient condenser tube leak.

Once again, poor plant O&M practices meant key on-line analyzers were unavailable during start-up, and readings from the one operating analyzer were distrusted. Also, senior techs seemed to be instilling poor O&M habits in younger techs, which made the problem an institutional one. Apparently, the plant manager didn’t believe in investing in the immediate repair of these instruments, thereby reinforcing the mistrust. What this plant has is a leadership problem, not an analyzer problem.

Squared-away sample panels

In these two cases, which involved either rare upsets in condensate quality or condenser tube leaks, a technician’s mistrust of an instrument (due to prior reliability problems or poor O&M practices and training) caused additional damage to plant equipment. Such damage is especially likely during the chemistry upsets that accompany cycling operation. These upsets result from overfeeding or underfeeding treatment chemicals just prior to unit shutdown or immediately after start-up, conditions that on-line monitoring would detect.

It’s always more difficult to control the chemistry of a cycled plant, but ignoring or not maintaining your on-line analyzers just exacerbates the problem. Chemical feed systems may fail during the shutdown period. Pumps may become air-bound or otherwise cease to function. If on-line analyzers are missing, not working because repairs aren’t a priority, or not trusted, the chemistry upsets caused by these failures may not be detected until the first grab samples of the cycle are taken. For this reason, many plants operate with no chemical feed (or too much chemical feed) for the first several hours following start-up.

Time is money

Many plants perform wet tests that do little to protect equipment. If a plant’s sample panel is reliable, wet tests need to be performed only to verify the continued accuracy of the on-line analyzers. In the absence of reliable analyzers, wet tests become the first line of defense.

Because wet tests should be performed every four to six hours, operators who depend on them spend a lot of their time monitoring water chemistry, during which other problems go undetected (causing more upsets). Lowering chemistry’s priority on the task list is no solution, because doing so produces the same result.

At most plants, sample panel maintenance is very time-intensive and entails weekly or biweekly calibration of on-line analyzers by the maintenance department. However, if more than one instrument shows some drift after it is returned to service, it’s not uncommon for operators to believe that all of the analyzers need to be recalibrated or have their probes replaced. They then write work orders that maintenance fills, which starts the cycle again. In the end, maintenance spends as much time “fixing” working analyzers as operators do on chemistry.

Improved maintenance, operating, and design practices will solve many problems that are unjustly blamed on analyzers. Here are some suggestions that will keep your sample panel running in tip-top condition.

Delivering accuracy and reliability

Operators, because they are the primary users of the plant’s sample panel (Figure 3), are best qualified to determine if it needs maintenance. Taking the following steps ensures that on-line analyzers read reliably and are repaired when they don’t.

3. Water works. A typical sample panel with separate dry and wet sections. Courtesy: Nalco

Ensure analyzer accuracy and standardization. As with any sampling system, some assumptions must be made regarding the conditions that must be met to ensure accuracy and reliability. For pH instruments, the sample conditioning equipment (especially the temperature-controlling unit) must maintain a constant sample temperature that meets the manufacturer’s specs. In addition, wet tests used to verify on-line analyzer accuracy must be performed using temperature-compensated probes. Because sample temperature has a large impact on pH readings, any deviation in temperature will produce a deviation in pH that is not due to calibration or instrument error.

The plant’s chemistry trending software should be modified to add a set of calculations called “analyzer deviations.” These algorithms operate on the differences between the results of wet test samples and those of the on-line analyzers. Typical pH probe/analyzer combinations are accurate to about 0.1 pH unit. That being the case, some deviation between analyzers (on-line or bench-top) should be expected. An analyzer should be considered accurate as long as the deviation is within expected limits.

The analyzer deviation calculations also help determine the need for analyzer calibration or replacement. Each deviation calculation represents a ratio of a wet test reading divided by an on-line analyzer reading. For example, the deviation would be 1.00 if the wet test and sample panel readings were identical. For pH analyzers, the control limits are 0.95 to 1.05 (5% deviation). No standardization is required if the wet test result and on-line analyzer pH readings are within 0.2 pH unit of each other, or if the calculated deviation is between 0.95 and 1.05. For conductivity, the limits are 0.90 to 1.10 (10% deviation).
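The deviation check described above could be coded along the following lines. This is a minimal sketch, not the logic of any particular trending package: the function and constant names are made up for illustration, while the limits (0.95 to 1.05 for pH, 0.90 to 1.10 for conductivity, and the 0.2 pH unit tolerance) come from the text.

```python
# Control limits for the ratio (wet test reading / on-line reading), from the text.
RATIO_LIMITS = {"pH": (0.95, 1.05), "conductivity": (0.90, 1.10)}
PH_ABS_TOLERANCE = 0.2  # pH units, from the text


def deviation_ratio(wet_test: float, online: float) -> float:
    """Analyzer deviation: wet test reading divided by on-line reading."""
    if online == 0:
        raise ValueError("on-line reading is zero; ratio is undefined")
    return wet_test / online


def needs_standardization(kind: str, wet_test: float, online: float) -> bool:
    """Return True if the analyzer should be standardized."""
    low, high = RATIO_LIMITS[kind]
    within_ratio = low <= deviation_ratio(wet_test, online) <= high
    if kind == "pH":
        # For pH, either condition alone is enough to skip standardization.
        within_abs = abs(wet_test - online) <= PH_ABS_TOLERANCE
        return not (within_ratio or within_abs)
    return not within_ratio


print(needs_standardization("pH", 9.5, 9.4))             # False
print(needs_standardization("pH", 9.5, 8.5))             # True
print(needs_standardization("conductivity", 12.0, 10.0)) # True
```

Note that for typical drum pH values the 5% ratio band is wider than 0.2 pH unit, so under the rule as stated the ratio limit is usually the controlling one.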

Operators should take responsibility for this important calibration check, which should be performed at least weekly. Operators should be trained to standardize any analyzer whose calculated deviation exceeds the limit specified for it.

Standardization is relatively quick and easy. Operators don’t actually calibrate the analyzer—they offset its current reading by the amount of deviation determined by a wet test/on-line analyzer comparison. The procedure can be hard-coded into most chemistry monitoring software. Most chemistry trending programs can be configured to display an alarm with a link to the standardization procedure if a deviation is greater than the limit.
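As a rough sketch of what standardization does, the snippet below stores an offset and applies it to later readings. It assumes the offset is simply the wet test result minus the raw on-line reading; the class name, tag, and readings are hypothetical, and real analyzers or monitoring software may implement the adjustment differently.

```python
class StandardizedAnalyzer:
    """Holds an additive offset for one on-line analyzer (illustrative only)."""

    def __init__(self, tag: str) -> None:
        self.tag = tag
        self.offset = 0.0  # no correction until the first standardization

    def standardize(self, wet_test: float, raw_online: float) -> None:
        """Set the offset so the corrected reading matches the wet test."""
        self.offset = wet_test - raw_online

    def corrected(self, raw_online: float) -> float:
        """Apply the stored offset to a raw on-line reading."""
        return raw_online + self.offset


analyzer = StandardizedAnalyzer("HP-drum-pH")  # hypothetical tag
analyzer.standardize(wet_test=9.5, raw_online=9.25)
print(analyzer.offset)          # 0.25
print(analyzer.corrected(9.0))  # 9.25
```

The underlying calibration is untouched; only the displayed reading shifts, which is why the procedure is quick enough to hand to operators.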

Flag out-of-service analyzers and equipment. Frequent high deviations or standardization failures may mean that an on-line analyzer needs to be replaced. If either is the case, operators should describe the problem on the work order so maintenance staff will examine the analyzer in detail.

If an operator generates a work order for an on-line analyzer, he or she should indicate having done so on a white board in the water chemistry lab (or use some other method) to flag that it is out of service. Operators typically mark the analyzer’s reading on the shift log sheet as “OOC” (out of commission) or “OOS” (out of service) so operations managers can see at a glance what’s not working. It’s absolutely critical that operators be trained to believe an analyzer’s readings unless it is flagged as OOC or OOS. There should be no second-guessing.

If a reading from a working analyzer is out of range, operators should take immediate action: a single retest, but no more. Two independent readings (from an on-line analyzer and a wet test) should be used to confirm that the parameter is out of range and that corrective actions should be taken.

For an out-of-service analyzer, operators must increase the frequency of wet tests for any reading that it supplies. For example, if the pH analyzer for an HRSG’s HP drum is out of service, operators should increase the frequency of wet testing that drum’s pH to once every four hours. Similarly, if a silica analyzer is out of service, operators must wet-test all of the systems sampled by it once every four hours. This approach accomplishes two goals. First, it provides increased protection if an analyzer is out of service. Second, it creates some urgency on the part of the operators to get the analyzer back up and running (since their workload increases when the analyzer is out of service).
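The rule above lends itself to a simple scheduling check. The sketch below assumes a four-hour wet test interval for any sample point served by an out-of-service analyzer (from the text) and a once-per-day interval otherwise (an assumption, based on the frequency one plant achieved with reliable analyzers). The sample point names, analyzer tags, and data layout are hypothetical.

```python
from datetime import datetime, timedelta

OOS_INTERVAL = timedelta(hours=4)      # from the text
NORMAL_INTERVAL = timedelta(hours=24)  # assumption: once per day when analyzers are reliable


def due_wet_tests(sample_points: dict[str, str],
                  oos_analyzers: set[str],
                  last_tested: dict[str, datetime],
                  now: datetime) -> list[str]:
    """Return the sample points whose wet test is due.

    sample_points maps each sample point to the analyzer tag that serves it.
    """
    due = []
    for point, analyzer in sample_points.items():
        interval = OOS_INTERVAL if analyzer in oos_analyzers else NORMAL_INTERVAL
        if now - last_tested[point] >= interval:
            due.append(point)
    return sorted(due)


points = {
    "HRSG1 HP drum silica": "SiO2-1",
    "HRSG1 IP drum silica": "SiO2-1",
    "HRSG2 HP drum pH": "pH-2",
}
six_am = datetime(2024, 1, 1, 6, 0)
last = {name: six_am for name in points}
print(due_wet_tests(points, {"SiO2-1"}, last, datetime(2024, 1, 1, 11, 0)))
# ['HRSG1 HP drum silica', 'HRSG1 IP drum silica']
```

Because one silica analyzer often serves several sample points, flagging it out of service makes every point it samples come due on the shorter interval.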

At most plants, shifting primary responsibility for sample panel maintenance to the operator decreases overall maintenance costs. Under such a regime, analyzers are calibrated on an as-needed basis rather than every week, typically saving about four I&C man-hours per week. The frequency with which pH and ORP (oxidation-reduction potential) probes are replaced decreases from about once every six months to about once a year. Another benefit of this approach is that conductivity probes no longer need to be replaced as a step in the preventive maintenance program. Replacing the probes only if they fail will generate net savings averaging about $18,000 per year per site. Sites that do not maintain their sample panel will obviously see their overall maintenance costs rise after implementing this philosophy. But usually the increase is more than offset by the higher unit availability made possible by a reliable and accurate steam sample panel.

4. Settle down. On-line analyzer deviations before and after a change in sample panel maintenance strategy. Source: Nalco

Figure 4 confirms the tangible positive effects of changing a sample panel maintenance strategy. The graph shows how one plant’s average analyzer deviation ratio, discussed earlier, fell precipitously with a shift in maintenance philosophy. The correlation between wet test results and sample panel readings was extremely low before the change but much higher after it. The improvement in the accuracy and reliability of on-line analyzers allowed operators to decrease their wet testing frequency to once per day. Figure 5 shows typical, much lower analyzer deviations several weeks after the change.

5. Great expectations. Typical analyzer performance after the maintenance strategy change shows only minor deviations in readings. Source: Nalco

Make alarms active in the DCS. Critical parameters should be alarmed on the plant’s DCS, and these alarms should be received in the control room. EPRI provides specific recommendations for DCS alarms in the publication, “Cycle Chemistry Guidelines for Fossil Plants.” Nalco’s recommendations for the critical alarm parameters include:

- All pH readings

- All specific conductivities

- All cation conductivities

- All sodium analyzers

- All silica analyzers

- All dissolved oxygen analyzers
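One way to audit a plant against this list is to export the sample panel points from the DCS and flag any critical parameter without an active alarm. The sketch below assumes a simple list of records with `tag`, `type`, and `alarm_active` fields; that layout and the example tags are hypothetical, and an actual DCS export will look different.

```python
# Parameter types from the recommended critical alarm list above.
CRITICAL_TYPES = {
    "pH",
    "specific conductivity",
    "cation conductivity",
    "sodium",
    "silica",
    "dissolved oxygen",
}


def unalarmed_critical_points(points: list[dict]) -> list[str]:
    """Return tags of critical analyzers that have no active DCS alarm."""
    return [
        p["tag"]
        for p in points
        if p["type"] in CRITICAL_TYPES and not p.get("alarm_active", False)
    ]


panel = [  # hypothetical export
    {"tag": "HRSG1-HP-PH", "type": "pH", "alarm_active": True},
    {"tag": "HRSG2-HP-PH", "type": "pH", "alarm_active": False},
    {"tag": "COND-CC", "type": "cation conductivity"},
    {"tag": "PANEL-TEMP", "type": "sample temperature", "alarm_active": False},
]
print(unalarmed_critical_points(panel))  # ['HRSG2-HP-PH', 'COND-CC']
```

A point with no alarm setting recorded is treated as unalarmed, which errs on the side of flagging it for review.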

In addition to DCS alarms, most plants have access to chemistry-trending software, the plant data historian, or both. These data should be examined often to verify that steam cycle chemistry is within required limits. Operators should review the last 24 hours of sample panel trend data (at a minimum), just as they review DCS data for the turbine. In many cases, the trend data can be used to detect changes or upsets even if the analyzer providing the reading is in need of repair or maintenance.

Clean and preserve during downtime. As mentioned earlier, cycling operation makes it extremely difficult for on-line analyzers to operate accurately and reliably. The intermittent sample flow allows analyzer probes to dry out and rewet, increasing wear on them and shortening their life. These problems can be minimized if probes are cleaned with demineralized water and if demin water or condensate is routed through idle sample points.

If its probes are coated with corrosion products, an on-line analyzer will be sluggish and inaccurate. Taking advantage of a cycled plant’s downtime to clean and preserve probes can increase analyzers’ accuracy and extend probe life after operation resumes. Idle probes should be removed at least monthly and cleaned with demin water and a lint-free cloth. After flushing the sample “T” with demin water, refill the probe header, and re-insert the probe. Remember to restore the flow of demin water to idle sample points after the probe has been cleaned.

Most sample panels have several different locations where the demin water tie-in could be made. One good location is on the downstream (cool) side of the sample coolers. There’s usually an existing union on all of these sample points that could be used to introduce demin water. This retrofit would require the installation of a block valve on the cooler effluent, to prevent both the backflow of demineralized water through the sample lines and the simultaneous flow of normal sample and demin water through the sample panel. A quick-disconnect and “T” can be installed downstream of this new block valve. Demin water would then enter the idle sample point through the quick-disconnect and flow through the sample point via the “T.” Figure 6 shows one possible arrangement for each sample point.

6. Keep it clean. A simplified schematic of a demin water flushing system for a sample panel. Source: Nalco

This arrangement provides several advantages. First, it preserves analyzers by maintaining flow through them even when the plant (or an HRSG) is shut down. Second, it minimizes sample line fouling because the continuous demin water flow during shutdown will tend to flush corrosion products that accumulate in the sample lines during operation. Third, it allows sample panel maintenance to be performed regardless of plant status.

Plants that have made this retrofit have reaped substantial benefits. But before you follow suit, here are two caveats. First, you must devise a way to distinguish whether a sample point is receiving demin water or a normal sample. One plant did so by creating engraved nameplates with “Demin water” on one side and “Sample” on the other. The plaques hang on a chain on the front of the panel around the block valve for each sample point. Operators simply turn the plaque around to read the sample point’s status.

The second caveat is to take care to ensure that demin water and normal samples are not fed to the same sample point at the same time. Mixing the two creates several potential problems and should be avoided. Sample point shutdown and start-up procedures can be modified (or created) to address this potential problem.

—Dan Sampson (dcsampson@nalco.com) is a power industry technical consultant for Nalco Co. He authored “Fleetwide standardization of steam cycle chemistry” in the March 2006 issue of POWER.