The data center industry has a power problem, and it sits at the rack. Artificial intelligence (AI) is pushing rack power into ranges where conversion losses are no longer background noise. The equipment in a data center rack, including graphics processing units (GPUs), central processing units (CPUs), and storage, runs on direct current (DC). Yet most facilities still distribute high-voltage alternating current (AC) to the rack and convert it to DC at the point of use. At AI-scale densities, that is an inefficiency the industry can no longer afford to ignore.

An Inconvenient Truth

GPU-intensive environments are calling for rack power densities as high as 300 kW. Converting AC to DC at the rack results in energy loss, cost, and complexity that compound as densities rise.

As the data center industry scales to massive capacities, the question of how to distribute power more effectively comes down to reliability and cost. Distributing power at higher voltages (such as 600 VDC) could improve reliability while cutting the copper costs of lower-voltage AC distribution: for the same power, a higher voltage draws less current, so smaller conductors will do.

High-voltage AC is used so widely because it is cost-effective to transmit. Those benefits disappear once the power reaches the rack and must be converted. The conversion wastes energy and generates heat that must be removed. At AI-era densities, these losses become measurable, and they show up twice: as electrical waste and as cooling overhead.

Google recognized this inefficiency and acted on it. The company removed standard server power supplies entirely and developed its own technology to distribute lower-voltage DC power (such as 48 V) at the rack level. The takeaway is not that this model is universally portable, but that DC-oriented rack designs can operate reliably when an operator controls hardware specifications, warranties, and service processes.

It is also important to define what “DC” means in practice. Some approaches distribute 48 VDC within the rack, others use higher-voltage DC to reduce current, and many pursue hybrid designs that centralize rectification and minimize conversion steps near the load. The common objective is fewer conversions at the rack and less heat generated where density is highest.
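Why higher-voltage DC reduces current, and with it copper, follows from two relations: a DC load draws I = P / V, and a conductor dissipates I²R as heat. The short sketch below compares the two voltages mentioned in this article, 48 VDC and 600 VDC, for one rack. The 100 kW load and the conductor resistance are hypothetical values chosen only to show the scaling; they do not describe any particular installation.

```python
"""Illustrative sketch: DC current and conductor loss versus distribution voltage.

Assumptions (hypothetical, for illustration only): a 100 kW rack load and a
fixed round-trip conductor resistance of 0.1 milliohm. Real designs size
conductors to the current, so this shows scaling, not a specific installation.
"""

RACK_POWER_W = 100_000           # assumed rack load, watts
CONDUCTOR_RESISTANCE_OHM = 1e-4  # assumed conductor resistance, ohms


def dc_current_amps(power_w: float, voltage_v: float) -> float:
    """Current drawn by a DC load: I = P / V."""
    return power_w / voltage_v


def conductor_loss_w(current_a: float, resistance_ohm: float) -> float:
    """Resistive (I^2 * R) loss, dissipated as heat in the conductor."""
    return current_a ** 2 * resistance_ohm


for volts in (48, 600):
    amps = dc_current_amps(RACK_POWER_W, volts)
    loss = conductor_loss_w(amps, CONDUCTOR_RESISTANCE_OHM)
    print(f"{volts:>4} VDC: {amps:8.1f} A, conductor loss {loss:7.1f} W")
```

Raising the voltage 12.5-fold (48 V to 600 V) cuts the current by the same factor and the I²R loss in the same conductor by 12.5², about 156 times. In practice a designer would usually take the lower current as permission to use a smaller conductor, which is where the copper savings come from.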

What the Losses Look Like

At lower rack densities, conversion losses are easy to dismiss because larger facility-level variables obscure them. At high rack power, a small percentage loss becomes meaningful. For example, in a 100-kW rack, a 4% conversion loss is 4 kW. Whether losses occur in power supplies, conductors, or other power-related components, they all end up as heat, so a 4-kW conversion loss becomes a 4-kW heat load that the cooling system must remove.
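The same arithmetic can be run across rack densities to show why the loss is easy to ignore at low power and hard to ignore at AI densities, and how it is paid for twice. The sketch below assumes a flat 4% conversion loss (the figure used in the example above) and rack sizes of 10, 100, and 300 kW. As a further hypothetical, it assumes the cooling system needs 0.3 kW of electrical input for every kW of heat it removes; that overhead figure is a placeholder, not measured data.

```python
"""Sketch: conversion loss becomes heat, and removing that heat costs power too.

Assumptions (illustrative only): a flat 4% rack-level conversion loss and a
hypothetical cooling overhead of 0.3 kW of cooling input per kW of heat removed.
"""

CONVERSION_LOSS_FRACTION = 0.04  # 4% loss, as in the example in the text
COOLING_KW_PER_KW_HEAT = 0.3     # hypothetical cooling overhead


def conversion_heat_kw(rack_kw: float,
                       loss_fraction: float = CONVERSION_LOSS_FRACTION) -> float:
    """Every kW lost in conversion ends up as a kW of heat in the rack."""
    return rack_kw * loss_fraction


def total_penalty_kw(rack_kw: float) -> float:
    """Electrical loss plus the extra cooling power needed to remove it."""
    heat = conversion_heat_kw(rack_kw)
    return heat + heat * COOLING_KW_PER_KW_HEAT


for rack_kw in (10, 100, 300):
    heat = conversion_heat_kw(rack_kw)
    print(f"{rack_kw:>3} kW rack: {heat:5.1f} kW of heat, "
          f"{total_penalty_kw(rack_kw):5.1f} kW total penalty")
```

Because the penalty scales linearly with rack power, a fraction of a kilowatt at 10 kW grows to more than ten kilowatts per rack at 300 kW under these assumptions.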

The Manufacturer Barrier

Even where DC can be delivered, adoption is constrained by the equipment manufacturers ship and the AC input it is built to expect. Most server manufacturers ship products with built-in AC power supplies. Removing or bypassing the standard power supply would depart from existing catalog configurations and would likely void manufacturer warranties. The default AC configuration thus becomes a structural barrier to DC adoption.

Distribution equipment, switchgear, circuit breakers, and protection devices are all designed around AC standards. A DC distribution network would require custom-built infrastructure, including DC-rated breakers and protection systems, because DC has no natural current zero crossing to help extinguish an arc when a circuit opens. That means rethinking power delivery from the substation to the rack, especially if the goal is facility-wide DC, because today’s supply chain, field service model, and commissioning practices are optimized for AC.

For an industry-wide conversion to occur, customers would have to push for it by demanding that their loads be served with DC. If that happened, the entire ecosystem, from original equipment manufacturers (OEMs) to supporting component suppliers, would have to adapt. Today, however, the priority is speed to market, and that priority almost always overrules the push for architectural innovation.

In the dash to deploy AI infrastructure, a six-month delay to implement a custom DC architecture is not enticing. Delivering on time takes precedence over the theoretical benefits of a DC redesign. It’s a conundrum: manufacturers won’t produce DC equipment until customers demand it, and customers aren’t demanding it because the supply chain isn’t ready to support it. In practice, the constraint is rarely “Can DC work?” It’s “Can we buy it, warranty it, service it, and deploy it on schedule?”

Misconceptions and Hidden Opportunities

DC has a reputation for being more dangerous because it does not alternate; the perception is that a continuous current delivers a more harmful shock. This concern is behind much of the reluctance to consider DC, even though proper engineering and protection systems can manage these risks.

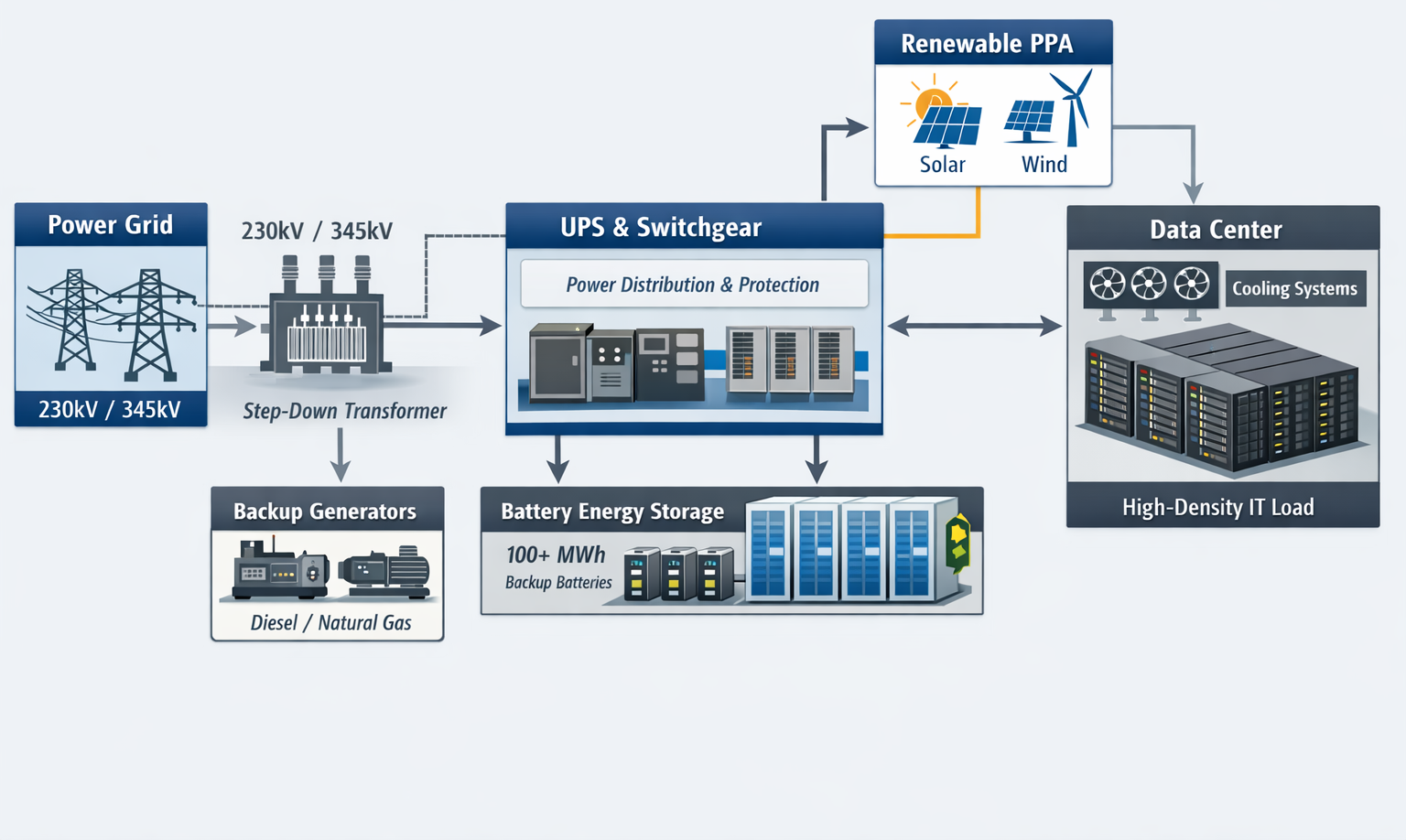

Yet DC power is already in use in data centers, in the battery banks of uninterruptible power supplies (UPS). The infrastructure to handle high-voltage DC safely already exists in any facility with a battery-backed UPS (Figure 1). The missing piece is not the technology but the willingness to extend DC distribution beyond the UPS to the rack in an interoperable, standards-aligned way that OEMs will support.

A data center with a DC architecture could improve power delivery and cut costs by eliminating conversion stages, simplifying the power path, centralizing rectification for easier monitoring and servicing, and lowering the rack-level thermal burden created by conversion losses. Simplifying the power path alone could reduce both capital expenditure and ongoing maintenance costs.
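Removing conversion stages matters because stage efficiencies multiply: the end-to-end efficiency of a power path is the product of the efficiencies of its stages. The sketch below compares two hypothetical chains. The stage names and every efficiency value are illustrative assumptions, not measurements of any product or architecture, and they are not taken from this article.

```python
"""Sketch: end-to-end efficiency of a power path is the product of its stages.

Assumptions (hypothetical): the stage lists and per-stage efficiencies below are
placeholders chosen for illustration, not vendor or field data.
"""
from math import prod

# Hypothetical AC-to-the-rack path with several conversions before the chip.
AC_PATH = {
    "UPS (double conversion)": 0.96,
    "PDU transformer": 0.98,
    "server AC/DC power supply": 0.94,
    "on-board DC/DC": 0.95,
}

# Hypothetical DC path: centralized rectification, fewer steps near the load.
DC_PATH = {
    "central rectifier": 0.97,
    "rack DC/DC": 0.98,
    "on-board DC/DC": 0.95,
}


def chain_efficiency(stages: dict[str, float]) -> float:
    """Multiply per-stage efficiencies to get end-to-end efficiency."""
    return prod(stages.values())


def loss_kw(delivered_kw: float, stages: dict[str, float]) -> float:
    """Input power minus delivered power for a given load at the chip."""
    return delivered_kw / chain_efficiency(stages) - delivered_kw


for name, path in (("AC path", AC_PATH), ("DC path", DC_PATH)):
    print(f"{name}: {len(path)} stages, "
          f"efficiency {chain_efficiency(path):.3f}, "
          f"loss at 100 kW delivered {loss_kw(100, path):.1f} kW")
```

The absolute results depend entirely on the assumed values. The point is structural: each stage removed takes its loss factor out of the product, and the saving grows with the power flowing through the chain.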

It’s important to note that DC is not a universal win. At modest rack densities, in mixed-use environments, or where standardized OEM AC configurations are non-negotiable, the operational friction can outweigh the efficiency upside. That’s why DC is showing the clearest value today in highly standardized, high-scale deployments that can control more of the stack.

The Inflection Point

Before long, the inefficiency of today’s power systems will force a decision. Once power supply manufacturers and OEMs recognize it, and customers demand products that address the compounding cost of rack-level conversion at AI densities, the market will react. As rack power climbs, conversion overhead becomes a visible line item in operating budgets and a constraint on thermal design. The engineering case for reducing conversion stages is credible, but adoption is gated by ecosystem readiness: standards, warranties, protection devices, interoperability, commissioning practices, and speed-to-market execution.
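A continuous loss can be annualized to show how conversion overhead becomes a budget line item. The sketch below assumes the 4-kW loss from the 100-kW rack example runs around the clock all year and uses a hypothetical flat electricity price of $0.08/kWh; the rack counts are also illustrative. Cooling energy is excluded, so the figures understate the full penalty.

```python
"""Sketch: annualizing a continuous conversion loss.

Assumptions (hypothetical): the loss runs 24/7 all year, and the electricity
price below is a placeholder, not a quoted tariff. Cooling energy is excluded.
"""

HOURS_PER_YEAR = 8760  # 365 days x 24 h
PRICE_PER_KWH = 0.08   # hypothetical flat price, USD per kWh
LOSS_PER_RACK_KW = 4.0 # 4% of a 100-kW rack, from the example in the text


def annual_loss_kwh(loss_kw: float, hours: float = HOURS_PER_YEAR) -> float:
    """Energy wasted per year by a constant loss."""
    return loss_kw * hours


def annual_loss_cost(loss_kw: float, price_per_kwh: float) -> float:
    """Cost of that wasted energy at a flat price."""
    return annual_loss_kwh(loss_kw) * price_per_kwh


for racks in (1, 100, 1000):
    loss_kw = LOSS_PER_RACK_KW * racks
    print(f"{racks:>5} racks: {annual_loss_kwh(loss_kw):>12,.0f} kWh/yr, "
          f"${annual_loss_cost(loss_kw, PRICE_PER_KWH):>12,.0f}/yr")
```

Substituting measured losses and actual tariffs turns the same two functions into a first-pass estimate for a specific site.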

The challenge is clear: patch an inefficient architecture or commit to a fundamental redesign. In practice, teams can keep scaling an AC-dominant architecture and accept rack-level conversion overhead, or pursue DC-oriented designs in defined, supportable blocks where hardware, service, and protection models are aligned. DC power at the rack is a proven approach. What remains unproven at industry scale is not the engineering but the standardization required to make it routine.

—Scotty Embley is an associate at hi-tequity, where he supports business development, sales operations, and client engagement. His work includes market research, opportunity development, and sales cycle coordination.