Why megawatts, siting, firm generation, and power-aware design are becoming the real inner loop of the artificial intelligence (AI) race.

“We are knocking on the door of these incredible capabilities. The ability to build basically machines out of sand.” Dario Amodei, CEO of Anthropic, used that phrase at Davos this January to describe how silicon is being turned into intelligence at unprecedented scale. It is a memorable line, but for the power sector, the more important question is what comes next. Machines made out of sand still need a physical substrate to run. That substrate is not just chips, fiber, or cooling. It is power.

At the same World Economic Forum, Jensen Huang, CEO of NVIDIA, described the current moment as the largest infrastructure buildout in human history, with compute factories, chip factories, and AI factories rising at once. When asked about the competitive balance between the United States and China, Huang’s answer was blunt: the United States may lead in models and chips, but China is spinning up power generation faster. If energy is the core of the inner loop, he suggested, that advantage matters.

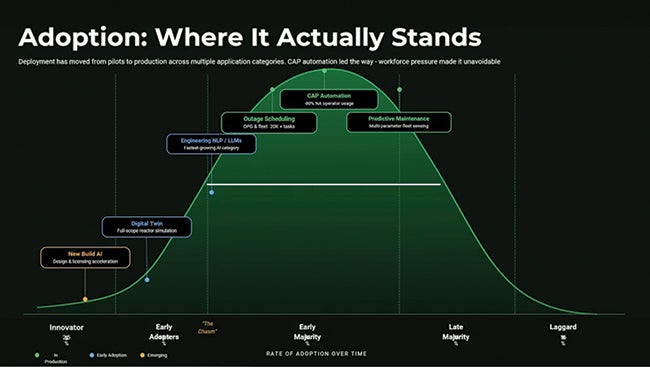

For the generation sector, this is the real story. AI demand is no longer just a technology story. It is a load-growth, siting, interconnection, generation, and reliability story. And it is moving fast enough that utilities, grid operators, data center developers, and regulators are being forced to rethink assumptions that held for years.

Power Availability Has Become the Binding Constraint

For much of the last decade, the data center conversation centered on processors, accelerators, networking, and latency. Those factors still matter, but they are no longer the first gating issue on many large projects. The new first question is simpler: Where can we get enough reliable power, how fast, and under what operating conditions?

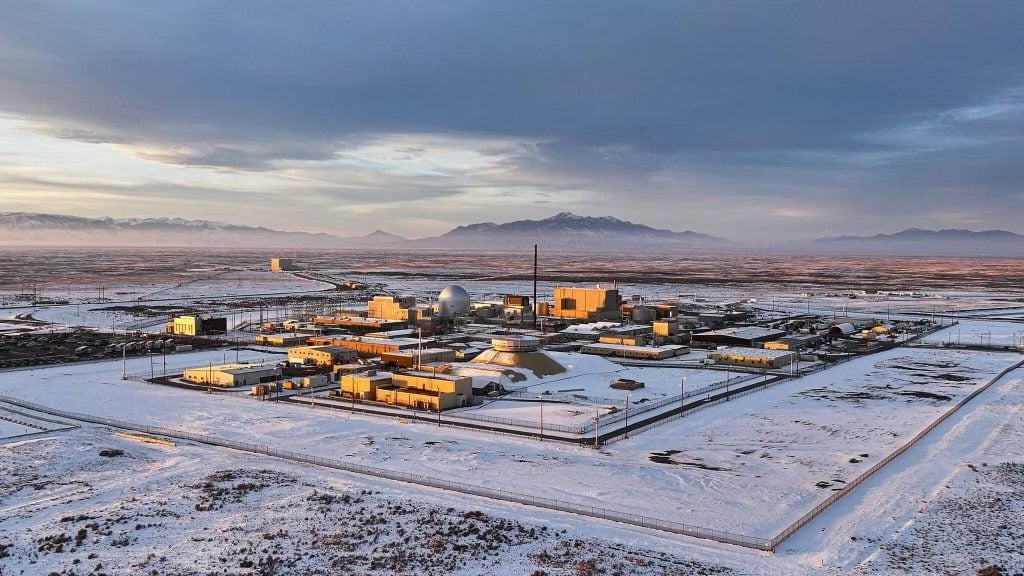

That shift is changing who gets to participate in the next phase of AI infrastructure growth. Regions with access to firm generation, transmission headroom, water, and a credible path to interconnection are gaining strategic importance. Regions without those things may still attract interest, but interest alone does not energize a campus.

This is why the AI buildout increasingly looks less like a cloud scaling story and more like a power development story. Generation timelines, substation capacity, transmission upgrades, permitting, fuel arrangements, and resilience strategies now shape the deployment schedule at least as much as server procurement does.

What That Means for Site Selection

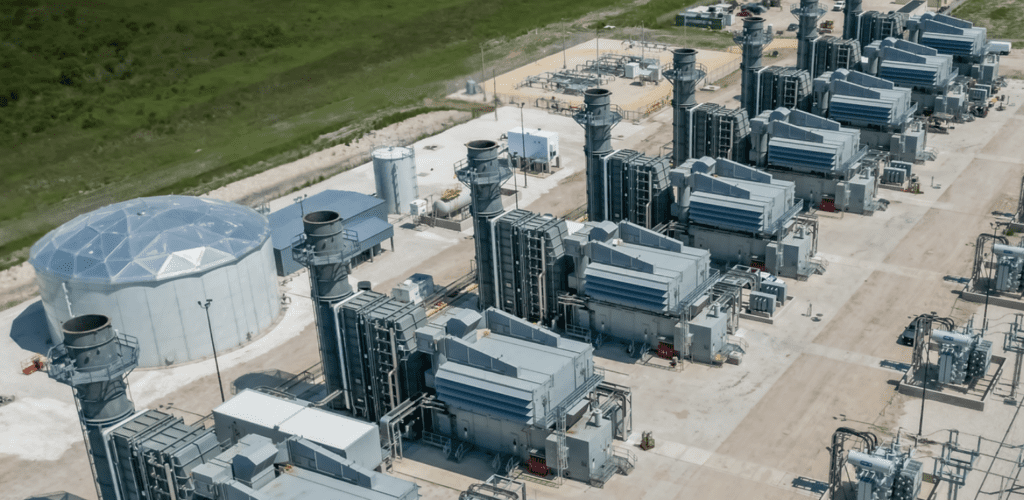

Historically, large data centers clustered around fiber routes, major metros, and established technology hubs. Today, developers are often working backwards from power. They are identifying where firm megawatts can actually be secured, then designing the land, network, and campus plan around those constraints.

That power-first logic is why interest has surged in regions with access to natural gas, nuclear, hydropower, or hybrid generation portfolios. It is also why behind-the-meter generation, on-site storage, and microgrids have moved from edge cases to mainstream planning conversations. For many projects, the choice is no longer between grid power and self-supply. It is how to combine both in a way that delivers speed, reliability, and an acceptable emissions trajectory.

The implication for utilities is significant. Economic development tied to AI loads now depends not just on having attractive rates or available land, but on being able to offer credible, staged pathways to capacity. For developers, speed-to-power has become as important as speed-to-market.

“We must distinguish between a grid failure and a paradigm shift,” Pooya Kabiri, CEO of METIS Power, said. “The existing transmission system is operating as designed. The paradigm shift is the unplanned arrival of AI-loads, which demand an order-of-magnitude more power with far stricter reliability parameters than the grid was architected to provide.

“Microgrids represent a strategic, distributed response. By co-locating high-efficiency gas generation, renewables, and storage, they create tailored power ecosystems that meet the exacting standards of modern data centers. This is not a replacement for the macro-grid, but a necessary and immediate layer of resilience that buys us time for the large-scale transmission and next-gen nuclear investments to mature,” he said.

Grid Coordination Is Becoming Strategic Infrastructure

Recent market developments reinforce the point. On April 1, 2026, the Southwest Power Pool (SPP) expanded into the Western Interconnection, becoming the first regional transmission organization with services spanning two interconnections. SPP's footprint now covers 732,000 square miles across 17 states and includes 20 million people. The expansion brought in utilities including Basin Electric, Colorado Springs Utilities, Platte River Power Authority, Tri-State Generation and Transmission, and multiple Western Area Power Administration regions. For AI-related development, milestones like this are more than regulatory or market-design news. They are site-selection signals.

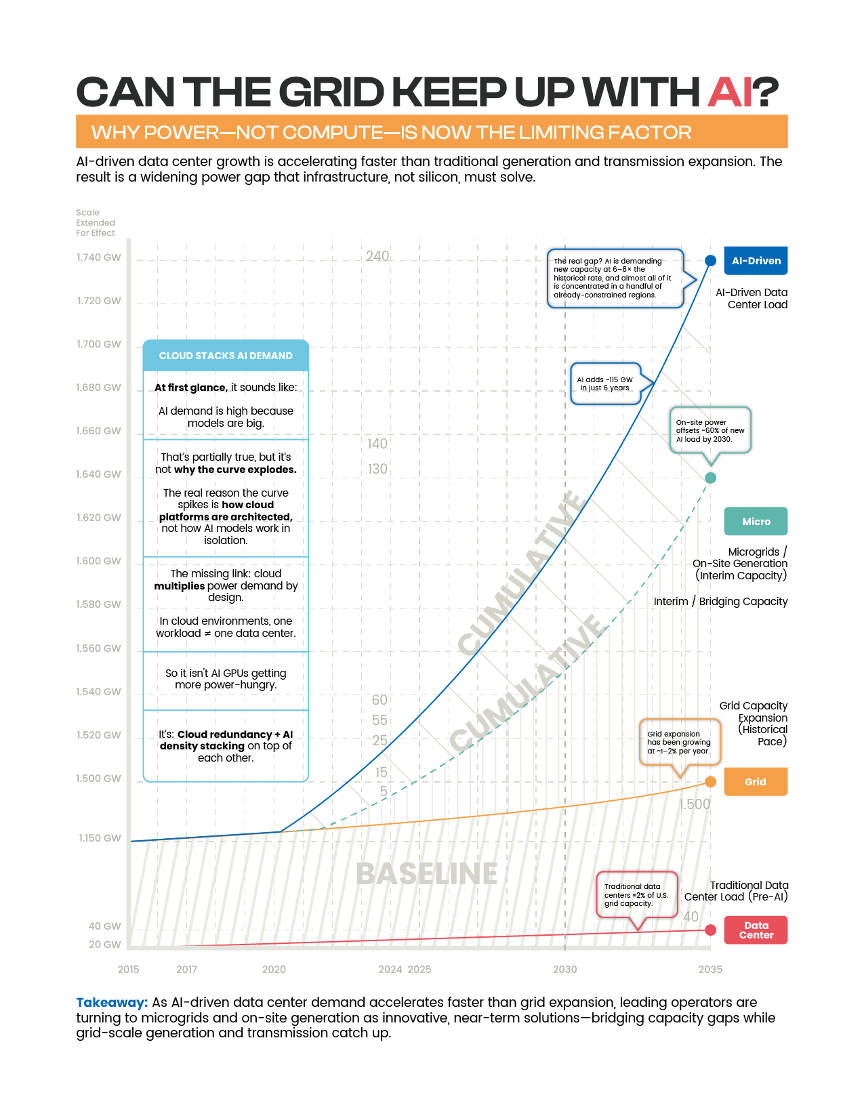

A broader organized market can improve access to dispatchable resources, support reliability planning, and give large-load customers more optionality. It does not eliminate the hard work of building generation or transmission, but it can improve how available resources are coordinated. In an environment where megawatts are scarce and queue timelines matter, that coordination becomes valuable infrastructure in its own right (Figure 1).

For utilities and public power entities, this also raises a strategic question: how quickly can market structures, planning models, and procurement approaches evolve to accommodate large new loads without shifting undue risk to existing customers? That question will become more urgent as AI demand intensifies.

Cloud Architecture Has Physical Consequences

One reason the power challenge is easy to underestimate is that cloud demand can appear abstract until it shows up in interconnection studies. But highly available cloud architecture has physical consequences. Active-active regions, multi-zone failover, geo-redundant storage, and inference replication can multiply the real-world power footprint of what looks like a single logical workload.

From the perspective of a utility or grid planner, this means one enterprise deployment can translate into simultaneous demand growth across multiple facilities and regions. Traditional forecasting methods were not built for that kind of fast-moving, software-mediated load expansion. As a result, planners increasingly need better visibility into the architecture assumptions behind AI deployments, not just the headline load number attached to a project.

This is not an argument against redundancy. It is an argument for recognizing that redundancy has an energy cost, and that the cost becomes material at hyperscale.
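To make the multiplication concrete, here is a rough back-of-envelope sketch in Python. Every number in it is a hypothetical assumption chosen for illustration, not data from any real deployment, and the model is deliberately simple: it assumes each active region carries a full copy of the workload, each warm standby draws a fixed fraction of full load, and a single power usage effectiveness (PUE) figure applies everywhere.

```python
from dataclasses import dataclass


@dataclass
class Deployment:
    """One logical workload and its redundancy choices (all values hypothetical)."""
    it_load_mw: float        # IT load of one full copy of the workload
    active_regions: int      # regions serving traffic with a full copy (active-active)
    standby_regions: int     # warm failover regions
    standby_fraction: float  # share of full IT load a warm standby draws
    pue: float               # power usage effectiveness: facility power / IT power


def grid_footprint_mw(d: Deployment) -> float:
    """Total facility power across all regions for one logical workload."""
    # Count full copies plus the partial draw of each warm standby.
    copies = d.active_regions + d.standby_regions * d.standby_fraction
    return d.it_load_mw * copies * d.pue


# Illustrative inputs only: a 20 MW workload at an assumed PUE of 1.25.
single_site = Deployment(it_load_mw=20.0, active_regions=1, standby_regions=0,
                         standby_fraction=0.0, pue=1.25)
redundant = Deployment(it_load_mw=20.0, active_regions=2, standby_regions=1,
                       standby_fraction=0.5, pue=1.25)

print(grid_footprint_mw(single_site))  # 25.0
print(grid_footprint_mw(redundant))    # 62.5
```

Under these assumptions, the same logical workload draws 2.5 times as much power once it is made highly available, and that draw is spread across three sites a planner might otherwise forecast independently. Real architectures rarely run every active region at full load, so the actual multiplier depends on details only the customer can supply.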

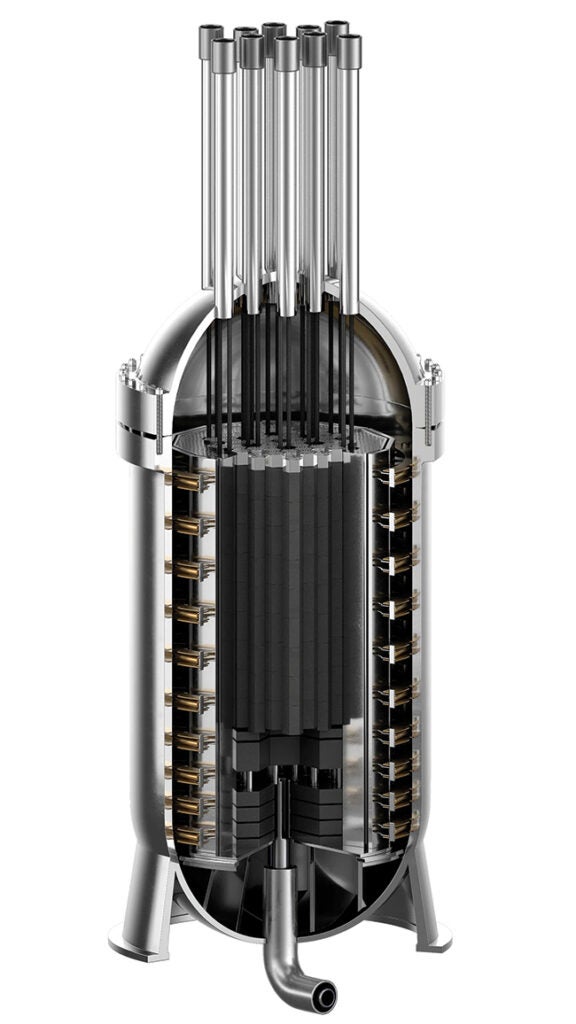

Firm Power Is Back at the Center of the Conversation

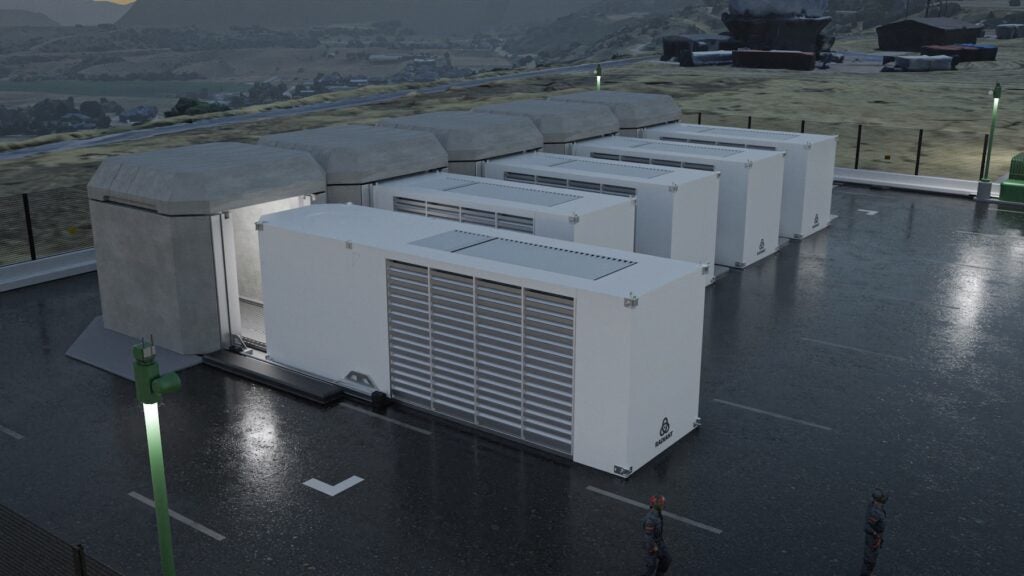

Developers are gravitating toward portfolios that can provide dependable output. Natural gas remains the most common near-term bridge because it can be deployed at meaningful scale on timelines the market can understand. Nuclear has reentered the conversation as a longer-horizon solution, particularly for large campuses seeking durable clean firm capacity. Storage and renewables remain important, but by themselves they often do not satisfy the near-term reliability profile AI campuses want.

This does not mean every project will look the same. The best mix depends on geography, transmission access, fuel logistics, environmental constraints, customer tolerance for risk, and local policy. But it does mean the industry is moving away from the assumption that intermittent supply plus grid access is enough for all high-density compute growth.

The practical question is no longer whether firm power matters. It is how much firm power is needed, where it should sit, and how it should be integrated with the wider system.

Efficiency Matters Because Every Lost Percent Costs Megawatts

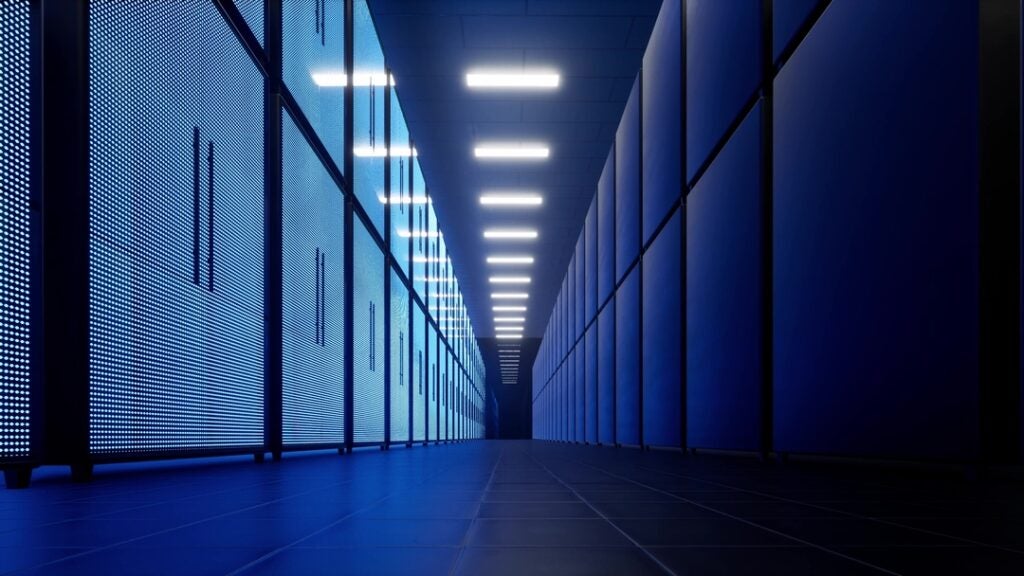

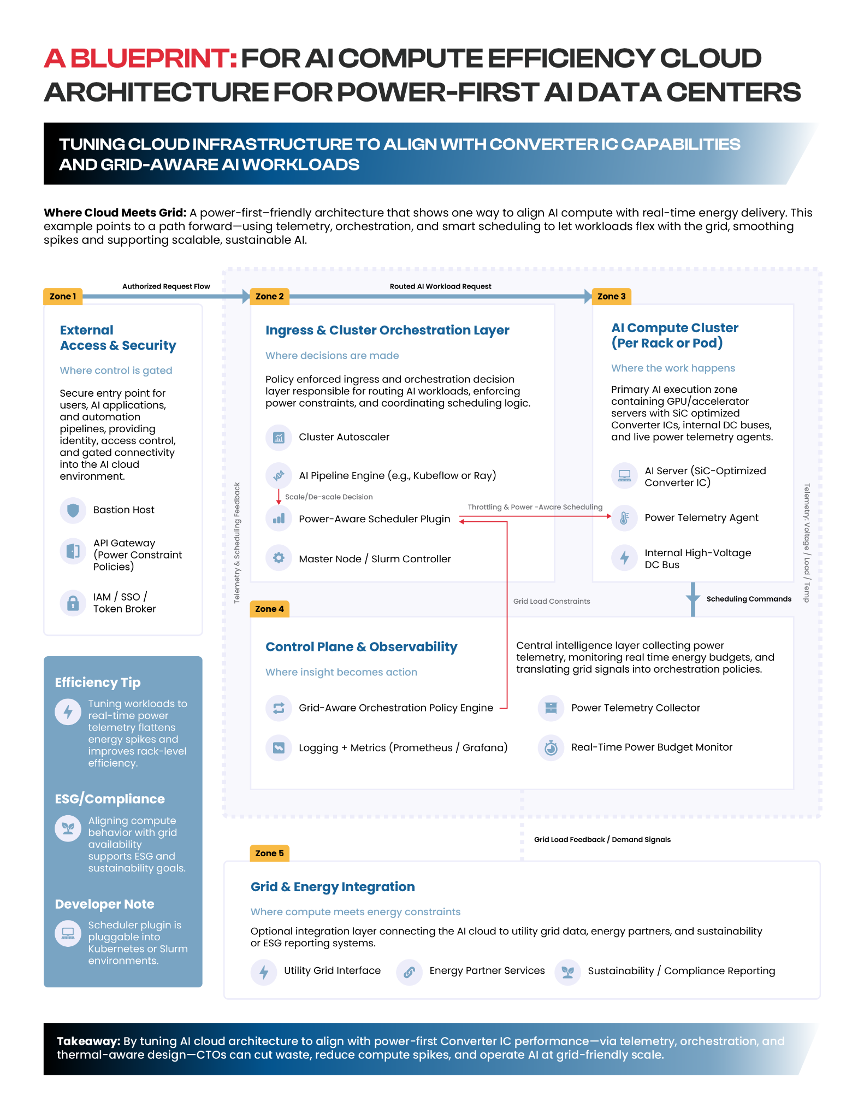

Even with better siting and more generation, waste matters. At AI scale, small efficiency gains compound into meaningful reductions in required capacity, cooling burden, and operating cost (Figure 2). That is one reason power electronics, thermal design, and power-aware orchestration are becoming more important.

Converter integrated circuits (ICs), voltage regulation choices, board layout, airflow design, rack density, and workload scheduling are not glamorous topics, but they determine how much useful work a facility can extract from every megawatt. When compute orchestration is linked to telemetry and real-time power conditions, operators gain more than efficiency. They gain optionality. They can smooth peaks, reduce losses, and make infrastructure decisions with a clearer picture of what the load is actually doing.
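One way to picture power-aware orchestration is a scheduler that places deferrable jobs only where there is headroom under a power cap. The Python sketch below is a toy illustration under stated assumptions: the function name `schedule_under_cap`, the interval loads, the job sizes, and the 100 MW cap are all hypothetical, each job is assumed to fit in a single interval, and the greedy "earliest interval with room" rule is a simplification rather than how any particular operator schedules work.

```python
from typing import List, Optional, Tuple


def schedule_under_cap(
    base_load_mw: List[float],
    jobs_mw: List[float],
    cap_mw: float,
) -> Tuple[List[float], List[Optional[int]]]:
    """Greedily place each deferrable job in the earliest interval with headroom.

    base_load_mw: non-deferrable load in each interval (from telemetry, assumed)
    jobs_mw:      power draw of each deferrable, single-interval job
    cap_mw:       campus power cap the operator wants to stay under
    Returns the resulting load per interval and, for each job, the interval
    index it was assigned to (None if no interval had room).
    """
    load = list(base_load_mw)  # copy so the caller's telemetry is not modified
    placement: List[Optional[int]] = []
    for job in jobs_mw:
        slot = next(
            (i for i, mw in enumerate(load) if mw + job <= cap_mw),
            None,
        )
        if slot is not None:
            load[slot] += job
        placement.append(slot)
    return load, placement


# Hypothetical day split into four intervals, with three deferrable jobs.
base = [70.0, 95.0, 90.0, 60.0]
jobs = [20.0, 20.0, 10.0]
load, placement = schedule_under_cap(base, jobs, cap_mw=100.0)

print(load)       # [100.0, 95.0, 90.0, 80.0]
print(placement)  # [0, 3, 0]
```

In this example, the peak stays at 100 MW. Had all three jobs landed on the 95 MW interval, the campus would have peaked at 145 MW. That gap is the optionality the telemetry buys.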

This is where the data center conversation becomes directly relevant to the power audience. Energy efficiency is no longer just a sustainability metric or a hardware optimization exercise. It is a capacity strategy. The more intelligently power is converted, distributed, and consumed inside the campus, the less strain is pushed outward onto the grid.
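The arithmetic behind "efficiency as capacity" can be sketched in a few lines of Python. The figures below are assumptions for illustration only: 100 MW delivered to the silicon, an end-to-end conversion efficiency of 90% versus 92%, and a PUE of 1.3. The function name `grid_draw_mw` and the two-factor model (conversion losses, then facility overhead) are simplifications, not a description of any specific facility.

```python
def grid_draw_mw(compute_mw: float, conversion_eff: float, pue: float) -> float:
    """Grid power needed to deliver compute_mw of useful power to the chips.

    conversion_eff: assumed end-to-end efficiency from rack input to chip (0-1]
    pue:            power usage effectiveness, facility power / IT power (>= 1)
    """
    if not 0.0 < conversion_eff <= 1.0:
        raise ValueError("conversion_eff must be in (0, 1]")
    if pue < 1.0:
        raise ValueError("pue must be at least 1.0")
    it_power_mw = compute_mw / conversion_eff  # power entering the racks
    return it_power_mw * pue                   # add cooling and other overhead


# Hypothetical campus: 100 MW reaching the silicon, PUE of 1.3.
before = grid_draw_mw(100.0, conversion_eff=0.90, pue=1.3)
after = grid_draw_mw(100.0, conversion_eff=0.92, pue=1.3)

print(round(before, 1))          # 144.4
print(round(after, 1))           # 141.3
print(round(before - after, 1))  # 3.1
```

Under these assumptions, two points of conversion efficiency free up roughly 3 MW at the meter for the same useful compute, because every watt lost in conversion also has to be cooled.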

“AI can’t solve all our problems. You don’t need it to. You don’t use a car to drive a single block, or a plane to travel five miles. You choose the right tool for the job,” said Kelsey Hightower, former distinguished engineer with Google. “Yet the way we’re deploying AI in data centers today may be the most inefficient form of computing we’ve ever attempted. Power alone won’t fix that. Real gains will come from purpose-built hardware and workload discipline, the same as it has always been.”

Security and Reliability Are Now the Same Planning Conversation

As AI infrastructure becomes more tightly coupled to generation, substations, controls, storage, and campus-level energy management, the line between cyber risk and power risk gets thinner. A modern AI campus is not just a collection of servers. It is a tightly orchestrated physical-digital system. The operational consequences of outage, misconfiguration, control-system compromise, or supply disruption can travel quickly across that system.

For operators, that means resilience can no longer be treated as a downstream security layer. It has to be built into siting, control architecture, segmentation, vendor strategy, and emergency operations from the start. The same is true for utilities serving these loads. Reliability planning now has to account for the fact that some of the most demanding new customers on the system are also among the most digitally complex.

That convergence creates new obligations, but it also creates an opportunity. Projects designed from day one around power integrity, redundancy discipline, cybersecurity, and operating visibility will be better positioned than those that bolt these concerns on later.

The Opportunity for the Power Sector

The power industry is no longer supporting the AI economy from the sidelines. It is determining its pace. That gives generators, utilities, grid operators, public power entities, and infrastructure investors a central role in what comes next.

The winners will not necessarily be the organizations with the boldest AI rhetoric. They will be the ones that can connect load growth to real infrastructure: deliverable megawatts, credible schedules, resilient campus design, workable interconnection paths, and operating models that recognize both the benefits and the limits of the grid.

Amodei’s image of intelligence emerging from sand is powerful because it captures the wonder of the moment. But in the power sector, wonder is not enough. AI factories do not run on fascination. They run on generation, transmission, conversion, cooling, controls, and disciplined operations.

The machines may be made out of sand. The race will still be won with steel, copper, concrete, and megawatts.

—Michelle Buckner is a former NASA ISSO, cybersecurity architect, cloud infrastructure strategist, and contributing writer covering the intersection of AI, energy, and national security.