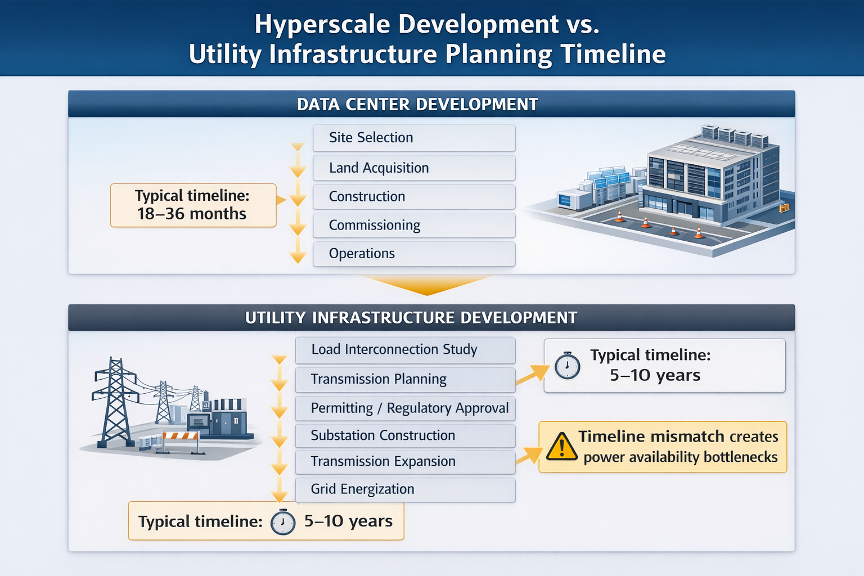

A power paradox is emerging in the hyperscale era: computing demand is accelerating, yet power availability is increasingly the constraint that determines where data centers are built, how quickly they can be energized, and how large they can become. Campuses drawing 300–600 MW of electrical capacity, roughly what it takes to power a mid-size city, are becoming part of the development conversation. The timeline for completing these large data center projects is measured in months, rather than the years associated with the development of traditional industrial facilities. This misalignment between how fast campuses are built and how fast power can be delivered has become a key issue, making power availability a controlling factor in data center siting and design.

Analysts predict that global data center power consumption could exceed 1,000 TWh annually by the early 2030s, up from the 460 TWh consumed in 2022. The increase is driven primarily by the rapid expansion of artificial intelligence (AI) alongside conventional digital services. In the U.S., hyperscale facilities already account for a large share of new large-load interconnection requests, and hyperscalers are prompting utilities to reconsider their long-term generation and transmission planning assumptions.
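To put that projection in perspective, the short sketch below computes the compound annual growth rate implied by moving from 460 TWh in 2022 to 1,000 TWh. The article gives only "the early 2030s" as the horizon, so the target years 2030 through 2033 are an assumption made purely for illustration, and the function name is hypothetical.

```python
def implied_cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate that takes start_value to end_value in `years` years."""
    if start_value <= 0 or end_value <= 0 or years <= 0:
        raise ValueError("values and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


START_YEAR = 2022
START_TWH = 460.0    # 2022 consumption cited in the article
TARGET_TWH = 1000.0  # threshold cited in the article

# Assumption: "early 2030s" is read as any year from 2030 through 2033.
for target_year in range(2030, 2034):
    rate = implied_cagr(START_TWH, TARGET_TWH, target_year - START_YEAR)
    print(f"{target_year}: {rate:.1%} per year")
```

Under those assumed horizons, the implied growth works out to roughly 7 to 10 percent per year, sustained for about a decade.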

The Infrastructure Challenges

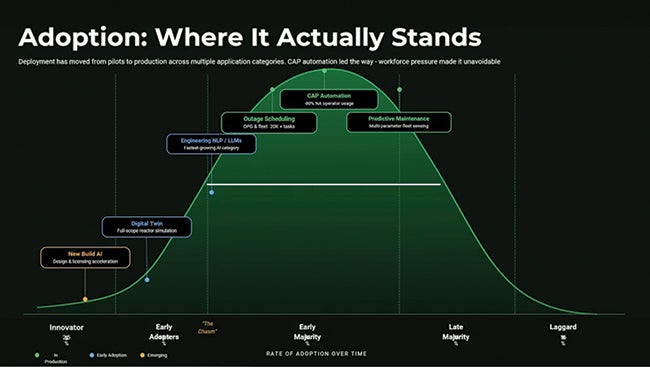

To examine how hyperscale computing is affecting power infrastructure, it’s helpful to look at the following factors: technical grid architecture, AI workload power density, regional grid stress, interconnection queue challenges, and transformer supply constraints.

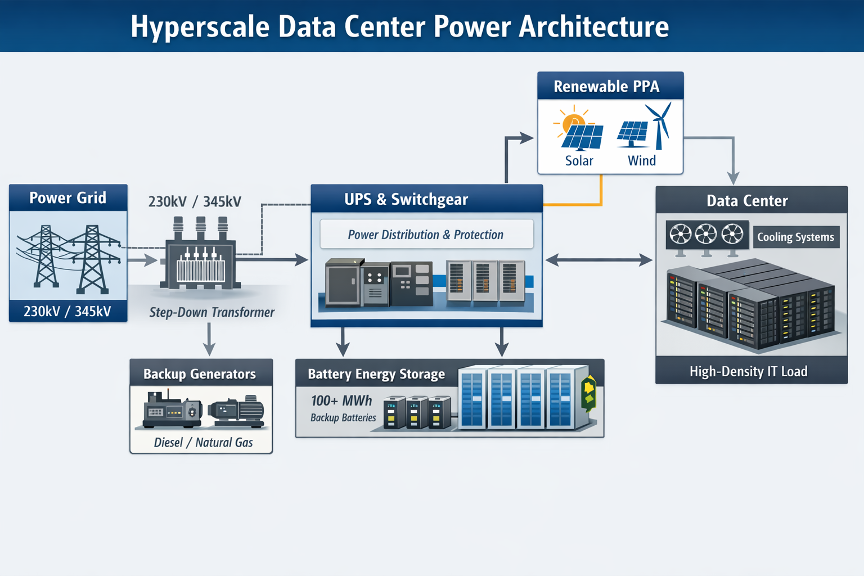

Technical Grid Architecture. A 400-MW hyperscale campus needs a dedicated high-voltage substation connected to multiple 230-kV or 345-kV transmission feeds. Large step-down transformers then deliver power to medium-voltage switchgear, which feeds uninterruptible power supply (UPS) systems, battery storage, and power distribution units before the power reaches the server racks. It’s crucial that utilities design these connections to N-1 reliability, so that the failure of any single piece of equipment does not disrupt the campus.
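The N-1 idea can be illustrated with a deliberately simplified capacity check: remove each transmission feed in turn and confirm that the remaining feeds can still carry the campus load. The feed ratings below are hypothetical, and a real N-1 study also covers transformers, breakers, thermal limits, and voltage stability, none of which this sketch models.

```python
from typing import Sequence


def survives_n_minus_1(feed_ratings_mw: Sequence[float], load_mw: float) -> bool:
    """Return True if the load can still be served with any single feed out of service.

    Simplification: only feed capacity is compared with load. Transformer,
    breaker, and stability constraints are ignored.
    """
    if len(feed_ratings_mw) < 2:
        # With a single feed, losing it always drops the load.
        return False
    total_mw = sum(feed_ratings_mw)
    return all(total_mw - rating >= load_mw for rating in feed_ratings_mw)


CAMPUS_LOAD_MW = 400.0  # campus size used in the article

# Hypothetical feed ratings, chosen only to show a passing and a failing case.
print(survives_n_minus_1([250.0, 250.0, 250.0], CAMPUS_LOAD_MW))  # three feeds
print(survives_n_minus_1([250.0, 250.0], CAMPUS_LOAD_MW))         # two feeds
```

In this toy example, three 250-MW feeds leave 500 MW available after any single outage, which covers the 400-MW load, while two 250-MW feeds leave only 250 MW and fail the check.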

AI Workload Power Density. Rack densities have historically averaged 5–10 kW per rack, whereas AI-optimized facilities now exceed 50–100 kW per rack. This roughly tenfold increase affects electrical distribution systems, cooling architectures, and overall facility design. To manage these loads, liquid cooling, higher-capacity busways, and advanced power monitoring systems are becoming standard.
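A quick calculation shows what this density shift means in practice: the same IT load fits into far fewer racks, so much more power and heat is concentrated in each one. The 100-MW IT load below is a hypothetical figure chosen for illustration, and the densities are the range endpoints quoted above.

```python
import math


def racks_needed(it_load_mw: float, kw_per_rack: float) -> int:
    """Number of racks required to host a given IT load at a given rack density."""
    if it_load_mw <= 0 or kw_per_rack <= 0:
        raise ValueError("load and density must be positive")
    return math.ceil(it_load_mw * 1000 / kw_per_rack)


IT_LOAD_MW = 100.0  # hypothetical IT load, not a figure from the article

# Rack densities quoted in the article: 5-10 kW historically, 50-100 kW for AI.
for density_kw in (5, 10, 50, 100):
    count = racks_needed(IT_LOAD_MW, density_kw)
    print(f"{density_kw:>3} kW per rack -> {count:,} racks")
```

At 10 kW per rack the assumed load needs 10,000 racks, while at 100 kW per rack it needs 1,000, each carrying ten times the electrical and cooling burden.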

Regional Grid Stress. Northern Virginia, currently the world’s largest data center market, now exceeds 3 GW of data center load, prompting new substation construction and transmission expansion. The Electric Reliability Council of Texas (ERCOT) has said that the rapid growth of hyperscale data centers is materially affecting its load forecasts. EirGrid, Ireland’s grid operator, was effectively forced to restrict new data center connections in the Dublin region because of transmission constraints and electricity supply risks.

Interconnection Queue Challenges. Several U.S. grid operators are seeing a rapid rise in large-load interconnection queues. The combination of new generation projects and industrial consumers’ requests for hundreds of megawatts of capacity poses a new challenge for utilities. Long-term load forecasting has become more complicated, and utilities must sequence transmission upgrades years in advance (Figure 1).

Transformer Supply Constraints. Global manufacturing constraints have pushed procurement lead times for the high-capacity power transformers used in hyperscale substations beyond two years. This equipment bottleneck affects both utility planning and hyperscale development schedules (Figure 2).

The Utility Planning Implications

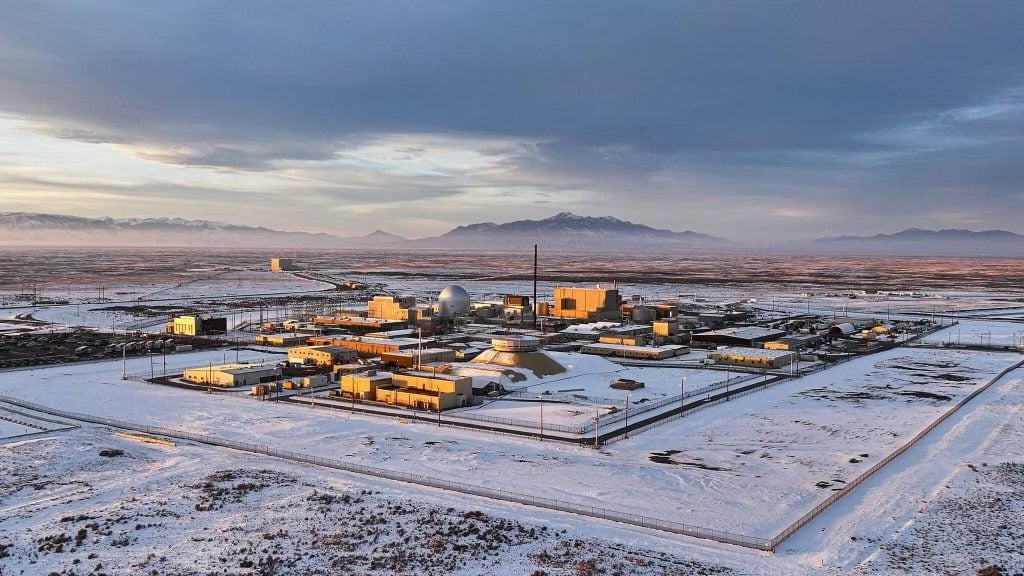

Utilities must prepare for gigawatt-scale digital infrastructure campuses. Hyperscale developers are already exploring sites capable of supporting 1 GW or more of electrical demand, approximately the output of a large nuclear generating unit. This is prompting calls for coordinated expansion of generation capacity, transmission networks, substation infrastructure, and grid flexibility resources. The question is whether those calls will be met. The answer may lie in behind-the-meter (BTM) solutions, which generate power on-site to circumvent the traditional electric grid.

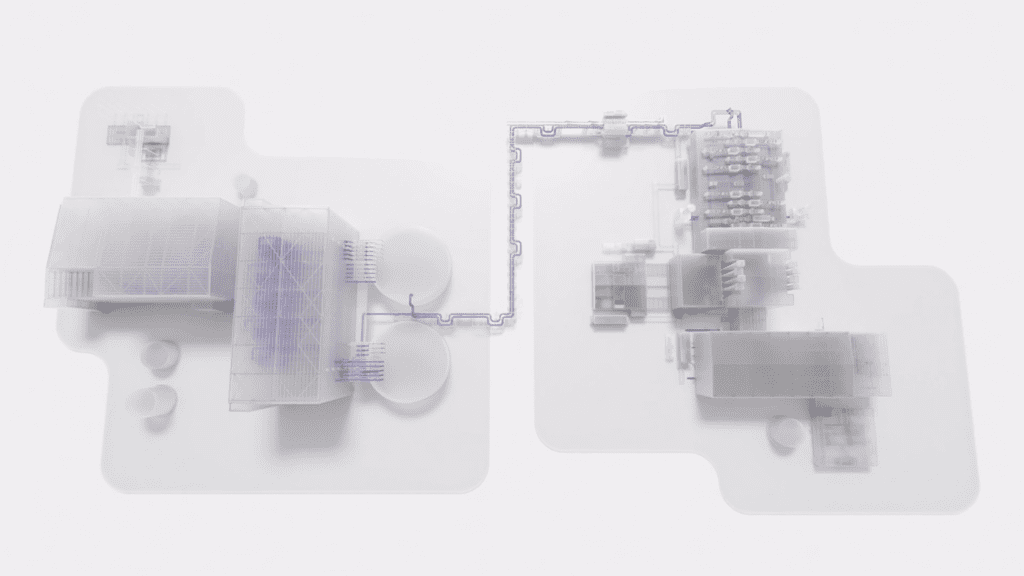

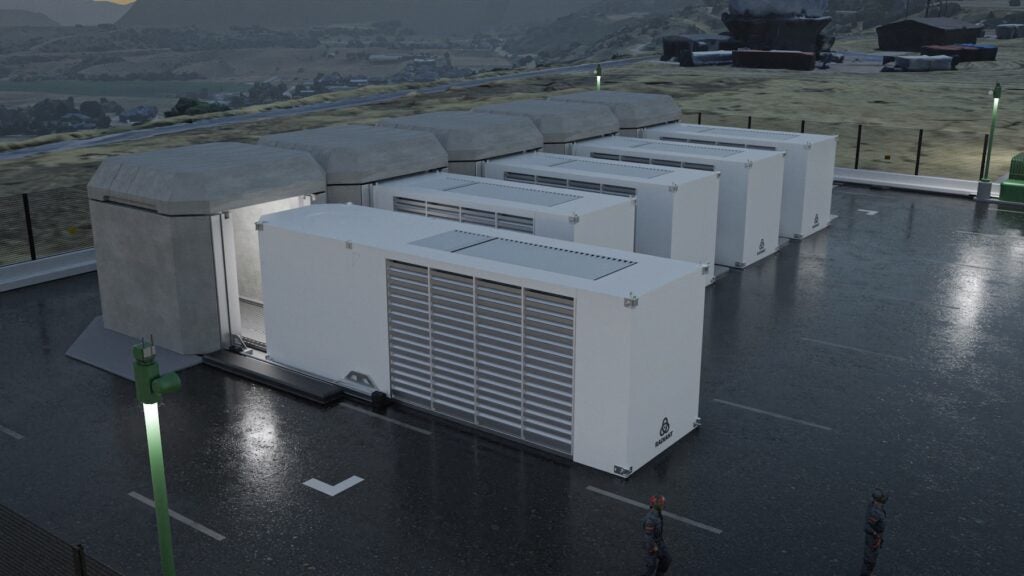

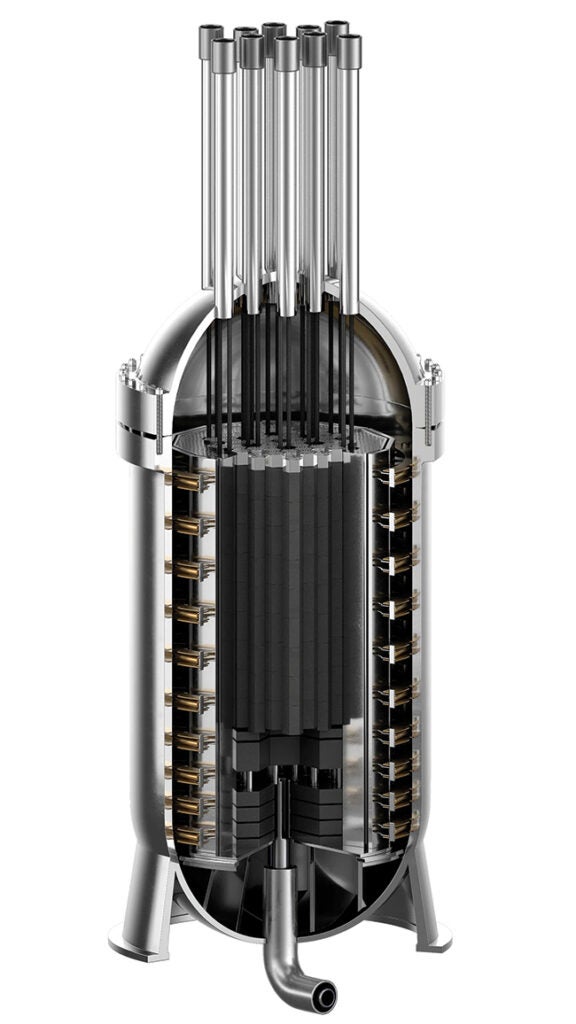

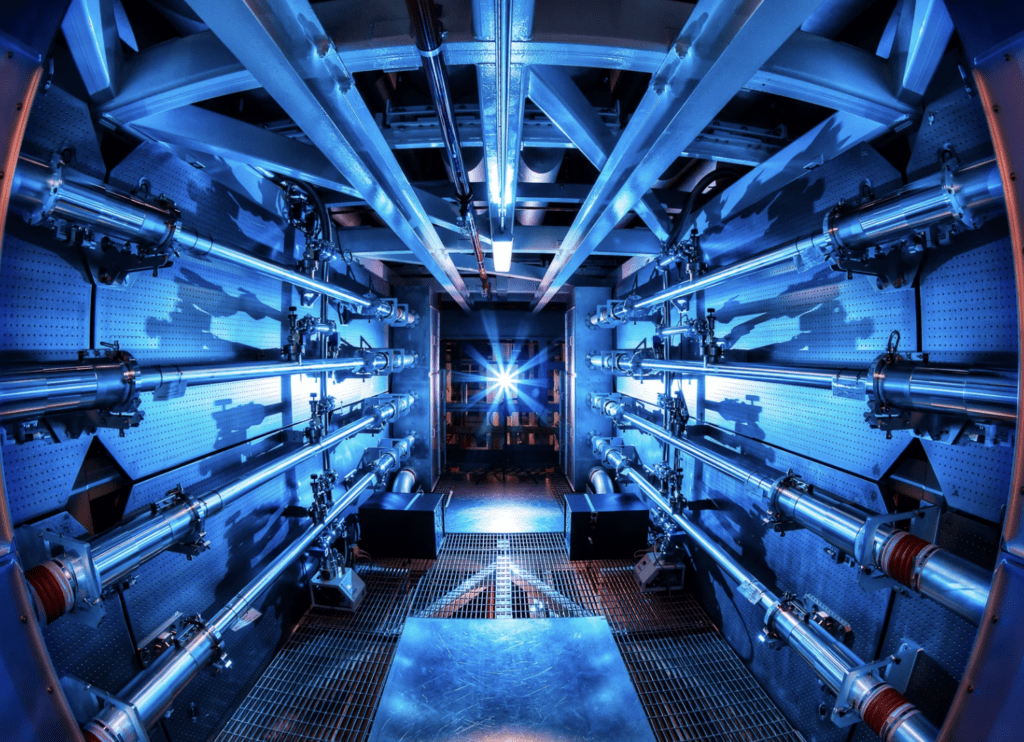

However, these methods have attracted plenty of criticism. One of the more popular BTM methods is deploying small-scale nuclear reactors capable of producing 50–300 MW to provide constant, weather-independent baseload power. Another alternative is the hydrogen fuel cell, which converts hydrogen into electricity and produces water as a byproduct that can assist with cooling. Then there are enhanced geothermal systems (EGS), which involve drilling several miles down and pumping water into hot rock to create steam that drives turbines.

Until astro data centers built in space and powered by the limitless energy of the sun become the norm, creative earth-bound solutions are paramount for power supply. No single BTM solution will fill the power void; it will take a combined, thoughtful effort to deploy these methods where they make the most sense. These deployments will expand the regions builders can target, relieve already strained power grids, and reduce the hidden grid costs borne by consumers by limiting the number of substations and transmission lines needed.

—Ryne Friedman is an associate at hi-tequity where he supports data center site selection and location analysis. His work focuses on infrastructure readiness, regulatory conditions, and commercial viability across prospective markets.