The conflict in Iran has rekindled a debate that had been building quietly for 24 months in technology circles: energy. Not as a footnote to the artificial intelligence (AI) story, but as a structural constraint at its center.

There is an important structural fact about current AI pricing that rarely surfaces in business conversations: the prices being paid today do not cover costs.

COMMENTARY

According to Axios, OpenAI is projected to burn $14 billion in 2026, up from $8 billion to $9 billion in 2025. Anthropic’s margins, while improving, remain under pressure from higher-than-expected inference costs. This is the “millennial lifestyle subsidy” applied to AI, in the same tradition as venture capital-funded Uber rides or Amazon’s years of zero profit. The gap between what users pay and what it costs to run these models is being funded by investor capital, not sustainable operations.

Both OpenAI and Anthropic are expected to go public. Public market investors will demand margin expansion, not subsidized pricing. Today's price floor is explicitly temporary.

This matters because businesses building on current AI pricing are making a structural assumption that has not yet been tested. A company pricing its product around $3 per million input tokens is working off a cost floor held down by investor capital and intense competitive pressure. When that floor shifts, margin calculations shift simultaneously across every deployment. The question is not whether repricing happens, but when.

The Energy Variable

Beneath the investor subsidy sits a harder and older problem: electricity.

European wholesale electricity prices went from roughly €35/MWh in 2020 to above €500/MWh at their peak in 2022, driven by the Ukraine conflict and the collapse of Russian gas supply. They have since moderated, but IEA (International Energy Agency) data shows EU electricity prices for energy-intensive industries in 2025 still running at roughly twice U.S. levels. Now, with the Middle East conflict disrupting LNG (liquefied natural gas) supply, Wood Mackenzie estimates that roughly 19% of global LNG exports have been removed from markets weekly, pushing European electricity prices sharply upward again.

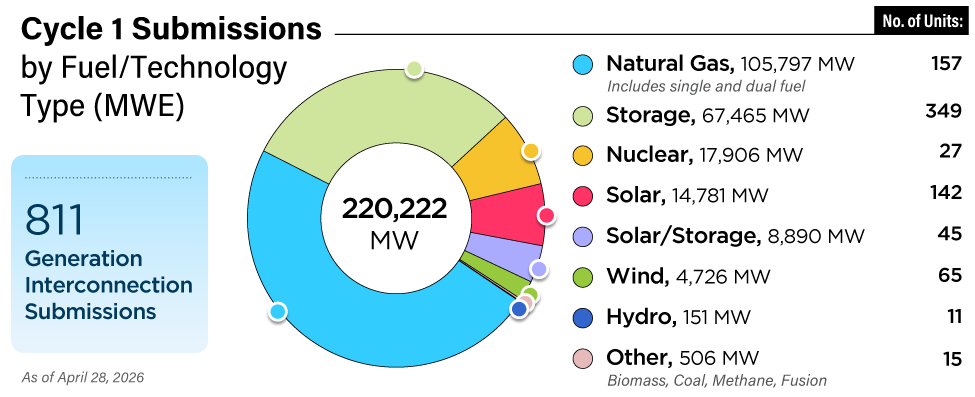

By 2026, total global data center electricity consumption is projected to exceed 1,000 TWh per year, roughly equivalent to Japan's annual electricity consumption. AI inference is driving that growth, and the more capable the models become, the more compute each query requires.

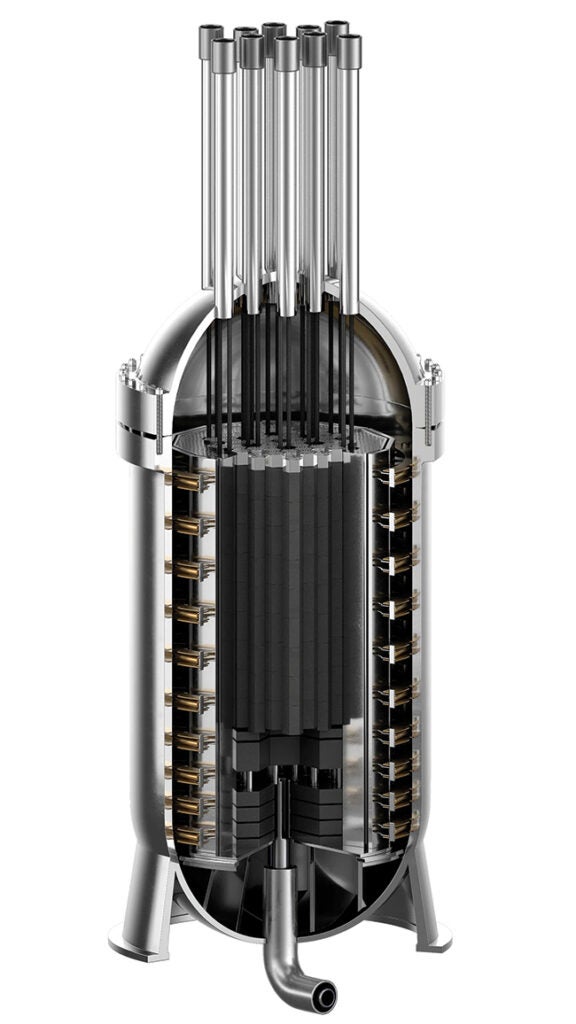

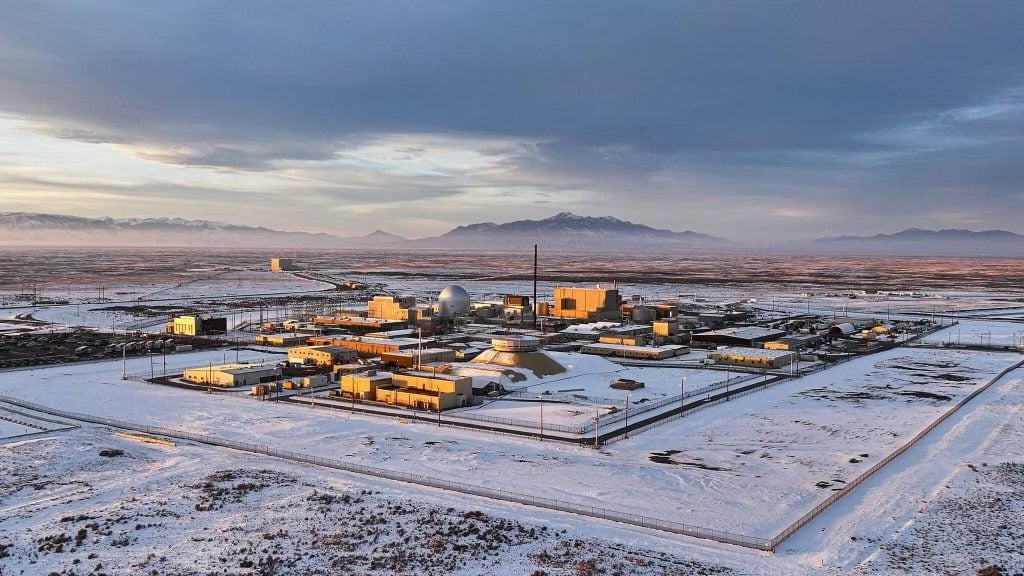

Big Tech understands the exposure. Microsoft signed a 20-year power purchase agreement with Constellation Energy to restart the undamaged Unit 1 reactor at Three Mile Island and provide nuclear baseload for its data centers. Three Mile Island was the site of the worst nuclear accident in U.S. history in 1979, an event that effectively froze new nuclear construction for decades. Microsoft backing its revival signals how seriously these companies view the energy constraint. Amazon and Google are making equivalent bets on small modular reactor technology that does not yet exist at commercial scale.

The Predictability Problem

The level of energy costs matters less than their predictability. A business can build a viable model around high, stable costs. What breaks cost models is volatility.

Token prices are set by providers who must forecast energy costs years in advance. When those costs are highly volatile, providers face an unpalatable choice: absorb energy cost swings in their margins, or pass volatility through to customers whose own pricing models cannot absorb it either. Right now, investor capital is absorbing the gap. That arrangement will not survive an IPO.

If the cost of running AI at scale is unpredictable beyond an 18-month horizon, it becomes structurally difficult to build the business cases that drive enterprise adoption. The energy problem does not just constrain infrastructure. It constrains adoption itself.

Three Things Worth Tracking For Business Leaders

First, stress-test your AI unit economics against a 2x to 3x increase in compute costs. An increase of that size could plausibly arrive within a three-year planning horizon.

Second, treat energy access as a location and architecture decision, not just a procurement question. On-premise or edge deployment for appropriate workloads is not only a privacy argument; it is increasingly an economic one.

Third, watch efficiency metrics as closely as capability metrics. The next wave of competitive advantage will not go to the largest model. It will go to the model that delivers adequate performance at the lowest and most predictable cost per query. That is what survives a repricing cycle.
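
The first of these, the stress test, is simple arithmetic and can be sketched in a few lines of Python. This is an illustrative sketch only, not a recommended model: the `Workload` fields, the per-request revenue, the token count and the non-AI cost are all hypothetical numbers chosen for the example, and only the $3 per million input tokens figure comes from the discussion above. Output tokens are ignored for simplicity.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    """Hypothetical per-request profile of an AI-backed product feature."""
    revenue_per_request: float      # USD charged to the customer per request
    input_tokens: int               # average input tokens sent per request
    input_price_per_million: float  # USD per million input tokens
    other_cost_per_request: float   # USD of non-AI cost per request


def token_cost(w: Workload, compute_multiplier: float = 1.0) -> float:
    """USD spent on input tokens per request, after scaling the token price."""
    return w.input_tokens / 1_000_000 * w.input_price_per_million * compute_multiplier


def gross_margin(w: Workload, compute_multiplier: float = 1.0) -> float:
    """Gross margin per request as a fraction of revenue."""
    cost = token_cost(w, compute_multiplier) + w.other_cost_per_request
    return (w.revenue_per_request - cost) / w.revenue_per_request


def stress_test(w: Workload, multipliers=(1.0, 2.0, 3.0)) -> dict:
    """Margin at each assumed compute-cost multiplier."""
    return {m: gross_margin(w, m) for m in multipliers}


def breakeven_multiplier(w: Workload) -> float:
    """Compute-cost multiplier at which the per-request margin reaches zero."""
    return (w.revenue_per_request - w.other_cost_per_request) / token_cost(w)


# Assumed example figures, for illustration only.
example = Workload(
    revenue_per_request=0.05,
    input_tokens=5_000,
    input_price_per_million=3.0,
    other_cost_per_request=0.01,
)

if __name__ == "__main__":
    for multiplier, margin in stress_test(example).items():
        print(f"{multiplier:.0f}x compute cost -> margin {margin:.0%}")
    print(f"Break-even multiplier: {breakeven_multiplier(example):.2f}x")
```

With these assumed figures, a 50% margin at today's prices falls to 20% if compute costs double and turns negative if they triple. The break-even multiplier is the more useful number to track, because it shows how much repricing a given deployment can absorb before it loses money.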

—Jeremy Beaufils is executive director of the Digital Disruption Chair at ESSEC Business School.