Digitalization, driven by big data, the industrial internet of things (IIoT), and even artificial intelligence (AI), has made its mark on the power industry, allowing for greater transparency into operations and helping to ramp up efficiency, reliability, and profitability. However, as some experts point out, power operations are quickly growing ever more complex, and “classical” systems may reach a level of “saturation” in their ability to solve problems. One promising emerging solution lies in quantum computing, a relatively new field that leverages the unique rules of quantum mechanics to process information.

Classical computing systems rely on semiconductor technologies like silicon chips that use “bits” as a basic unit of information for the representation of two possible binary states, 0 or 1, which are used in coding, explained Heike Riel, an IBM fellow, who both heads the digital giant’s Science & Technology division and leads its Research Quantum division in Europe. In contrast, processors in quantum computers use quantum systems—atoms, ions, photons, or electrons—and their properties to represent bits. A quantum bit—or a “qubit”—is a quantum particle in a “superposition” that represents a linear combination of all possible configurations of a state between 1 and 0, essentially allowing it to represent more interesting or useful states than a classical bit, she told POWER.

Other quantum properties like interference and entanglement can also influence how quantum computing is applied. When a qubit is measured, it intrinsically “collapses” the superposition state and takes on a classical binary state of either 1 or 0.

“The contributions to a particular outcome from a particular superposition state can be both positive and negative, meaning that the various states in superposition can interfere constructively or destructively to enhance or suppress certain outcomes,” explained Annarita Giani, a senior complex system scientist at GE Research, and Zachary Eldredge, a technology manager at the Department of Energy (DOE), in a recent paper on quantum computing’s renewable energy applications. “As a result, a clever measurement—although it only yields a single result—can hold tell-tale signatures of a vast number of superposed states.”
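The interference Giani and Eldredge describe can be illustrated with a single simulated qubit. The sketch below is a minimal illustration rather than anything taken from their paper: it assumes a state written as two real amplitudes and uses the standard Hadamard gate, and the helper names `apply_hadamard` and `probabilities` are hypothetical. One gate creates an equal superposition; a second gate makes the two paths to outcome 1 cancel and the two paths to outcome 0 reinforce each other.

```python
import math

def apply_hadamard(state):
    """Apply a Hadamard gate to a single-qubit state (amp0, amp1)."""
    a0, a1 = state
    s = 1 / math.sqrt(2)
    return (s * (a0 + a1), s * (a0 - a1))

def probabilities(state):
    """Born rule: outcome probabilities are squared amplitude magnitudes."""
    return tuple(abs(a) ** 2 for a in state)

zero = (1.0, 0.0)                        # qubit prepared in the classical state 0
superposed = apply_hadamard(zero)        # equal superposition of 0 and 1
recombined = apply_hadamard(superposed)  # amplitudes for 1 cancel, those for 0 add

print(probabilities(superposed))  # roughly (0.5, 0.5)
print(probabilities(recombined))  # roughly (1.0, 0.0)
```

Measuring the superposed qubit would give 0 or 1 with equal odds, while the recombined qubit gives 0 essentially every time, which is the constructive and destructive interference at work.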

Entanglement, the phenomenon that Albert Einstein described as “spooky action at a distance,” allows two separate quantum systems to be bound together, no matter how distant they are. Together, these properties offer algorithmic advantages that can make quantum approaches faster than classical alternatives for certain problems, allowing them to tackle larger calculations in the same amount of time. Groups of qubits in superposition can also create elaborate, complex, and multidimensional computational spaces, allowing complex problems to be represented in new ways, Riel said.

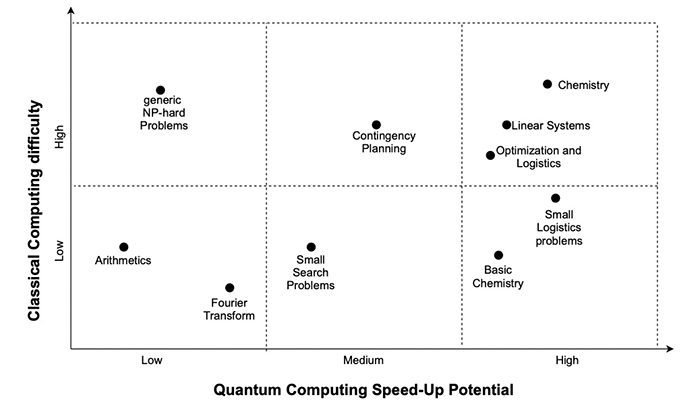

3. This schematic representation illustrates the general landscape of computing problems. The circles represent problems with low, medium, and high potential for quantum speedups compared to their classical difficulty. Source: Department of Energy

Reaching Where Classical Computers Cannot

“In reality, there are mathematical problems which we cannot solve with classical computers,” Riel said. These include “several classes of problems” (Figure 3), among them problems “where the complexity increases exponentially with the number of parameters. Some of these problems are also optimization problems,” she said. “For some of these problems, we have learned how to approximate some, but for [others] we just cannot solve them because the approximation is not good enough to give us a good result,” given the scaling limitations of a classical computing system.

“To double the level [of] performance [of a classical system], you must, roughly speaking, double the number of transistors,” Riel said. “In quantum computing, theoretically, you add one qubit and you double the performance because of its exponential scaling. And this enables quantum computing in the future to solve problems which classical computers cannot solve, and will never be able to solve, because of this enormous state space you can create with your qubits.” For example, “to achieve exponential scaling with classical computers, they would need to be as large as the earth,” she said. By contrast, a register of only about 300 qubits spans more basis states than the estimated number of atoms in the observable universe.
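The arithmetic behind that scaling claim is easy to check. The following sketch assumes two figures that do not come from the article: a common order-of-magnitude estimate of 10^80 atoms in the observable universe, and 16 bytes to store one complex amplitude on a classical machine. The function names are hypothetical.

```python
ATOMS_IN_UNIVERSE = 10 ** 80   # common order-of-magnitude estimate (assumption)
BYTES_PER_AMPLITUDE = 16       # one complex number as two 64-bit floats (assumption)

def state_space_size(num_qubits):
    """Number of basis states spanned by num_qubits qubits."""
    return 2 ** num_qubits

def classical_memory_bytes(num_qubits):
    """Bytes needed to store every amplitude of a general state classically."""
    return state_space_size(num_qubits) * BYTES_PER_AMPLITUDE

# Qubit counts mentioned in the article, from IBM's first processor to 300.
for n in (5, 65, 127, 300):
    print(n, "qubits ->", f"{state_space_size(n):.3e}", "basis states")

print(state_space_size(300) > ATOMS_IN_UNIVERSE)                    # True
print(classical_memory_bytes(30) // 2 ** 30, "GiB for 30 qubits")   # 16 GiB
```

Each added qubit doubles the count of basis states, so the memory a classical simulator needs doubles as well. That is one way to read Riel's point that classical hardware cannot keep pace.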

Despite these remarkable properties, quantum states are quite fragile: any unintended interaction with the outside environment can act like a measurement and introduce errors. That’s why quantum computers must be exceptionally well isolated and often cooled to very low temperatures.

While companies like IBM, Google, Rigetti, and D-Wave have recently made “great strides” in producing quantum hardware, no general-purpose, large-scale quantum computer yet exists. And for now, fully error-corrected quantum computers are still years away, say Giani and Eldredge. Industrial research firms, meanwhile, posit that perhaps bigger roadblocks to quantum deployment are the shortage of qualified workers and the limited availability of development environments that can address next-generation quantum computers.

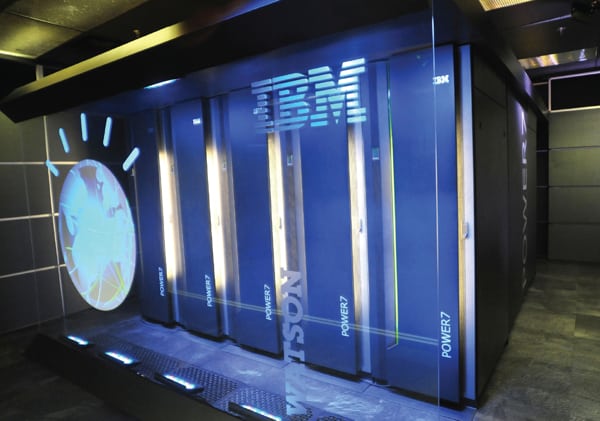

Quantum as a Service

Still, acting on its optimism about the “quantum advantage,” IBM already offers access to developing infrastructure from its IBM Quantum division through IBM Cloud. The service also includes its quantum expertise and its Qiskit open-source quantum software developer tools. Far from being dependent on a “continuous and slow” evolution, IBM’s approach has been to align the “quantum advantage” to the “business advantage,” Riel said.

“We started out with 5 qubits in May 2016, and last year in August we demonstrated a quantum processor with 65 qubits. This year, we want to demonstrate 127 qubits in a processor, and next year 433. A year later, we want to break the 1,000-qubit barrier,” she said. “As you can imagine for all these new processors, we have to implement new technology to make them work. It’s a challenging field to be in to build hardware, but in parallel, we’re also building the software to make quantum computers much easier to use. You don’t need to be a quantum physicist as you did five years ago to use a quantum computer today. Today, you only need to know Python and you can log on.”

As Mahesh Sudhakaran, general manager of IBM’s Energy, Sustainability, and Utilities division, explained to POWER in September, several energy firms are already actively exploring quantum computing’s potentially broad capabilities to resolve a suite of existing and emerging industrial issues. Among IBM’s sizable list of case studies is work with vehicle company Daimler on solutions for electric vehicles; ExxonMobil to refine maritime logistics; and Mitsubishi Chemical to explore new forms of light, as well as to develop lithium-oxygen batteries.

Quantum Solutions for the Power Space

On the power front, IBM recently announced a partnership with E.ON to explore quantum solutions for the German utility’s rapidly decentralizing energy workflow. E.ON will, for example, explore how best to integrate vehicle-to-grid technologies into the distribution grid, focusing on the coordination and control of these widespread smaller systems.

Along with solving optimization problems, which Sudhakaran said has both a localized and larger grid collective potential, quantum computing’s most notable near-term use cases in the rapidly morphing power space will include machine learning and molecule modeling. “I think quantum is going to be transformational in the coming years because machine learning, molecule physics, and optimization are 3D problems that are going to define how clean electrification and sustainability become real,” he said. “In fact, some of the product challenges around sustainability will require quantum as a part of their portfolio to address the challenge.”

E.ON, an Essen-based utility service giant that has markedly transformed its operations through digitalization to keep pace with “multiplying” grid connection points and “fragmenting” energy feed-ins, is notably also exploring other solutions with other quantum computing technology developers, including Microsoft. During a recent E.ON-hosted “Energy Innovation” presentation, Juan Bernabè Moreno, chief data officer and global head of analytics and AI at E.ON, outlined several other potential areas for quantum computing applications. Along with better battery development, decentralized asset management could benefit from large-scale optimization informed by a better understanding of how those assets interact on the grid, he said. He also pointed to the potential development of climate models that better respond to renewable variability, and to optimized layouts for power generation assets and for gas and heat networks.

“Quantum systems are especially adequate to model graphs, and all the [power and gas] networks that we have end up being a graph structure,” he said. “Graphs can be mapped into a quantum computer and optimized nicely.”

On the financial front, quantum systems could optimize energy procurement, trading, and hedging. That’s especially important for E.ON, which has divested its large-scale generation sources and must better anticipate prices and power demand, Moreno said. But quantum systems could also support more effective power regulation through better simulations, and perhaps lead to more refined engineering that caters to those regulations, he suggested.

Matched for Complexity

Stephen Jordan, a principal researcher at Microsoft Quantum, meanwhile, said his company has explored quantum applications to resolve the complex unit commitment issue, which involves optimally scheduling the startup and shutdown of gas turbines and other generation units to achieve energy supply and demand matches at minimum fuel costs and minimum carbon emissions. “This is a very complicated optimization problem that’s been hard to solve” because the number of production units can be large, and different types of units have different cost constraints, Jordan said. It’s been studied for many years, but conventional methods struggle with it. “We’ve found that methods that we have can parallelize very nicely with these quantum-inspired optimization methods. We can run in parallel up to 50 times faster on the benchmark instances that we’ve looked at compared to the industry-standard solver for these unit commitment problems,” he said.
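To see why unit commitment grows hard so quickly, consider a toy version. The sketch below is not Microsoft's quantum-inspired method or any industry solver: it is a plain brute-force search over every on/off schedule, and the three units, their costs, and the four-hour demand profile are all invented for illustration. It also ignores emissions, ramp limits, and minimum output levels, which real formulations include.

```python
from itertools import product

# Hypothetical units: (name, capacity_MW, no_load_cost, cost_per_MWh, startup_cost)
UNITS = [
    ("gas_a", 100, 500, 20, 1000),
    ("gas_b", 80, 300, 30, 400),
    ("peaker", 50, 100, 60, 50),
]
DEMAND = [90, 160, 210, 120]  # hypothetical hourly demand in MW

def period_cost(on_flags, demand):
    """Cheapest dispatch cost for one period, or None if demand cannot be met."""
    committed = [u for u, on in zip(UNITS, on_flags) if on]
    if sum(u[1] for u in committed) < demand:
        return None
    cost = sum(u[2] for u in committed)            # no-load cost of running units
    remaining = demand
    for _, cap, _, marginal, _ in sorted(committed, key=lambda u: u[3]):
        output = min(cap, remaining)               # fill cheapest units first
        cost += output * marginal
        remaining -= output
    return cost

def solve_unit_commitment():
    """Enumerate every on/off schedule and keep the cheapest feasible one."""
    best_cost, best_schedule = None, None
    patterns = list(product((0, 1), repeat=len(UNITS)))
    for schedule in product(patterns, repeat=len(DEMAND)):
        total, previous = 0, (0,) * len(UNITS)     # all units start offline
        for flags, demand in zip(schedule, DEMAND):
            cost = period_cost(flags, demand)
            if cost is None:
                break                              # infeasible schedule, skip it
            startups = sum(u[4] for u, was, now in zip(UNITS, previous, flags)
                           if now and not was)
            total += cost + startups
            previous = flags
        else:
            if best_cost is None or total < best_cost:
                best_cost, best_schedule = total, schedule
    return best_cost, best_schedule

best_cost, best_schedule = solve_unit_commitment()
print(best_cost, best_schedule)
print(2 ** (len(UNITS) * len(DEMAND)), "schedules searched")
```

Three units over four hours already means 4,096 candidate schedules, and every extra unit or hour doubles the count. A fleet of dozens of units over a day or a week puts exhaustive search out of reach, which is why heuristic, quantum-inspired, and quantum methods are being studied.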

Giani and Eldredge, in their paper, offer an in-depth look at the possible quantum approaches to power sector issues, weighing their potential against challenges. One approach that could benefit from less-sophisticated hardware (and which the researchers therefore conclude may have a relatively “short-term” developmental timeline) is quantum annealing, which exploits quantum effects such as tunneling to find low-energy solutions to optimization problems. The approach could be used to resolve complex issues related to unit commitment, maintenance scheduling, resource planning, load scheduling, and machine learning, they suggest. While Giani and Eldredge caution that ongoing work is exploratory and significant technical challenges and uncertainties exist, they conclude that “early research in unit commitment and other power systems problems on quantum devices is already quite promising and showing potential quantum advantage on small problems.”

Another interesting approach the researchers highlight is Grover’s algorithm, a technique for finding a marked item in an unsorted database. It offers a quadratic quantum “speedup”: the number of lookups it needs grows with the square root of the size of the database rather than with the size itself. “Although this search for a marked item is not directly related to power system reliability, Grover’s algorithm can be extended to perform other types of search problems,” the researchers note. “As an example, if a quantum computer can be programmed to recognize unsafe contingencies in power systems, amplitude estimation algorithms could be used to search through sets of possible scenarios to identify which events might lead to widespread blackouts or other grid instabilities.”
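The square-root behavior of Grover's algorithm can be reproduced in a small classical simulation. The sketch below is a minimal illustration, not code from the Giani and Eldredge paper: it tracks the amplitudes of a hypothetical 1,024-item search directly in a Python list, uses the textbook iteration count of about (π/4)·√N, and does not cover the amplitude estimation extension the researchers mention. The function name and the marked index are arbitrary choices.

```python
import math

def grover_search(num_items, marked_index):
    """Simulate Grover's algorithm on a state vector of real amplitudes."""
    amps = [1 / math.sqrt(num_items)] * num_items   # uniform superposition
    iterations = math.floor(math.pi / 4 * math.sqrt(num_items))
    for _ in range(iterations):
        amps[marked_index] = -amps[marked_index]    # oracle: flip the marked sign
        mean = sum(amps) / num_items
        amps = [2 * mean - a for a in amps]         # diffusion: invert about the mean
    return iterations, amps[marked_index] ** 2

iterations, success_probability = grover_search(num_items=1024, marked_index=421)
print(iterations)           # 25 oracle calls
print(success_probability)  # close to 1
```

A classical search through 1,024 unsorted items would check about half of them on average, while the simulated quantum routine needs 25 oracle calls to make the marked item the near-certain measurement result. The simulation itself still stores all 1,024 amplitudes, so it shows the query count, not a real speedup on classical hardware.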

—Sonal Patel is a POWER senior associate editor.