As demand for artificial intelligence (AI), data, and cloud services increases, the U.S. is seeing a rapid rise in data center development and a surge in demand for power to serve the additional load. Leading research estimates that data centers could consume up to 9% of U.S. electricity generation annually by 2030, up from 4% in 2023. The ability to source such power is a critical-path item in the successful development of a data center and one that can present significant challenges. Below are five key topics to understand about power supply to data centers.

Data Centers Have High Around-the-Clock Energy Demand. Data centers consume an immense amount of power. This power usage is primarily driven by servers/computing and cooling systems, followed by networking equipment and storage drives. While certain types of data centers, such as crypto mining data centers, can adjust their energy usage in response to power prices and grid conditions, AI data centers, one of the primary drivers of the explosive demand, are different. Because demand for their use is constant, AI operations have sustained high power needs and require power availability in excess of 99.9%. Such a level of availability is more than a single generation source (or the grid) can generally provide, and thus redundant power supplies are typically required.
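To put the availability figure in concrete terms, the short sketch below converts an availability percentage into hours of downtime per year and shows how two redundant sources can together exceed what either provides alone. It is an illustration only: the 99.5% figure for a single source is a hypothetical value, not one from this article, and the calculation assumes the two sources fail independently, which real-world supplies often do not.

```python
HOURS_PER_YEAR = 8760  # non-leap year


def annual_downtime_hours(availability: float) -> float:
    """Hours per year a supply is unavailable at the given availability (0 to 1)."""
    return (1.0 - availability) * HOURS_PER_YEAR


def combined_availability(availabilities: list[float]) -> float:
    """Availability of redundant sources where any one source can carry the load.

    Assumes failures are statistically independent (a simplifying assumption).
    """
    all_down = 1.0
    for a in availabilities:
        all_down *= (1.0 - a)  # probability that every source is down at once
    return 1.0 - all_down


if __name__ == "__main__":
    target = 0.999          # the 99.9% threshold mentioned in the text
    single_source = 0.995   # hypothetical availability of one source

    print(f"Downtime allowed at 99.9%: {annual_downtime_hours(target):.2f} h/yr")
    print(f"Downtime of one source:    {annual_downtime_hours(single_source):.2f} h/yr")

    redundant = combined_availability([single_source, single_source])
    print(f"Two redundant sources:     {redundant:.6f} availability")
    print(f"Meets 99.9% target:        {redundant > target}")
```

Under these assumptions, neither source meets the threshold alone, but the pair does, which is the basic reason redundant supply is typically built into data center power design.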

Data Centers Face Significant Challenges in Obtaining Grid-Supplied Power. For a data center to obtain power from the grid, it must go through a lengthy grid interconnection process with the system operator. During this process, studies are performed to determine the impact of the proposed load on the grid and whether any upgrades will be needed to interconnect that load. The interconnection process typically takes multiple years, with queue timelines at major system operators currently ranging from one and a half to four years. This long lead time can be attributed to the increasing volume of interconnection requests, outdated grid infrastructure, and lengthy regulatory processes. Despite efforts to mitigate these delays through process reforms, infrastructure upgrades, and increased transparency, the high volume of requests continues to strain the capacity of system operators to process them efficiently. Assets with existing interconnection agreements or queue positions that can facilitate interconnection of a data center on an expedited time frame (in comparison to a new request) are in high demand.

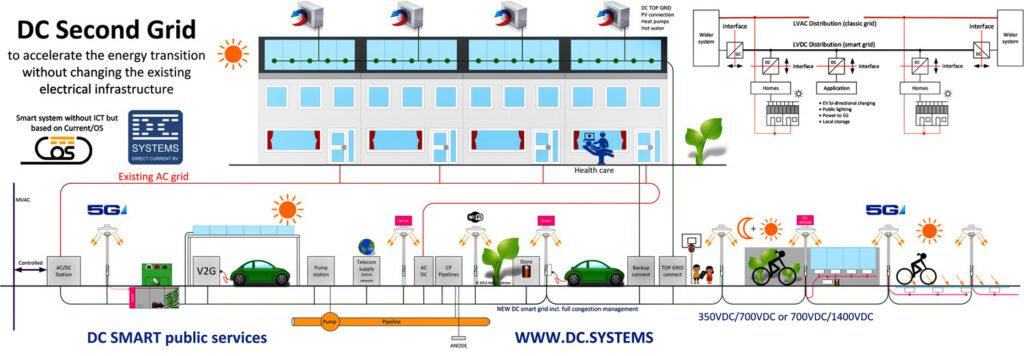

Data Centers Are Increasingly Looking to Alternatives to Grid-Supplied Power. Because of the challenges in obtaining grid-supplied power, data centers are increasingly turning to on-site generation and looking to “behind-the-wire/meter” or “off-grid” solutions as a primary source of power supply.

In a “behind-the-meter” arrangement, a data center and generation source are located behind the same interconnection to the grid, enabling the data center to draw its power directly from the generation source prior to turning to the grid as a backstop. This enables the data center to potentially avoid certain grid-related costs that are assessed to load measured at the grid interconnection point and, if the data center is collocated with existing generation, speed up the interconnection process. In a completely “off-grid” arrangement, a data center is not interconnected to the grid and sources its power from power generation that directly serves the data center. This allows a data center to completely avoid the interconnection process and grid charges, and have complete control of the power sourcing (albeit without the grid as a backstop).

The exact strategy that can be employed, however, depends on the jurisdiction in which the data center is located and a complex layering of federal and state law related to both retail and wholesale power. Notably, a recent Federal Energy Regulatory Commission (FERC) order called into question certain aspects of collocation of data centers at existing generation facilities in FERC jurisdictions (including concerns related to reliability and costs to the consumer) and denied a request to allow an interconnection agreement to be amended. That order does not, however, apply to data centers in Texas, where data center activity has been high.

Data Center Power Supply Cost Varies by Location. Due to the nature of the electric power grid, the price of power fluctuates by region and can even be drastically different within a given region or at a given time of day. This variance is due to local energy policies and power market structures, the availability of natural resources/fuel used to generate power, the infrastructure in place for power generation and distribution, and the local demand for power. Data centers are deploying various strategies to take advantage of the lowest priced power. For example, a number of data centers are collocating behind the meter with renewable generation assets located in areas with depressed power prices (such as areas with high renewable penetration and transmission constraints). Such an arrangement allows the data center to get power on an expedited basis and benefits the renewable generator by ensuring that it receives a minimum price for power, including during times when the generator may otherwise curtail generation due to negative power prices.
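The benefit of a minimum price to a renewable generator can be illustrated with a small calculation. The sketch below compares two simplified cases: a generator that sells at the market price and curtails whenever prices are negative, and a generator that earns at least a fixed floor price on every megawatt-hour through an arrangement with a collocated data center. All prices, output figures, and the floor itself are hypothetical, and actual contract terms vary widely.

```python
def merchant_revenue(prices: list[float], output_mwh: list[float]) -> float:
    """Revenue from selling at market price, curtailing in negative-price hours."""
    return sum(p * q for p, q in zip(prices, output_mwh) if p >= 0)


def floor_revenue(prices: list[float], output_mwh: list[float],
                  floor_price: float) -> float:
    """Revenue when every MWh earns the higher of the market price or a floor.

    The 'higher of market or floor' structure is an illustrative assumption.
    """
    return sum(max(p, floor_price) * q for p, q in zip(prices, output_mwh))


if __name__ == "__main__":
    # Hypothetical hourly market prices ($/MWh) in a transmission-constrained area
    prices = [-10.0, -5.0, 15.0, 40.0]
    # Hypothetical generator output in each hour (MWh)
    output = [100.0, 100.0, 100.0, 100.0]
    floor = 20.0  # hypothetical minimum price ($/MWh)

    print(f"Market-only revenue: ${merchant_revenue(prices, output):,.0f}")
    print(f"Revenue with floor:  ${floor_revenue(prices, output, floor):,.0f}")
```

In this made-up example the generator earns nothing in the two negative-price hours when selling only into the market, whereas the floor keeps it producing and earning revenue in every hour.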

Availability of Power Supply Is a Key Driver in Siting a Data Center, but It Is Not the Only Driver. While the ability to economically source power is a key driver in the siting of data centers, other important factors include access to water and fiber connectivity. Data centers require substantial amounts of water for cooling purposes, and proximity to a reliable water source can reduce operational costs and ensure the efficient functioning of cooling systems. Data centers, especially those involved in AI and other data-intensive operations, also require high-speed internet connectivity; proximity to fiber optic networks ensures low latency and high bandwidth, which are essential for efficient data processing and transmission.

—Cliff Vrielink is a partner, Jessica Adkins is a partner, and Tiph Kugener is an associate at Sidley Austin LLP.